Closing the Shadow AI Governance Gap

An employee pastes a customer contract into ChatGPT to summarize key clauses. A developer uses Claude to debug production code by sharing internal logic. None of this activity is tracked, approved, or logged.

This is the shadow AI governance gap. AI tools are being used in real workflows, yet no one can say who is using them, what data is being shared, or where that data is going.

In this article, we will break down what shadow AI actually is, why governance is lagging behind adoption, and how enterprises can close the shadow AI governance gap.

TL;DR

- Shadow AI is the unapproved use of AI tools, where sensitive data is shared without governance.

- 63% of enterprises lack a shadow AI policy, leaving usage untracked and uncontrolled.

- The gap exists due to rapid AI adoption, unclear ownership, and lack of visibility into usage.

- Closing the gap requires monitoring AI usage, enforcing policies, and implementing access controls.

- CloudEagle.ai enables full visibility, governance, and continuous control over shadow AI across the enterprise.

1. What is Shadow AI? How Is It Different From Shadow IT?

Shadow AI is the use of AI tools without IT or security approval. Employees input company data into tools like ChatGPT or Claude outside governed environments.

On the other hand, shadow IT refers to unapproved software usage but does not involve AI-driven data processing.

- Shadow AI Involves Data Entering AI Systems: Employees paste contracts, code, or reports into AI tools for analysis or generation.

- Shadow IT Involves Unapproved Software Usage: Teams adopt tools without approval, but data typically stays within those systems.

- AI Outputs Influence Decisions And Code: Shadow AI affects business decisions and production code through generated outputs.

This distinction matters because AI changes how data is processed. According to Business Think, 63% of enterprises do not have a formal shadow AI policy, so most AI usage happens without defined controls.

Shadow AI is not just about using unapproved tools. It is about sensitive data being processed and reused by AI systems without governance. Without a way to detect shadow AI, that exposure goes unnoticed until it causes a problem.

2. Where Is the Shadow AI Governance Gap Already Happening?

The shadow AI governance gap is already happening wherever employees use AI tools without controls or policies. The gap is not theoretical. It shows up in specific actions across teams.

Developers Sharing Code With AI Tools

Engineers paste internal code into Claude or ChatGPT to debug issues or generate functions.

Business Teams Uploading Sensitive Documents

Teams upload contracts, financial data, or customer information into AI tools for summarization.

Marketing And Sales Using AI For Content And Insights

Customer data and campaign details are processed through AI tools without approval.

These activities happen outside AI governance frameworks, and leadership is often unaware of them.

- No Central Visibility Into AI Usage: Security teams cannot track which tools are being used or what data is shared.

- No Defined Identity And Access Controls: Anyone in the organization can use AI tools without restrictions.

- No Audit Trail For AI Interactions: There is no record of what data was entered or what outputs were generated.

This is fundamentally an identity and access problem. As Charles T. Phillips, Salesforce Operations Manager at UT Health San Antonio, said on CloudEagle’s podcast:

“Strong scalable identity governance is only going to work if leadership not only is involved, but they've also got to champion it and embed it into the organization's operations.”

Without leadership-driven governance, AI usage spreads faster than controls. What starts as individual productivity quickly becomes an enterprise-wide risk surface.

3. Why Do 63% of Enterprises Still Lack a Shadow AI Policy?

63% of enterprises lack a shadow AI policy because AI adoption is happening faster than governance can keep up. Teams start using tools like Perplexity and Gemini for real work before IT and security teams can set rules.

This means enterprises cannot answer basic questions like: Which AI tools are being used? What data is being shared? Who approved their usage? These are governance gaps, not technology gaps.

A. AI Adoption Is Happening Faster Than Governance Can Catch Up

AI adoption is accelerating because employees can start using tools instantly without approvals. Governance processes, however, require time to define policies, controls, and ownership.

- Instant Access To AI Tools: Employees can start using ChatGPT or Claude without IT involvement.

- No Onboarding Or Approval Workflow: Unlike SaaS tools, AI usage does not go through procurement or security reviews.

- Usage Starts Before Policies Exist: Teams begin using AI for coding, analysis, and content before governance is defined.

This speed mismatch creates a gap where AI usage expands without controls, making governance reactive instead of proactive.

B. No Clear Ownership Between IT, Security, and Compliance Teams

Shadow AI persists because no single team owns it. IT manages tools, security manages risk, and compliance manages policies, but AI usage cuts across all three.

- IT Sees AI As Just Another Tool: Teams focus on access reviews and provisioning, not how data is used inside ChatGPT.

- Security Focuses On Traditional Threats: Controls are built for endpoints and SaaS, not prompt-level data exposure.

- Compliance Lacks Visibility Into Usage: Policies exist, but there is no data showing how AI tools are actually used.

This lack of ownership creates gaps in accountability.

- No Single Team Defines AI Policies: Governance is fragmented across departments.

- No Unified Monitoring Framework: AI usage is not tracked like SaaS applications.

- Delayed Policy Enforcement: Controls are implemented only after shadow AI risks are identified.

According to Earley Information Science, a large percentage of AI initiatives fail to scale due to unclear ownership and governance structures.

Without clear ownership, shadow AI continues to grow unchecked, with no team responsible for controlling or monitoring its impact.

C. Shadow AI Feels Invisible Compared to Traditional SaaS Tools

Shadow AI is harder to detect because it does not always require new applications or installations. It happens inside tools employees already use, making it less visible than traditional SaaS adoption.

- No New App Installation Required: Employees access ChatGPT or Claude directly through browsers.

- No Procurement Or Expense Trail: Unlike SaaS tools, AI usage may not appear in invoices or vendor lists.

- Activity Happens Inside Prompts: Sensitive data is shared within prompts, not stored as files or records.

This makes shadow AI difficult to track using traditional SaaS discovery methods. Many enterprises also lack an established method for detecting it at all.

- No Standard Logging Mechanism: Enterprises often lack visibility into prompt inputs and outputs.

- Usage Blends Into Daily Workflows: AI interactions look like normal browsing or coding activity.

- Difficult To Detect With Existing Tools: Traditional SaaS monitoring tools do not capture AI usage patterns.

Because shadow AI operates without clear signals, it remains invisible until a data exposure or compliance issue surfaces.
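One way to make browser-based AI usage visible is to match web proxy or browser log records against a watchlist of AI tool domains. The sketch below is illustrative only: the watchlist contents, the record shape (`user` and `url` keys), and the function names are assumptions, not a standard log format or any vendor's method.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed watchlist: domains an organization chooses to treat as AI tools.
AI_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "perplexity.ai": "Perplexity",
}


def match_ai_tool(url):
    """Return the AI tool name for a URL, or None if it is not on the watchlist."""
    host = (urlparse(url).hostname or "").lower()
    for domain, tool in AI_DOMAINS.items():
        # Match the domain itself or any subdomain, but not lookalike hosts.
        if host == domain or host.endswith("." + domain):
            return tool
    return None


def count_ai_usage(log_records):
    """Count visits per (user, tool) from records shaped like {"user": ..., "url": ...}."""
    usage = Counter()
    for record in log_records:
        tool = match_ai_tool(record["url"])
        if tool is not None:
            usage[(record["user"], tool)] += 1
    return usage
```

Domain matching only shows that a tool was visited. It says nothing about what was typed into a prompt, which is why prompt-level inspection is treated as a separate control.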

4. How Can Enterprises Close the Shadow AI Governance Gap?

Enterprises close the shadow AI governance gap by tracking AI usage, controlling what data is shared, and enforcing policies across all teams. Without these controls, AI usage remains invisible and unmanaged.

- Discover And Monitor AI Tool Usage: Identify who is using tools like ChatGPT and Claude across the organization.

- Define Clear Policies For Data Sharing: Specify what data can and cannot be entered into AI prompts.

- Implement Role-Based Access Controls: Restrict who can use AI tools and what systems they can access.

- Enable Logging And Audit Trails For AI Activity: Track prompts, outputs, and usage patterns for compliance and security reviews.

- Align AI Governance With Existing Security Frameworks: Integrate AI controls with identity, compliance, and SaaS governance tools.
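The policy, access-control, and audit-trail steps above can be expressed as a small policy-as-code check. This is a minimal sketch under stated assumptions: the role names, tool names, data classes, and the `evaluate_request` function are all hypothetical, and a real deployment would load them from an identity provider and a data classification system.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: which roles may use which tools.
ROLE_TOOL_ACCESS = {
    "engineering": {"ChatGPT Enterprise", "Claude"},
    "marketing": {"ChatGPT Enterprise"},
}
# Hypothetical data classes that must never be entered into a prompt.
BLOCKED_DATA_CLASSES = {"customer_pii", "contracts", "credentials"}


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, user, role, tool, data_classes, allowed, reason):
        # Every decision is logged, including the allowed ones.
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "tool": tool,
            "data_classes": sorted(data_classes),
            "allowed": allowed,
            "reason": reason,
        })


def evaluate_request(user, role, tool, data_classes, audit_log):
    """Apply role-based access first, then the data-sharing policy."""
    data_classes = set(data_classes)
    if tool not in ROLE_TOOL_ACCESS.get(role, set()):
        allowed, reason = False, "tool not approved for role"
    elif data_classes & BLOCKED_DATA_CLASSES:
        allowed, reason = False, "blocked data class in prompt"
    else:
        allowed, reason = True, "ok"
    audit_log.record(user, role, tool, data_classes, allowed, reason)
    return allowed
```

Logging denied and allowed requests alike is what turns the policy into an audit trail rather than just a gate.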

5. How Can Enterprises Close the Shadow AI Governance Gap with CloudEagle.ai?

The shadow AI governance gap exists when AI adoption grows faster than visibility, control, and policy enforcement.

CloudEagle.ai closes this gap by connecting discovery, usage, risk, and enforcement into a single control plane, bringing structure and accountability to enterprise AI adoption without slowing teams down.

A. Detecting Shadow AI Across Browser, SSO, and Spend Signals

CloudEagle.ai closes the visibility gap by continuously detecting all AI tools in use, including shadow AI adopted outside IT workflows.

Current Process

Teams rely on fragmented logs from CrowdStrike, Zscaler, SSO systems, and expense reports that are not connected.

Pain Points

Organizations cannot identify which AI tools are in use or how shadow AI spreads. Unapproved tools and duplicate copilots create security, compliance, and procurement blind spots.

How We Do It

CloudEagle correlates browser activity, identity data from Okta, firewall logs, and financial transactions with its SaaSMap AI inventory.
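As a generic illustration of what correlating signals means (not a description of CloudEagle's actual implementation), the sketch below merges per-source sightings into one view per tool and flags anything missing from a sanctioned inventory. The source names, the `(user, tool)` tuple shape, and `find_shadow_ai` are assumptions made for the example.

```python
def find_shadow_ai(signals_by_source, sanctioned_tools):
    """Merge sightings from several sources and return only unsanctioned tools.

    signals_by_source maps a source name (for example "browser", "sso",
    "spend") to a list of (user, tool) sightings.
    """
    sightings = {}
    for source, pairs in signals_by_source.items():
        for user, tool in pairs:
            entry = sightings.setdefault(tool, {"users": set(), "sources": set()})
            entry["users"].add(user)
            entry["sources"].add(source)
    # Keep only the tools that are not in the approved inventory.
    return {
        tool: info
        for tool, info in sightings.items()
        if tool not in sanctioned_tools
    }
```

A tool that appears in browser activity but in neither SSO nor spend data is a typical shadow AI pattern: it is in use, but no identity or procurement process has seen it.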

Why We Are Better

Enterprises gain complete, real-time visibility into all AI tools, closing the first and most critical governance gap.

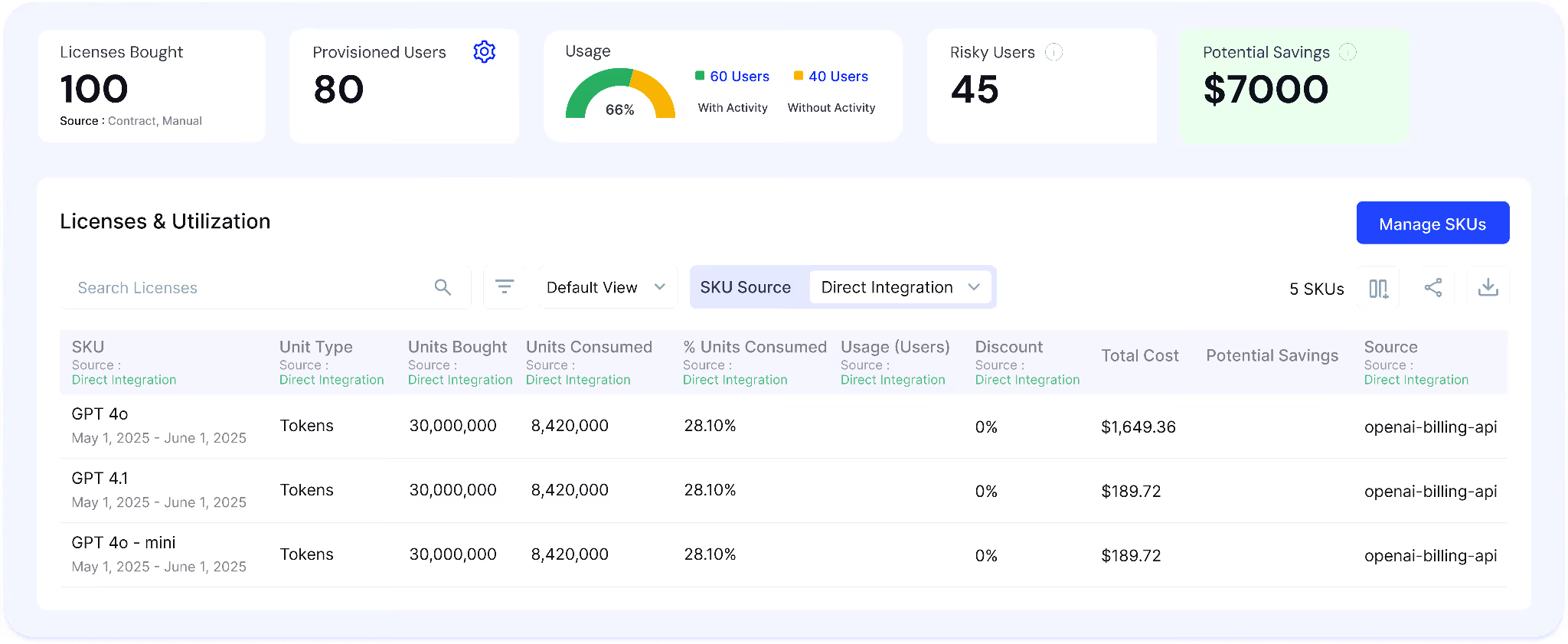

B. AI Usage and Spend Monitoring to Align Adoption with Value

CloudEagle.ai closes the usage gap by providing continuous visibility into how AI tools are used and what they cost.

Current Process

Usage data is scattered across SSO logs, browser activity, app dashboards, and finance systems, with spend visible only after invoices.

Pain Points

Teams cannot determine whether AI tools like Copilot deliver value or should be expanded. Usage-based billing becomes unpredictable, and duplicate tools increase spend.

How We Do It

CloudEagle aggregates usage and spend data across identity systems, browser signals, SaaS integrations, and finance platforms, mapping adoption by user, team, and feature. This extends SaaS governance into AI by correlating usage, value, and cost in one view.
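To make the idea of correlating usage with cost concrete, here is a hedged sketch that joins usage events with monthly spend and derives cost per active user. The event shape `(user, team, tool)`, the spend mapping, and the metric itself are illustrative assumptions, not the product's data model.

```python
from collections import defaultdict


def summarize_tool_value(usage_events, monthly_spend):
    """Join usage with spend per tool.

    usage_events: iterable of (user, team, tool) tuples.
    monthly_spend: mapping of tool name to spend for the same period.
    """
    active_users = defaultdict(set)
    teams = defaultdict(set)
    for user, team, tool in usage_events:
        active_users[tool].add(user)
        teams[tool].add(team)

    summary = {}
    for tool, spend in monthly_spend.items():
        users = len(active_users[tool])
        summary[tool] = {
            "active_users": users,
            "teams": sorted(teams[tool]),
            "spend": spend,
            # None marks paid tools with no observed usage in the period.
            "cost_per_active_user": spend / users if users else None,
        }
    return summary
```

Tools with spend but no active users are candidates for consolidation, while a low cost per active user suggests a rollout worth expanding.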

Why We Are Better

Organizations gain a clear understanding of AI adoption and spend, enabling smarter rollout, consolidation, and budgeting decisions.

C. Gen AI Risk Scores to Close the Risk Prioritization Gap

CloudEagle.ai closes the risk gap by assigning Gen AI risk scores to every AI tool based on exposure, usage, and security posture.

Current Process

Security teams evaluate AI tools manually using vendor documentation or ad hoc reviews, often after adoption.

Pain Points

Teams cannot identify which tools process sensitive data or introduce compliance risks. Security efforts are spread across both low-risk and high-risk tools.

How We Do It

CloudEagle applies AI risk scoring using signals from browser activity, identity systems, and integrations like Netskope, evaluating data exposure, training behavior, and vendor posture.
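A risk score of this kind is often a weighted combination of normalized signals. The sketch below shows that general pattern only. The signal names, the weights, and the 0 to 100 scale are assumptions chosen for illustration and do not reflect how CloudEagle computes its scores.

```python
# Hypothetical weights; a real program would tune these to its own risk model.
WEIGHTS = {
    "data_exposure": 0.5,       # how sensitive the data seen in usage is
    "trains_on_inputs": 0.3,    # whether the vendor may train on prompts
    "vendor_posture_gap": 0.2,  # missing certifications or controls
}


def risk_score(signals):
    """Weighted sum of signals, each clamped to [0, 1], scaled to 0-100."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        value = min(1.0, max(0.0, float(signals.get(name, 0.0))))
        total += weight * value
    return round(100 * total)


def rank_tools(tool_signals):
    """Return tool names ordered from highest to lowest risk score."""
    return sorted(
        tool_signals,
        key=lambda tool: risk_score(tool_signals[tool]),
        reverse=True,
    )
```

Ranking by score lets a security team review the highest-risk tools first instead of spreading effort evenly.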

Why We Are Better

Security teams prioritize high-impact risks and focus on AI tools that directly affect compliance and data security.

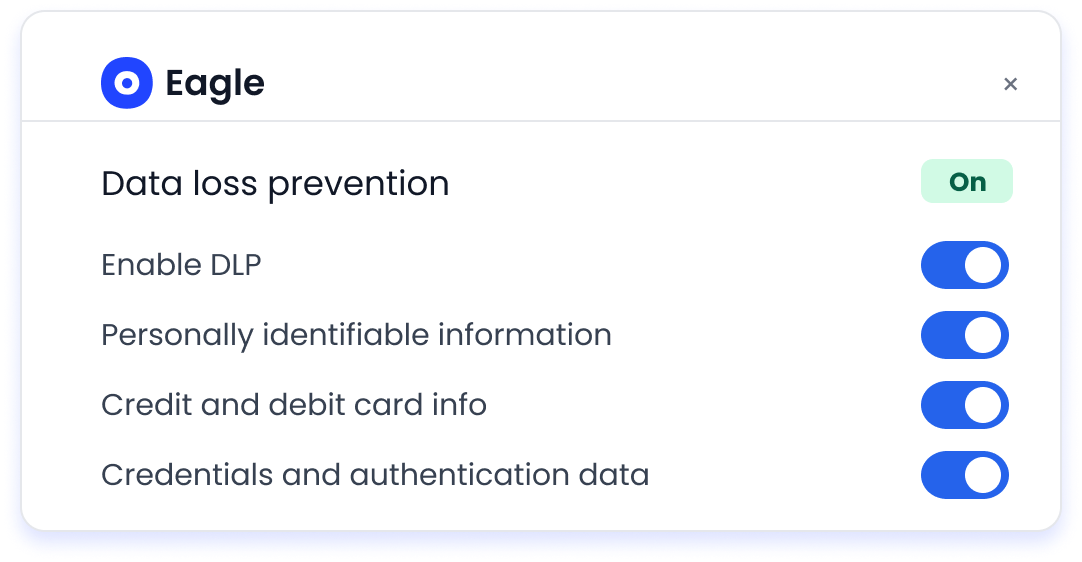

D. DLP to Close the Data Exposure Gap at the Prompt Level

CloudEagle.ai closes the data security gap by monitoring and controlling what is shared with AI tools in real time.

Current Process

Employees input sensitive data into tools like ChatGPT Enterprise, Microsoft Copilot, and Google Gemini through browsers. Traditional data loss prevention and CASB tools cannot inspect prompt-level interactions.

Pain Points

Sensitive data such as PII, financial records, and proprietary information can be exposed without detection. Security teams lack visibility into what data AI vendors process.

How We Do It

CloudEagle inspects AI interactions in real time, detects sensitive data before transmission, and blocks or flags high-risk inputs across both sanctioned and shadow AI tools.
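Detecting sensitive data before transmission usually starts with pattern checks on the prompt text. The following is a minimal sketch of that idea, assuming only three detectors (email address, US Social Security number format, and card numbers validated with the Luhn checksum). It is not the product's detection engine, and production DLP relies on far broader classifiers.

```python
import re

EMAIL_RE = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
# 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(digits):
    """Return True if a string of digits passes the Luhn checksum."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:  # double every second digit from the right
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def scan_prompt(prompt):
    """Return the list of sensitive data types found in the prompt text."""
    findings = []
    if EMAIL_RE.search(prompt):
        findings.append("email")
    if SSN_RE.search(prompt):
        findings.append("ssn")
    for match in CARD_RE.finditer(prompt):
        digits = re.sub(r"\D", "", match.group())
        if luhn_valid(digits):
            findings.append("card_number")
            break
    return findings


def decide(prompt):
    """Block the prompt if anything sensitive is found, otherwise allow it."""
    findings = scan_prompt(prompt)
    return ("block" if findings else "allow", findings)
```

The Luhn check reduces false positives from long numeric strings such as order IDs, which match the digit pattern but are not card numbers.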

Why We Are Better

Sensitive data is protected at the moment of interaction, reducing exposure and closing a major governance gap.

E. Secure Browser Enforcement with Flash Pages to Close the Policy Gap

CloudEagle.ai closes the enforcement gap by applying AI policies at the moment of user behavior through secure browser controls.

Current Process

Employees access unapproved AI tools directly through browsers, while policies are enforced only after violations are detected.

Pain Points

Shadow AI grows unchecked, and employees are unaware of approved alternatives, leading to policy violations and redundant usage.

How We Do It

CloudEagle deploys a lightweight browser extension that monitors AI access in real time. When a user attempts to access an unapproved AI tool, a flash page intervenes before any data is entered. The flash page:

- Blocks unsafe access

- Redirects users to approved tools

- Enforces policies at the point of behavior

- Educates users on safe AI usage
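The decision behind such an interstitial can be sketched as a simple lookup: allow approved tools, block known but unapproved ones, and point the user to an approved alternative. The host names below are placeholders on `example.com`, and the function name and response shape are hypothetical rather than taken from the extension itself.

```python
# Hypothetical policy data; hosts are placeholders, not real AI services.
KNOWN_AI_HOSTS = {"approved-ai.example.com", "unapproved-ai.example.com"}
APPROVED_AI_HOSTS = {"approved-ai.example.com"}
APPROVED_ALTERNATIVE = "https://approved-ai.example.com"


def flash_page_decision(host):
    """Decide what the browser should do when a user navigates to a host."""
    host = host.lower()
    # Hosts that are not AI tools, and approved AI tools, pass through.
    if host not in KNOWN_AI_HOSTS or host in APPROVED_AI_HOSTS:
        return {"action": "allow"}
    # Known AI tool without approval: block, redirect, and explain why.
    return {
        "action": "block",
        "redirect_to": APPROVED_ALTERNATIVE,
        "message": "This AI tool is not approved. Use the approved alternative.",
    }
```

Because the check runs at navigation time, the user is redirected before any prompt can be typed into the unapproved tool.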

Why We Are Better

AI policies are enforced instantly, ensuring governance happens before risk is introduced.

6. Conclusion

The shadow AI governance gap exists because AI adoption is happening faster than organizations can govern it. Employees are already using tools like ChatGPT and Claude, but most enterprises still lack visibility into what data is being shared.

This gap shows up in specific actions like pasting customer data into prompts, sharing internal code, or using AI-generated outputs without validation. Without AI governance, these activities remain untracked and unmanaged.

This is where CloudEagle becomes critical. It helps organizations discover AI usage across teams, control access, enforce data-sharing policies, and maintain audit-ready visibility into AI interactions.

Closing the shadow AI governance gap is not about slowing down AI adoption. It is about ensuring that as usage grows, visibility, control, and accountability grow with it.

7. FAQs

What are the 5 pillars of AI governance?

The five common pillars are visibility, policy enforcement, access control, risk monitoring, and auditability. These ensure organizations can track AI usage, control data sharing, and prove compliance with evidence.

What is the 30% rule in AI?

The “30% rule” often refers to the idea that a significant portion of AI-generated output requires human validation. Teams should expect to review and correct AI outputs before using them in production or decision-making.

What is the difference between Gen AI and shadow AI?

Generative AI refers to tools like ChatGPT or Claude that create content, code, or insights. Shadow AI refers to the unapproved or unmonitored use of these tools within an organization.

Is ChatGPT shadow AI?

ChatGPT itself is not shadow AI. It becomes shadow AI when employees use it without approval, governance, or visibility, especially when sharing sensitive data.
