From shadow AI discovery to real-time usage control, ensure every AI tool in use is visible, approved, and aligned with your security and compliance policies.

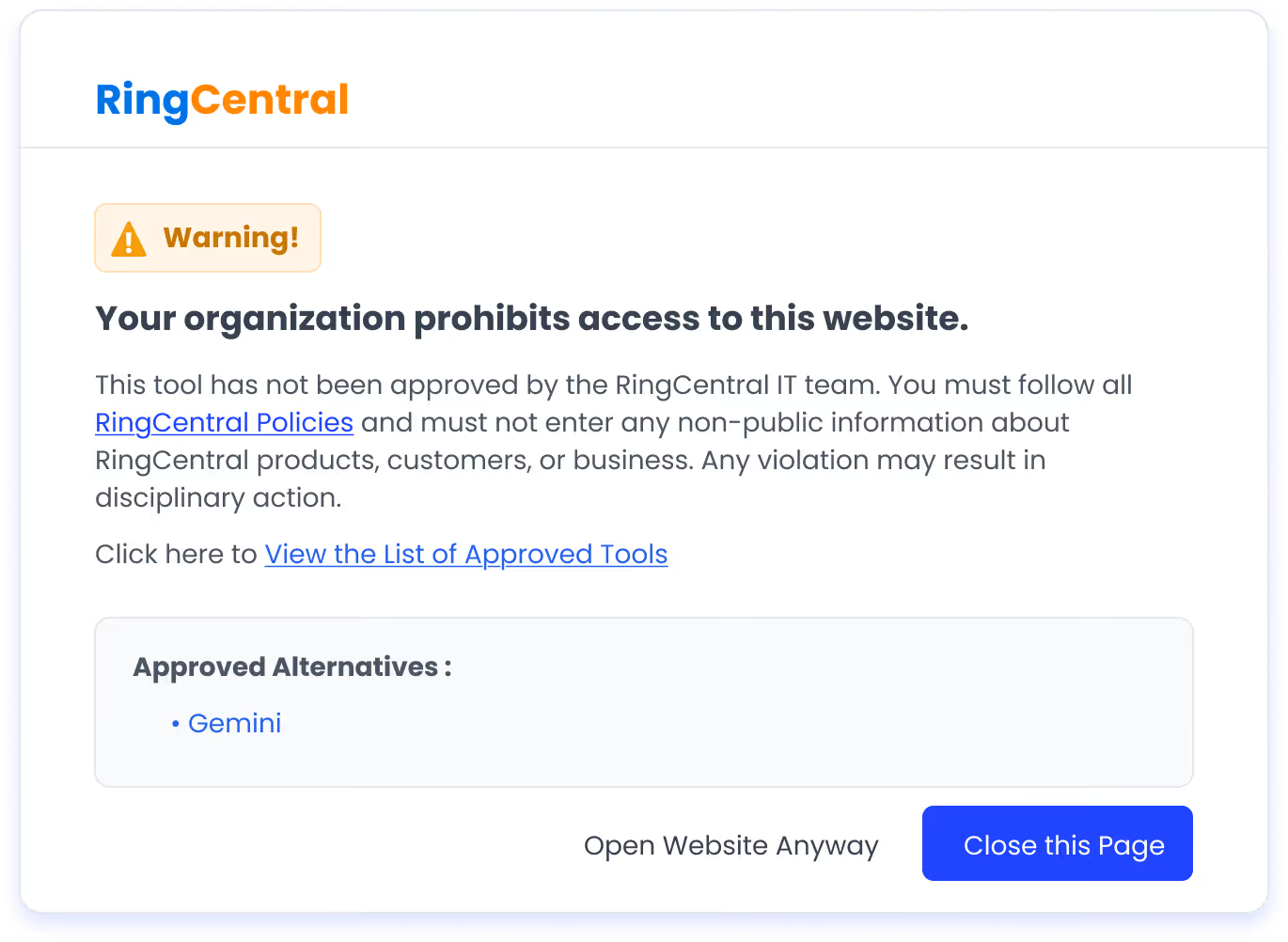

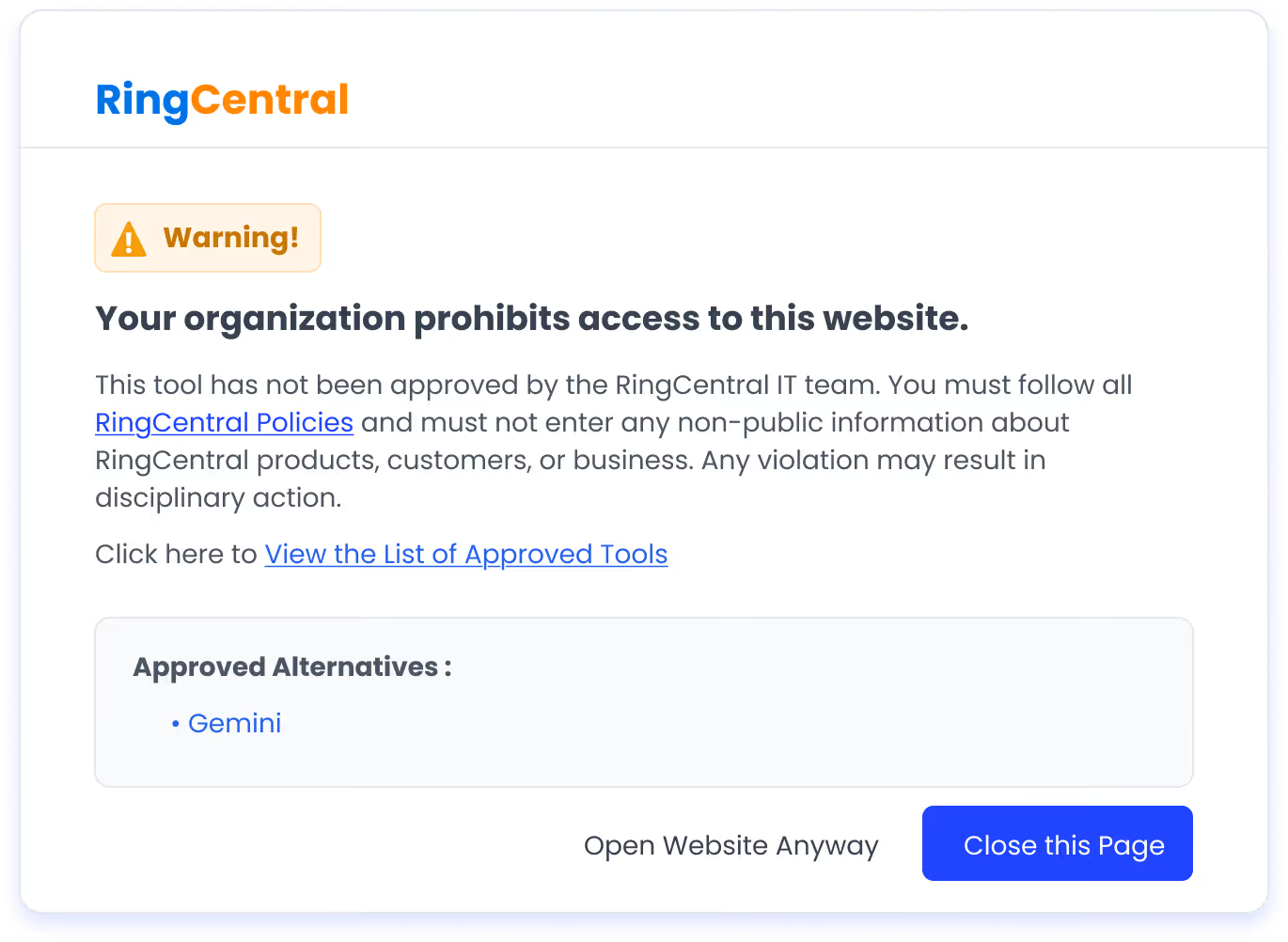

Imagine: Someone on your team just started using a new AI tool. It wasn't approved, nobody reviewed it, and it has access to sensitive company data. Meanwhile, AI features are quietly activating inside tools you already pay for, and nobody knows they're on.

AI governance ensures AI tools are used safely, responsibly, and in line with security, compliance, and risk policies.

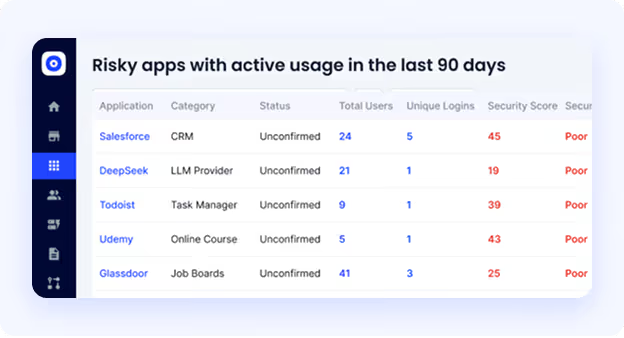

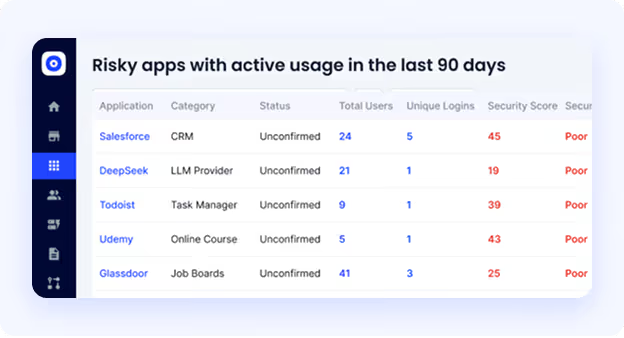

Employees adopt AI tools faster than IT can review them, creating data, identity, and compliance risks.

AI introduces new risks around data usage, model behavior, and identity misuse that require deeper controls.

AI usage control governs how AI tools are accessed, what data they process, and who can use them.

Over-permissioned users and unmanaged identities can expose sensitive data through AI tools.

Yes. Governance enables controlled adoption instead of blocking AI outright.

It provides visibility, policy enforcement, and audit-ready evidence as regulations evolve.

AI features inside approved tools may process sensitive data without explicit visibility or approval.

It unifies AI discovery, usage control, identity risk, and governance into one operational platform.

As soon as AI tools appear in the environment. Governance is most effective when it starts early.