HIPAA Compliance Checklist for 2025

Over 70% of employees now use AI tools without IT approval.

In M&A, that creates a blind spot most deal teams still miss.

During diligence, you review what’s in the data room. But employees are already using tools like ChatGPT or Copilot to process real business data outside of it. None of it is documented, governed, or visible.

That means one critical question often goes unasked:

What AI tools is the target running that you cannot see?

If there’s no clear answer, you are inheriting shadow AI risk on day one.

This blog breaks down where that risk shows up during M&A, what regulators and insurers now expect from AI governance, and a practical checklist to help you identify and control AI exposure before you sign.

TL;DR

- 86% of enterprises now use GenAI across M&A workflows, but governance frameworks are not keeping pace with adoption

- AI in mergers and acquisitions creates three distinct risk layers: what the acquirer brings, what the target brings, and what only surfaces post-close

- AI due diligence in M&A must cover model inventories, training data ownership, regulatory exposure, and shadow AI, not just financials

- Building an AI governance framework for the combined entity needs to start before Day 1, not after integration begins

- CloudEagle.ai helps acquirers discover the full AI and SaaS footprint of a target before the deal closes

1. AI in M&A Has Moved From Experiment to Default. Governance Has Not Kept Up

Here is something that should make every deal team uncomfortable.

86% of enterprises now use GenAI across M&A workflows, per Deloitte's 2025 GenAI in M&A Study. 65% of them started within the past year. McKinsey found that GenAI cuts deal timelines by 10 to 30% and reduces costs by roughly 20%.

Everyone is moving fast. Almost nobody is asking what they are picking up along the way.

86% of Enterprises Use GenAI in M&A Workflows. What Does That Actually Mean for Risk?

GenAI is touching every stage of the deal now:

- 40% of adopters use it for M&A strategy and market assessment

- 35% apply it to target screening and due diligence

- 32% use it in post-deal integration

That last number is the one worth sitting with. Post-deal integration is where AI governance gaps actually become expensive. And at 32% adoption with almost no structured governance behind it, most organizations are inheriting risk they have not even named yet.

Why AI Governance During M&A Gets Deprioritized and What It Costs Post-Close

Deal teams move fast. Governance teams move more slowly. That gap is where the problems pile up.

Here is why AI governance during M&A keeps getting skipped:

- It is not on the standard due diligence checklist

- Legal focuses on IP and contracts, not AI model inventories

- IT gets brought in too late to do a proper audit

- Nobody owns the question of what happens to the target's AI tools post-close

And the cost?

Regulatory fines for undisclosed AI processing. Integration failures from incompatible AI vendors. Shadow AI tools at the target that were never disclosed and are now sitting inside your perimeter.

Most acquirers discover AI tool sprawl and shadow AI only after close, when ungoverned access, orphaned identities, and undisclosed AI vendor contracts have already become their liability.

📖 Worth a Read: Shadow AI is already inside most enterprise environments. Here is how to find every AI tool your organization is running, including the ones IT never approved. 👉 How to Discover Claude Licenses in Your Organization

2. The Three AI Risk Layers Every Acquirer Must Assess

AI in mergers and acquisitions does not create one risk. It creates three: what the acquirer brings, what the target brings, and what only surfaces post-close. Most deal teams only think about the first one.

Establishing an AI integration post-merger framework before Day 1 is not optional. It is the difference between a clean integration and a six-month cleanup project.

3. AI Due Diligence: What to Audit Before You Sign in Any M&A Deal

AI due diligence in M&A is still not standard practice at most firms. That needs to change. Here is what every deal team should be reviewing before signing.

AI Tool Inventory: Mapping Every Model, API, and AI-Enabled SaaS in the Target's Stack

Ask the target for a complete inventory:

- Every AI tool in use, including free or freemium products

- All API connections to AI vendors, including OpenAI, Anthropic, Google, and Cohere

- Any internally developed models or fine-tuned versions of foundation models

- AI features embedded in standard SaaS tools like Salesforce, Microsoft 365, or Slack

Most targets will not have this list ready. That tells you something important about their governance maturity.
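If the target does hand over a list, it helps to check it against the four categories above rather than take it at face value. Here is a minimal sketch of that check. The `AIAsset` fields, the category names, and the sample entries are all assumptions made up for illustration, not a standard schema.

```python
from dataclasses import dataclass

# Hypothetical category names mirroring the four inventory items above.
CATEGORIES = {
    "standalone_tool",
    "vendor_api",
    "internal_model",
    "embedded_saas_feature",
}


@dataclass
class AIAsset:
    name: str
    category: str                   # must be one of CATEGORIES
    vendor: str                     # use "internal" for in-house models
    owner_team: str = ""            # blank means nobody at the target claims it
    paid: bool = True               # False for free or freemium usage
    contract_on_file: bool = False

    def __post_init__(self):
        if self.category not in CATEGORIES:
            raise ValueError(f"unknown category: {self.category}")


def inventory_gaps(assets):
    """Return (categories with no entries, names of assets with no owner)."""
    seen = {asset.category for asset in assets}
    missing = sorted(CATEGORIES - seen)
    unowned = [asset.name for asset in assets if not asset.owner_team]
    return missing, unowned


# Made-up sample submission from a target.
inventory = [
    AIAsset("ChatGPT", "standalone_tool", "OpenAI", owner_team="Sales", paid=False),
    AIAsset("Claude API", "vendor_api", "Anthropic"),
]
missing, unowned = inventory_gaps(inventory)
print("Categories with no entries:", missing)
print("Assets with no owner:", unowned)
```

An empty category is not proof that nothing exists there. It is a prompt for a follow-up question in the next diligence call.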

Training Data Provenance and IP Ownership Questions You Must Ask

If the target has built or fine-tuned AI models, you need answers to:

- What data was used to train those models?

- Does the company have the right to use that data for AI training?

- Does any training data include customer PII, third-party content, or proprietary information?

- Who owns the model weights and outputs?

Training data IP issues do not get resolved at close. They follow the combined entity.

Regulatory Compliance Audit: EU AI Act, GDPR, and CFIUS Implications for AI Assets

Generative AI M&A risk now includes a growing regulatory layer spanning the EU AI Act, GDPR, and CFIUS review.

Regulatory exposure from an undisclosed AI tool is not theoretical. It is a post-close liability the acquirer inherits.

Representation and Warranty Gaps Specific to AI in Mergers and Acquisitions

Standard rep and warranty insurance does not cover AI-specific risks by default. Push for explicit representations covering:

- Accuracy of the AI tool inventory provided in diligence

- Absence of undisclosed AI regulatory violations

- Ownership of training data and model outputs

- No pending AI-related litigation or regulatory inquiries

RWI underwriters are increasingly asking for AI governance documentation as a condition of coverage. A target with no AI governance records is a red flag for everyone in the room.

4. Building an AI Governance Framework for the Combined Entity

Once diligence is done and the deal is moving toward close, the real governance work begins. AI governance during M&A does not end at signing. That is honestly where it starts.

Aligning AI Policies, Acceptable Use Standards, and Model Governance Across Both Orgs

The two organizations almost certainly have different AI policies. Or one of them has no policy at all.

Before Day 1 integration:

- Map both organizations' AI acceptable use policies against each other

- Identify conflicts, gaps, and areas where one policy is significantly weaker

- Draft a unified AI policy for the combined entity that covers all functions

- Define who is accountable for AI governance at the combined org level
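The policy mapping steps above can be sketched as a simple comparison. This assumes each policy has already been reduced to a topic-to-stance dictionary, which is the hard part in practice. The topic names and stances below are hypothetical.

```python
def compare_policies(acquirer, target):
    """Compare two acceptable-use policies given as {topic: stance} dicts."""
    report = {"gaps": [], "conflicts": [], "aligned": []}
    for topic in sorted(set(acquirer) | set(target)):
        a_stance = acquirer.get(topic)
        t_stance = target.get(topic)
        if a_stance is None or t_stance is None:
            # One side has no rule at all for this topic.
            missing_in = "acquirer" if a_stance is None else "target"
            report["gaps"].append((topic, missing_in))
        elif a_stance != t_stance:
            report["conflicts"].append((topic, a_stance, t_stance))
        else:
            report["aligned"].append(topic)
    return report


# Hypothetical policies for illustration only.
acquirer_policy = {
    "customer_pii_in_prompts": "prohibited",
    "ai_coding_assistants": "approved_list_only",
    "vendor_review": "required",
}
target_policy = {
    "customer_pii_in_prompts": "allowed_with_redaction",
    "ai_coding_assistants": "approved_list_only",
}

report = compare_policies(acquirer_policy, target_policy)
print(report)
```

Gaps and conflicts become the drafting agenda for the unified policy. Aligned topics can usually be carried over as they are.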

Consolidating AI Tool Stacks and Eliminating Duplicate or High-Risk AI Vendors Post-Merge

Post-merger is the right moment to rationalize the AI stack. Steps to take in the first 90 days post-close:

- Inventory all AI tools from both organizations in one place

- Identify duplicates where both orgs are paying for the same capability

- Flag high-risk vendors with unfavorable data terms, weak security posture, or regulatory exposure

- Build a consolidated approved AI vendor list and migration timeline

This is also where SaaS spend rationalization matters. Duplicate AI tools mean duplicate contracts, duplicate renewal dates, and duplicate risk.
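Spotting duplicates is mostly a grouping exercise once both inventories sit in one place. Here is a rough sketch, assuming every tool has been tagged with a `capability` label. That label, and the tool names, are invented for this example.

```python
from collections import defaultdict


def find_duplicates(acquirer_tools, target_tools):
    """Return capabilities where both organizations run at least one tool."""
    by_capability = defaultdict(lambda: {"acquirer": [], "target": []})
    for org, tools in (("acquirer", acquirer_tools), ("target", target_tools)):
        for tool in tools:
            by_capability[tool["capability"]][org].append(tool["name"])
    # Keep only capabilities that appear on both sides of the deal.
    return {
        capability: orgs
        for capability, orgs in by_capability.items()
        if orgs["acquirer"] and orgs["target"]
    }


# Placeholder tool names for illustration.
acquirer_tools = [
    {"name": "Tool A", "capability": "code_assistant"},
    {"name": "Tool B", "capability": "meeting_notes"},
]
target_tools = [
    {"name": "Tool C", "capability": "code_assistant"},
]

duplicates = find_duplicates(acquirer_tools, target_tools)
print(duplicates)
```

Each capability in the output is a candidate for consolidation. Which vendor survives still depends on data terms, security posture, and contract timing.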

Establishing a Cross-Functional AI Oversight Body Before Day 1 Integration

AI governance cannot live in IT alone. The combined entity needs a cross-functional AI oversight function:

- IT and Security for tool governance and access controls

- Legal and Compliance for regulatory alignment

- Finance for spend visibility and vendor contracts

- Business leads for acceptable use and function-specific policy

Define this body before Day 1. Do not wait for an incident to create accountability.

Want to hear how real enterprises are rethinking AI governance from the ground up? This is worth 20 minutes of your time.

🎙️ Podcast: How AI-Driven Innovation Meets Real-World Governance: A Blueprint for CIOs and CTOs. Real talk on what enterprise AI governance looks like when it has to survive a merger. 👉 Listen now

5. Shadow AI in M&A: The Risk Nobody Puts in the Data Room

This is the part of AI in M&A that most deal teams still miss. It is also where the biggest post-close surprises come from.

How Shadow AI Actually Shows Up in a Deal

Employees at the target are already using AI tools. Most were never approved. None of them appear in diligence unless you actively look.

Common scenarios:

- Sales teams using ChatGPT to draft customer responses

- Engineers running AI coding tools on internal repositories

- HR adopting AI screening tools without procurement review

- Finance teams connecting AI analytics tools to production data

67% of M&A respondents cite data security as a top GenAI concern.

Shadow AI is exactly where that risk hides.

The problem is not just usage.

It is unseen, uncontrolled AI interacting with sensitive data before you even close the deal.
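One way to actively look is to compare access logs against what the target disclosed. The sketch below assumes you can export access events as user and domain pairs, for example from SSO or proxy logs. The tiny domain watchlist and the sample events are illustrative assumptions, and a real watchlist would be far longer.

```python
# Illustrative watchlist mapping domains to AI tool names.
AI_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}


def find_shadow_ai(access_events, disclosed_tools):
    """Return {tool: sorted users} for AI tools seen in logs but never disclosed."""
    disclosed = {tool.lower() for tool in disclosed_tools}
    shadow = {}
    for event in access_events:
        tool = AI_DOMAINS.get(event["domain"])
        if tool and tool.lower() not in disclosed:
            shadow.setdefault(tool, set()).add(event["user"])
    return {tool: sorted(users) for tool, users in shadow.items()}


# Made-up sample events.
events = [
    {"user": "ana@target.example", "domain": "chatgpt.com"},
    {"user": "raj@target.example", "domain": "chatgpt.com"},
    {"user": "ana@target.example", "domain": "copilot.microsoft.com"},
    {"user": "lee@target.example", "domain": "intranet.target.example"},
]

shadow = find_shadow_ai(events, disclosed_tools=["Copilot"])
print(shadow)
```

Domain matching only catches tools that show up in the logs you have. Personal accounts on unmanaged devices will not appear, so treat the result as a floor, not a complete picture.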

How CloudEagle.ai Helps Acquirers Discover the Full AI and SaaS Footprint Before Close

CloudEagle.ai is an AI-powered SaaS Management, Security, and Identity Governance platform. It surfaces every AI tool and SaaS application, including shadow AI that IT never sanctioned, and gives acquirers a unified command center for discovery, governance, and risk assessment before Day 1.

For acquirers doing AI due diligence in M&A, CloudEagle provides:

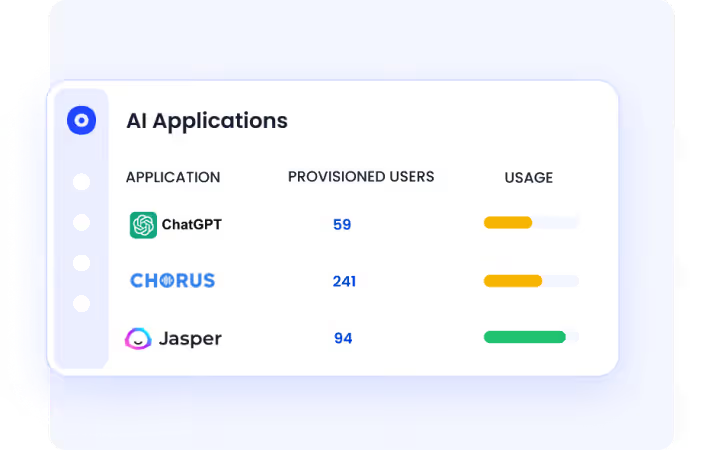

1. Discover Shadow AI Across the Target Environment

CloudEagle surfaces every AI tool in use, including unsanctioned ones, by correlating signals across SSO, browser activity, security tools, and finance systems.

You don’t just get a list of apps.

You see who is using what AI tool, in which team, and how frequently.

Why it matters:

You cannot diligence AI risk if you cannot see it. This gives you a complete AI footprint before close.

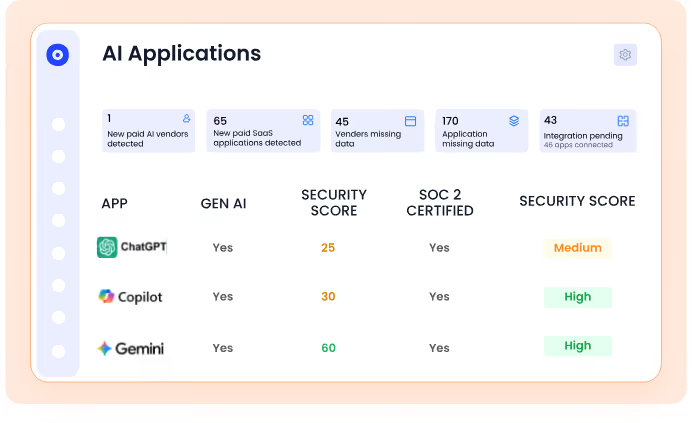

2. Assign Risk Scores to Every AI Tool in Use

Not all AI tools carry the same risk.

CloudEagle applies AI risk scoring based on security posture, data exposure potential, and usage patterns, helping you identify:

- High-risk tools touching sensitive data

- Unreviewed tools with growing adoption

- Low-value or redundant AI usage

Why it matters:

You move from raw discovery to risk-prioritized diligence, focusing only on what can impact compliance, data security, and valuation.
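To make risk-prioritized diligence concrete, here is a generic weighted-score sketch. It is not CloudEagle's scoring model. The weights, the 0.0 to 1.0 factor ratings, and the tier thresholds are all assumptions chosen for illustration.

```python
# Hypothetical weights; each factor is rated 0.0 (safe) to 1.0 (risky).
WEIGHTS = {"security_posture": 0.4, "data_exposure": 0.4, "usage": 0.2}


def risk_score(tool):
    """Combine the three factor ratings into a 0-100 score."""
    raw = sum(weight * tool[factor] for factor, weight in WEIGHTS.items())
    return round(raw * 100)


def risk_tier(score):
    """Bucket a score into a tier using assumed thresholds."""
    if score >= 70:
        return "high"
    if score >= 40:
        return "medium"
    return "low"


# Made-up ratings for two unnamed tools.
tools = [
    {"name": "Tool A", "security_posture": 0.9, "data_exposure": 0.8, "usage": 0.5},
    {"name": "Tool B", "security_posture": 0.2, "data_exposure": 0.1, "usage": 0.3},
]

# Highest-risk tools first, so diligence time goes where it matters.
ranked = sorted(tools, key=risk_score, reverse=True)
for tool in ranked:
    score = risk_score(tool)
    print(tool["name"], score, risk_tier(score))
```

The exact weights matter less than agreeing on them before the review starts, so that two analysts scoring the same tool land in the same tier.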

3. Control AI Usage in Real Time With Policy Enforcement

Discovery alone is not governance.

CloudEagle enforces AI usage policies at the point of behavior, ensuring employees only use approved tools aligned with your security standards.

- Maintain a centralized list of approved AI tools

- Monitor usage continuously across teams

- Detect when sensitive data is being shared with AI tools

Why it matters:

You are not just observing AI usage. You are actively controlling it before it becomes a compliance issue.

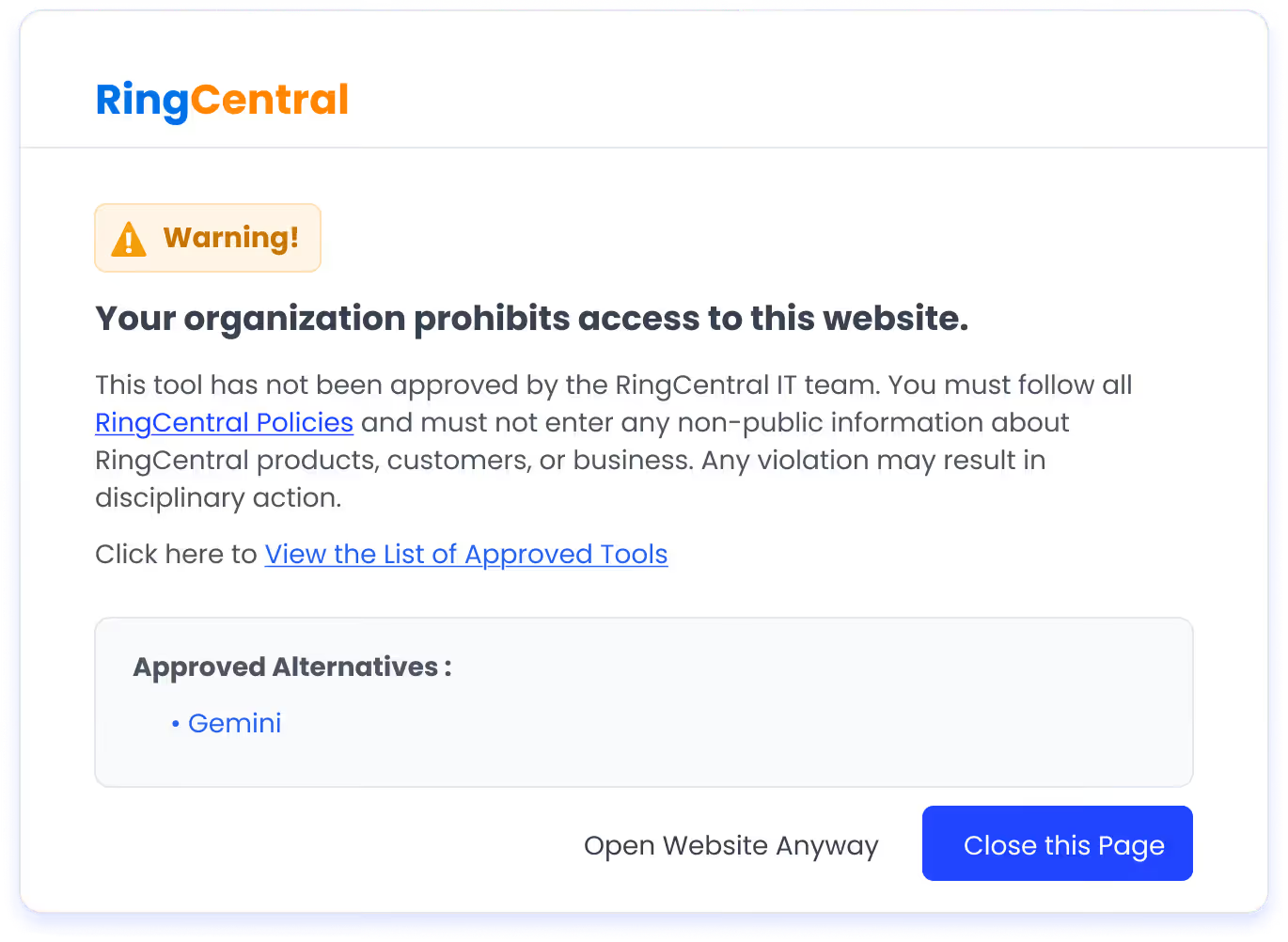

4. Redirect Unsafe Behavior With Real-Time Flash Pages

When an employee tries to access an unapproved AI tool, CloudEagle triggers a real-time flash page.

Instead of blocking productivity, it:

- Educates users on approved AI policies

- Redirects them to sanctioned alternatives

- Creates an audit trail of every intervention

Why it matters:

This is critical during M&A transitions where behavior is already established. You can correct usage instantly without slowing teams down.
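The educate, redirect, and log pattern is easy to picture as a small decision function. This is a generic sketch of the pattern only, not CloudEagle's implementation or API. The approved list, the alternatives mapping, and the function name are hypothetical.

```python
from datetime import datetime, timezone

APPROVED = {"Copilot"}                  # hypothetical approved list
ALTERNATIVES = {"ChatGPT": "Copilot"}   # unapproved tool -> sanctioned alternative
audit_log = []


def handle_access(user, tool, now=None):
    """Decide what to do with an access attempt and record it for audit."""
    now = now or datetime.now(timezone.utc)
    if tool in APPROVED:
        decision = {"action": "allow", "redirect_to": None}
    else:
        # Show the policy page instead of silently blocking, and offer
        # a sanctioned alternative when one is known.
        decision = {
            "action": "show_policy_page",
            "redirect_to": ALTERNATIVES.get(tool),
        }
    audit_log.append({"user": user, "tool": tool, "at": now.isoformat(), **decision})
    return decision


first = handle_access("ana@target.example", "ChatGPT")
second = handle_access("ana@target.example", "Copilot")
print(first)
print(second)
print(len(audit_log), "audit entries")
```

Logging approved access as well as interventions keeps the audit trail complete, which matters when someone later asks who used what during the transition.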

5. Prevent AI Risk From Turning Into Day 1 Liability

Unmanaged AI usage leads to:

- Data leakage through prompts

- Regulatory exposure (GDPR, CCPA)

- Orphaned access and API risks

- Audit failures post-close

CloudEagle continuously monitors AI usage and ensures every interaction is logged, governed, and audit-ready.

Why it matters:

You walk into Day 1 with defensible AI governance, not hidden exposure.

6. AI Governance During M&A Is Now a Board-Level Requirement

This is not just an IT conversation anymore. Regulators, insurers, and boards are all paying attention to AI governance during M&A.

What Regulators, Insurers, and RWI Underwriters Now Expect From AI Governance in Deals

A Practical AI Governance Checklist for M&A Teams in 2026

Pre-diligence:

- Audit your own AI and SaaS stack before engaging with the target

- Define what AI disclosures you will require in the data room

During diligence:

- Request a complete AI tool inventory from the target

- Review training data provenance and IP ownership

- Run regulatory exposure assessment across the EU AI Act, GDPR, and CFIUS

- Identify shadow AI through SSO logs, expense reports, and endpoint data

Pre-close:

- Draft unified AI acceptable use policy for the combined entity

- Define the AI oversight body and assign accountability

- Map duplicate AI vendors and build a rationalization timeline

- Ensure AI-specific reps and warranties are in the deal documents

Post-close, first 90 days:

- Execute AI stack consolidation plan

- Migrate or terminate high-risk or duplicate AI vendors

- Roll out a unified AI policy across both organizations

- Establish continuous AI tool monitoring for the combined entity
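If your deal team tracks diligence in a script or spreadsheet export, the checklist above maps cleanly onto a small data structure. A minimal sketch follows. The short item keys are invented labels for the checklist lines, and the sample completion state is made up.

```python
# Short keys standing in for the checklist items above.
CHECKLIST = {
    "pre_diligence": ["audit_own_stack", "define_required_disclosures"],
    "during_diligence": [
        "request_ai_inventory",
        "review_training_data",
        "assess_regulatory_exposure",
        "identify_shadow_ai",
    ],
    "pre_close": [
        "draft_unified_policy",
        "define_oversight_body",
        "map_duplicate_vendors",
        "add_ai_reps_and_warranties",
    ],
    "post_close_90_days": [
        "execute_consolidation",
        "retire_risky_vendors",
        "roll_out_policy",
        "enable_monitoring",
    ],
}

# Phases that should be finished before the deal closes.
BEFORE_CLOSE = ("pre_diligence", "during_diligence", "pre_close")


def open_items(completed, phases=BEFORE_CLOSE):
    """List (phase, item) pairs that are still outstanding."""
    return [
        (phase, item)
        for phase in phases
        for item in CHECKLIST[phase]
        if item not in completed
    ]


# Made-up state: everything done except two items.
all_before_close = {item for phase in BEFORE_CLOSE for item in CHECKLIST[phase]}
completed = all_before_close - {"identify_shadow_ai", "add_ai_reps_and_warranties"}

outstanding = open_items(completed)
print(outstanding)
```

An empty list from `open_items` is a reasonable gate for moving to close. Anything left on it is a risk you are choosing to carry into Day 1.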

Final Words

Most deal teams review financials, contracts, and operational risks. AI governance during M&A rarely makes that list. It should.

Start with visibility into what AI is running, assess the risks, and establish governance before Day 1. It is far easier than fixing gaps post-close.

CloudEagle.ai gives you full visibility into AI and SaaS usage, helping you uncover shadow AI, assess risks, and stay compliant before Day 1.

Book a demo with CloudEagle.ai and make AI governance a core part of your M&A strategy, not an afterthought.

Frequently Asked Questions

- What is AI governance during M&A?

AI governance during M&A is the process of identifying, auditing, and managing AI-related risks across both the acquirer and target organizations throughout the deal lifecycle.

- What are the biggest AI risks in mergers and acquisitions?

The biggest AI risks in mergers and acquisitions include undisclosed shadow AI usage at the target company, training data IP ownership issues, and regulatory exposure under the EU AI Act and GDPR.

- What should AI due diligence in M&A cover?

A full inventory of AI tools, models, and APIs, plus training data provenance, IP ownership, regulatory compliance (EU AI Act, GDPR), shadow AI detection, and clear AI clauses in deal documents.

- How does shadow AI create risk in M&A deals?

Shadow AI includes unapproved tools that don’t appear in the data room but transfer at close, creating hidden security, compliance, and integration risks.

- When should AI governance be established for the combined entity?

Before Day 1, with unified policies, cross-functional oversight, and a plan to rationalize duplicate or high-risk AI tools.
