HIPAA Compliance Checklist for 2025

Your Berlin office has been using an AI-powered HR screening tool for six months. The vendor assured you it was compliant. Security never catalogued it. IT never ran a risk assessment. Candidates were never informed. That is three separate compliance violations under the EU AI Act, with fines up to €15 million or 3% of global turnover.

The EU AI Act and SaaS governance have collided into a compliance surface most enterprises are not prepared for. Full deployer obligations land on August 2, 2026, and according to a Center for Data Innovation survey from late 2025, fewer than 30% of European SMEs have taken any steps toward compliance.

If your organisation uses AI-powered SaaS, you are already a deployer. Legal obligations are live. Here is what that means, and what you need to do before enforcement begins.

TL;DR

- Most enterprises are already "deployers" under the EU AI Act the moment they use SaaS tools with AI components, even if they did not build the underlying model.

- The August 2, 2026, deadline is the critical enforcement date for high-risk AI obligations and transparency requirements, regardless of proposed extensions.

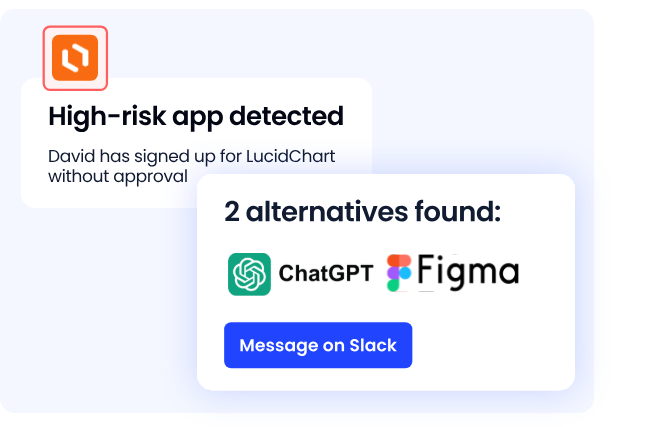

- EU AI Act and SaaS governance intersect most dangerously through shadow AI: employees adopting unsanctioned AI tools that never get classified, documented, or monitored.

- Compliance requires an AI system inventory, risk classification, vendor documentation, and human oversight, none of which are possible without SaaS-level visibility.

- The governance infrastructure you need for the EU AI Act is the same one you need to control sprawl, access risk, and audit readiness across your SaaS stack.

1. What the EU AI Act Actually Says About SaaS

The EU AI Act classifies every organisation that uses AI professionally as an operator, and assigns compliance obligations based on their role in the supply chain.

For most enterprise buyers, that role is "deployer."

A deployer is any organisation that uses an AI system professionally and did not build the underlying model. Subscribe to an AI-powered ATS? Deployer. Running Microsoft Copilot? Deployer. Integrated any LLM API into an internal workflow? Also a deployer.

Using a simple SaaS tool does not exempt you from these responsibilities. The regulation is explicit on this.

The Act structures obligations around four risk tiers: unacceptable (prohibited), high, limited, and minimal. Where your SaaS tools land determines everything: your documentation requirements, your oversight obligations, and your exposure to fines.

The Cloud Security Alliance flagged in March 2026 that enterprises may have deployed high-risk systems without recognising their classification. An AI tool ranking job applicants? High-risk. A chatbot rejecting candidates without human review? Transparency violation. Most SaaS teams have no process to catch either.

Also Read: AI Governance Policies and Controls: A Quick Guide for Enterprise Teams

2. The Enforcement Timeline: What Has Already Kicked In

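Several EU AI Act deadlines have already passed. The ban on prohibited practices and the AI literacy requirement have applied since February 2, 2025, and the obligations for providers of general-purpose AI models since August 2, 2025. If your organisation uses AI-powered SaaS, at least one obligation applies to you right now.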

Most enterprise teams are tracking August 2026 and assuming they have runway. They do not.

⚠️ Do Not Bank on the Extension. The European Commission proposed a "Digital Omnibus" package in late 2025 that could push some Annex III deadlines to December 2027. As of late March 2026, the trilogue has not begun, and the extension has not been enacted. If the Omnibus is not adopted before August 2026, the original timeline applies. Treat August 2, 2026, as fixed.

3. The Shadow AI Problem Is the Compliance Surface Nobody Is Measuring

The EU AI Act requires a documented inventory of every AI system your organisation deploys, including purpose, data, populations affected, and risk classification.

The problem is that most enterprises cannot produce one. Over 60% of AI and SaaS applications operate outside IT visibility, and a Microsoft survey found that more than half of employees who use AI tools at work do so without ever telling IT.

You cannot classify what you cannot see. You cannot retain six months of logs from a free trial that nobody registered for. Shadow AI makes the EU AI Act and SaaS governance structurally impossible at scale, and it is the gap that regulators will find first.
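To make the visibility gap concrete, here is a minimal sketch of shadow AI discovery as a set comparison: tool names observed in usage sources are checked against the sanctioned register. The source names (`sso`, `expenses`), the tool names, and the `find_shadow_tools` helper are all hypothetical, and real discovery would need far more robust name matching than lowercasing.

```python
from typing import Dict, Iterable, Set


def find_shadow_tools(
    observed_sources: Dict[str, Iterable[str]],
    sanctioned: Iterable[str],
) -> Dict[str, Set[str]]:
    """Map each unsanctioned tool to the sources where it was observed."""
    # Normalise names so "HireBot" and "hirebot" count as the same tool.
    sanctioned_norm = {name.strip().lower() for name in sanctioned}
    shadow: Dict[str, Set[str]] = {}
    for source, tools in observed_sources.items():
        for tool in tools:
            key = tool.strip().lower()
            if key not in sanctioned_norm:
                shadow.setdefault(key, set()).add(source)
    return shadow


# Hypothetical data: what SSO logs and expense reports show vs. the register.
observed = {
    "sso": ["Copilot", "HireBot"],
    "expenses": ["hirebot", "TranscribeNow"],
}
register = ["Copilot"]

shadow = find_shadow_tools(observed, register)
for tool, sources in sorted(shadow.items()):
    print(tool, sorted(sources))
```

Anything the comparison returns is a tool that cannot yet be classified, logged, or documented, because it is not in the register at all.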

4. What Deployer Obligations Actually Look Like in Practice

High-risk AI deployers have a specific set of obligations under the regulation. Here is what they require in practice:

- AI system inventory: A living register of every AI tool in use, vendor, purpose, data processed, and populations affected.

- Genuine human oversight: High-risk AI decisions must be reviewable and correctable by a qualified human. A vendor checkbox does not count.

- Log retention, 6 months minimum: Automatically generated system logs must be stored and retrievable for regulatory review.

- Stay within provider instructions: Repurposing a tool beyond its documented scope is a compliance liability.

- Inform affected individuals: Anyone whose employment, credit, or similar decision involved high-risk AI must be told.

- FRIA where required: Public bodies and essential service providers must complete a Fundamental Rights Impact Assessment before first use.

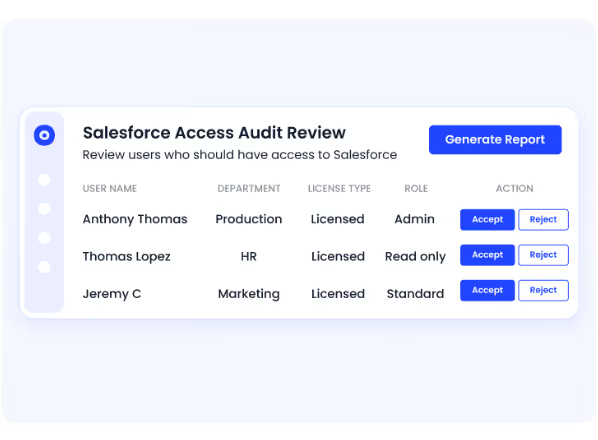

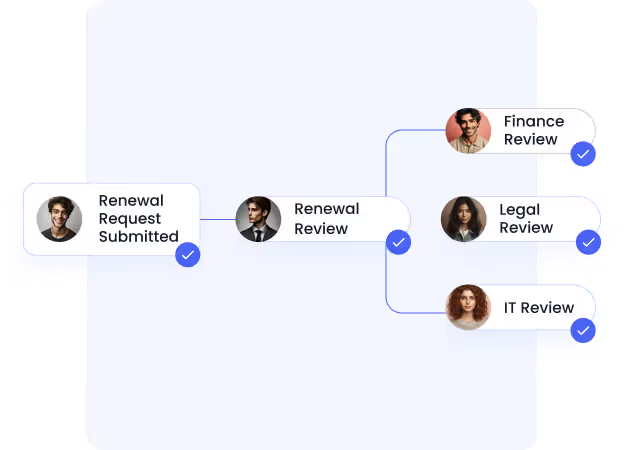

- Vendor compliance verification: Confirm conformity assessment status, EU AI database registration, and technical documentation before signing or renewing.
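The obligations above can be sketched as one register record plus a gap check. This is an illustrative data model, not a structure the regulation prescribes: the field names, the `open_gaps` helper, the example vendor, and the approximation of six months as 183 days are all assumptions.

```python
from dataclasses import dataclass
from typing import List, Optional

SIX_MONTHS_DAYS = 183  # assumption: "six months" approximated as 183 days


@dataclass
class AISystemRecord:
    """One row in a hypothetical AI system register."""
    name: str
    vendor: str
    purpose: str
    data_processed: str
    populations_affected: str
    risk_tier: str                        # "high", "limited" or "minimal"
    human_reviewer: Optional[str] = None  # named person who can override outputs
    log_retention_days: int = 0           # configured retention for system logs
    individuals_informed: bool = False
    vendor_docs_verified: bool = False


def open_gaps(record: AISystemRecord) -> List[str]:
    """List the high-risk deployer obligations this record does not yet meet."""
    if record.risk_tier != "high":
        return []
    gaps = []
    if not record.human_reviewer:
        gaps.append("human oversight")
    if record.log_retention_days < SIX_MONTHS_DAYS:
        gaps.append("log retention")
    if not record.individuals_informed:
        gaps.append("inform affected individuals")
    if not record.vendor_docs_verified:
        gaps.append("vendor compliance verification")
    return gaps


# Hypothetical example: a screening tool with a reviewer but short log retention.
hirebot = AISystemRecord(
    name="HireBot", vendor="ExampleVendor", purpose="rank job applicants",
    data_processed="CVs and application forms",
    populations_affected="job candidates",
    risk_tier="high", human_reviewer="hr-lead@example.com",
    log_retention_days=30,
)
print(open_gaps(hirebot))
```

A check like this only flags missing evidence. Whether the named reviewer provides genuine oversight still needs a human judgement.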

Key Principle: For limited-risk AI (chatbots, generative tools, and AI-generated content), Article 50 transparency obligations apply from August 2, 2026. Any system that interacts directly with people must disclose its AI nature. New deployments after that date must comply immediately.

5. The GDPR Overlap: Why You Need Both Frameworks Talking to Each Other

For any AI system processing personal data, GDPR and the EU AI Act both apply. GDPR governs the data. The EU AI Act governs the system. They do not duplicate; they stack.

A single AI-powered HR tool can trigger both simultaneously. GDPR fines cap at €20 million or 4% of global turnover; EU AI Act penalties add another €15 million or 3%. These are additive risks.
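The arithmetic behind those ceilings can be sketched as follows. Both regulations set the maximum for undertakings at whichever is higher, the fixed amount or the percentage of turnover. For SMEs, the EU AI Act applies whichever is lower. The turnover figures and function names are hypothetical, and the sum is only the combined upper limit. Whether both fines would be imposed in full for the same conduct is a legal question this sketch does not answer.

```python
def gdpr_ceiling(global_turnover_eur: int) -> int:
    """Upper limit for the top GDPR fine tier: EUR 20M or 4%, whichever is higher."""
    return max(20_000_000, global_turnover_eur * 4 // 100)


def ai_act_high_risk_ceiling(global_turnover_eur: int, sme: bool = False) -> int:
    """Upper limit for high-risk violations: EUR 15M or 3% of turnover.

    Whichever is higher applies in general; whichever is lower applies to SMEs.
    """
    fixed_cap = 15_000_000
    turnover_cap = global_turnover_eur * 3 // 100
    return min(fixed_cap, turnover_cap) if sme else max(fixed_cap, turnover_cap)


# Hypothetical enterprise with EUR 2 billion in global annual turnover.
turnover = 2_000_000_000
gdpr_max = gdpr_ceiling(turnover)                # 80,000,000
ai_act_max = ai_act_high_risk_ceiling(turnover)  # 60,000,000
combined_max = gdpr_max + ai_act_max             # 140,000,000
print(f"Combined ceiling: EUR {combined_max:,}")

# Hypothetical SME with EUR 100 million turnover: the lower figure applies.
sme_max = ai_act_high_risk_ceiling(100_000_000, sme=True)  # 3,000,000
```

For large organisations the percentage, not the fixed amount, sets the ceiling, which is why exposure scales with turnover.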

Security and privacy cannot be owned in separate workstreams. The AI tool triggering your GDPR review is the same one requiring a high-risk AI system risk assessment. The EU AI Act and SaaS governance have to be a unified function.

Also Read: Embedded AI Governance: The Blind Spot in M365, Salesforce and Google. Learn how AI embedded inside your existing SaaS tools creates compliance exposure you may not even know to look for.

6. Who Owns This Internally? The AI Officer Question

EU AI Act and SaaS governance require a single accountable owner. Right now, in most enterprises, that accountability is fragmented across CIO, CISO, and procurement, with no one owning the AI system inventory.

The AI Officer does not need to be a new hire.

In most organisations, it is the CISO or CAIO expanding their scope.

What matters: explicit authority to classify AI systems, enforce vendor compliance in procurement, maintain the audit trail, and report high-risk AI incidents to National Competent Authorities.

That reporting structure needs to exist before August 2, 2026, not after the first notice arrives.

7. Getting Operationally Ready: Four Things to Action Before August 2

The deployer obligations above tell you what the law requires. This is about the operational gaps most enterprises still have not closed, the ones that make those obligations undeliverable.

- Discover your shadow AI first: You cannot inventory what you cannot see. Integrate with SSO, finance feeds, and network traffic to surface every tool in use, sanctioned or not. This is the prerequisite for everything else.

- Classify every AI tool against Annex III: Work through your inventory and assign a risk tier to each tool. When uncertain, classify higher. Employment, credit, education, and biometrics are the highest-priority targets and the most commonly misclassified.

- Audit Article 50 transparency across user-facing AI: Every chatbot, AI assistant, and AI-generated content tool deployed to customers or employees must disclose its AI nature from August 2. Most enterprises have not completed this audit.

- Assign internal ownership before enforcement begins: Compliance without an accountable owner defaults to nobody. Designate who classifies systems, who owns vendor due diligence, and who holds the incident reporting mandate. Do this before the deadline, not as a response to it.
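The "when uncertain, classify higher" rule from the list above can be sketched as a first-pass triage function. This is a deliberately simplified assumption: the area list contains only the four categories named in this article, not the full Annex III text, and actual classification requires legal review. The `classify` function and its parameters are hypothetical.

```python
from typing import Iterable

TIER_ORDER = ["minimal", "limited", "high"]

# Only the priority areas named in this article; NOT the complete Annex III list.
HIGH_RISK_AREAS = {"employment", "credit", "education", "biometrics"}


def classify(use_areas: Iterable[str], interacts_with_people: bool,
             uncertain: bool = False) -> str:
    """Assign a provisional risk tier to one tool in the inventory."""
    if HIGH_RISK_AREAS & {area.lower() for area in use_areas}:
        tier = "high"
    elif interacts_with_people:
        tier = "limited"   # transparency obligations for systems people interact with
    else:
        tier = "minimal"
    if uncertain:
        # When uncertain, move one tier up (capped at "high").
        index = min(TIER_ORDER.index(tier) + 1, len(TIER_ORDER) - 1)
        tier = TIER_ORDER[index]
    return tier


print(classify({"employment"}, interacts_with_people=True))
print(classify(set(), interacts_with_people=True))
print(classify(set(), interacts_with_people=False, uncertain=True))
```

The output of a triage step like this is a starting point for the accountable owner to confirm or override, not a final classification.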

8. How CloudEagle.ai Closes the EU AI Act Compliance Gap for SaaS-Heavy Enterprises

EU AI Act compliance is a SaaS sprawl problem. You cannot maintain inventories, oversight mechanisms, logs, and vendor documentation when AI tools are being adopted faster than governance can follow.

CloudEagle gives IT, Security, Finance, and Compliance teams a single command centre to discover, classify, govern, and audit every AI and SaaS application, including those nobody approved.

- Shadow AI Discovery: The EU AI Act requires a complete, continuously updated inventory, which is impossible without knowing what tools exist. CloudEagle surfaces every AI tool in use by integrating with SSO, browser activity, financial feeds, and network traffic, and scoring them by risk level and vendor compliance status.

Outcome: the foundational inventory that every EU AI Act and SaaS governance obligation depends on.

- AI Risk Classification and Scoring: Manual Annex III classification falls apart the moment a new tool enters the stack. CloudEagle enriches discovered applications with risk context, vendor details, and contract data, enabling continuous classification.

Outcome: a risk-aware AI estate with current, auditable data.

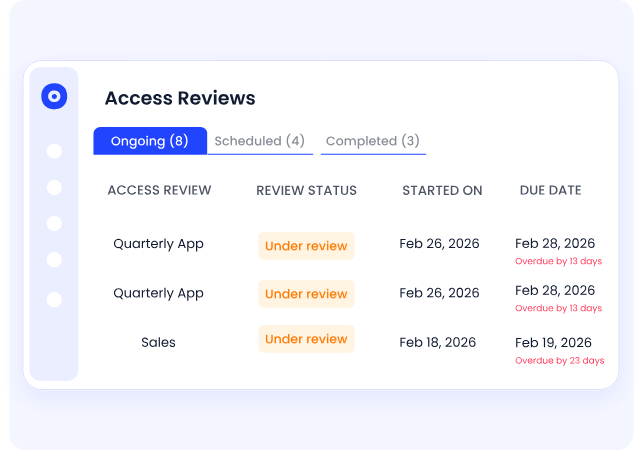

- Access Governance and Human Oversight: High-risk AI deployer obligations require controlled, reviewable access for every high-risk AI system. CloudEagle automates access reviews, enforces role-based controls, flags excessive privileges, and generates compliance-ready audit reports.

Outcome: continuous governance that satisfies EU AI Act and GDPR obligations simultaneously.

- Automated Log Retention and Audit Readiness: High-risk AI systems require six months of automated logs. CloudEagle generates and maintains audit trails continuously, with no manual collection or scrambling before review.

Outcome: timestamped evidence ready for National Competent Authority inquiry, any day.

- Vendor Compliance Verification: Most procurement teams have no structured process to track whether AI vendors have met provider obligations. CloudEagle's vendor workflows flag renewals where AI compliance documentation is missing.

Outcome: vendor due diligence becomes a standard checkpoint, not a last-minute scramble.

9. The Governance Infrastructure You Build for Compliance Will Outlast the Deadline

Most enterprises are treating the EU AI Act and SaaS governance like a one-time exercise. Build an inventory. Classify. Update contracts. Move on. That gets you through an audit, not through what comes after it.

The AI landscape does not pause between compliance cycles. New tools enter the stack. Vendors update systems and shift risk tiers. Enforcement escalates. The tools your employees are using in August 2027 will look nothing like the ones in your current inventory.

The question is not whether you will be compliant by August 2, 2026. It is whether the governance you build now will still be working when the next wave hits.

10. FAQs

Does the EU AI Act apply to companies outside the EU?

Yes. Any organisation whose AI systems are used in the EU or affect EU residents must comply, regardless of where the company is headquartered.

What is the difference between an AI provider and a deployer under the EU AI Act?

Providers build and sell AI systems. Deployers use third-party AI professionally. Most enterprise SaaS buyers are deployers, with lighter but still enforceable obligations.

What are the penalties for non-compliance with the EU AI Act?

Up to €35 million or 7% of global turnover for prohibited practices. High-risk violations carry up to €15 million or 3%. SMEs receive reduced caps.

What is a Fundamental Rights Impact Assessment (FRIA) and who needs to conduct one?

A pre-deployment assessment of a high-risk AI system's impact on fundamental rights. Mandatory for public bodies and essential service providers before first use.

How does the EU AI Act interact with existing SaaS vendor agreements?

Most existing contracts lack required AI clauses. Ask vendors for conformity assessment status, EU database registration, and compliance warranties before renewing.

Can shadow AI tools create EU AI Act liability?

Yes. Deployers are responsible for all AI used professionally in their organisation, whether IT approved it or not. Unsanctioned tools carry the same obligations as procured ones.
