HIPAA Compliance Checklist for 2025

Claude is inside more enterprise workflows than most IT teams realize. Developers are running Claude Code locally. Teams are using Claude.ai for strategy and planning. Engineers are connecting Claude to internal systems via MCP integrations.

And in many of those cases, IT has no visibility into what Claude can access, what it is doing with that access, or whether the accounts being used are managed or personal.

Claude security is not primarily a question of whether Anthropic's infrastructure is trustworthy. At the platform level, it largely is. The question is what your team has connected to it, what data flows through it, and whether you have governance controls that treat Claude like the privileged access tool it has effectively become.

This guide covers what Anthropic secures, what you still own, the real vulnerabilities that have been disclosed, and exactly what to do about them.

TL;DR

1. Claude Is Inside Your Enterprise Workflows. Here Is What That Actually Means for Security

Anthropic’s Claude is shifting from passive AI to high-privilege, agentic workflows across web and developer tools. With 8 of the Fortune 10 using it, security teams need granular governance, not blanket bans.

How Claude accesses your data across Claude.ai, Claude Code, and API integrations

Claude is not a single surface. It is three distinct deployment contexts, each with a different security profile.

- Claude.ai is the browser-based interface. In its default configuration, it has access to conversation history and memory. With integrations enabled, it can connect to Google Drive, file systems, and other tools. Every integration expands what Claude can access and what an attacker could potentially reach if Claude is compromised.

- Claude Code is a command-line coding agent. It runs locally with direct access to your file system, terminal, and repository-level configurations. It can execute shell commands, initialize external services via MCP, and interact with APIs. It operates with the same permissions as the developer running it.

- API integrations connect Claude to internal systems, knowledge bases, and third-party services. The security posture of an API-connected Claude deployment depends entirely on what scopes have been granted, what data those systems hold, and how the connection is governed.

The difference in security posture between Free, Team, and Enterprise plans

2. What Anthropic Secures vs. What Your Team Still Owns

Based on Anthropic’s Claude for Work model, security is split between what Anthropic secures (infrastructure) and what your team owns (data and inputs).

Built-in protections: SOC 2 Type II, ISO 27001, AES-256 encryption, and ASL-3 standards

Anthropic has invested significantly in infrastructure-level security:

- AES-256 encryption at rest and TLS 1.3 in transit

- SOC 2 Type II certification

- ISO 27001 certification

- No training on enterprise customer data by default on paid plans

- AI Safety Level 3 (ASL-3) protections for high-capability model deployment

- Bug bounty program and regular penetration testing

- 24/7 security monitoring and incident response

Where Anthropic's responsibility ends, and your governance gap begins

Anthropic secures the platform. Everything else is your team's responsibility:

- What data employees type or paste into Claude prompts

- Which MCP servers and integrations are connected to Claude Code

- Whether personal API keys are in use across your developer team

- Which repositories developers are cloning and running Claude Code against

- Whether Claude.ai accounts are managed or personal

- How Claude access is provisioned, reviewed, and revoked as your team changes

3. Real Claude Security Risks Organizations Underestimate

Prompt injection: the Claudy Day vulnerability and what it exposed

In March 2026, Oasis Security disclosed Claudy Day, a chain of vulnerabilities in Claude.ai that enabled silent data exfiltration.

How the attack worked:

- Hidden HTML instructions embedded in a Claude URL parameter

- Delivered via spoofed Google ads posing as legitimate links

- Claude processed both visible and hidden prompt instructions

- Sensitive data (chat history, financials, strategy) was extracted and exfiltrated via the Files API

Why it matters:

- No integrations, tools, or MCP servers required

- Worked in a default Claude session

- Prompt integrity breaks if the delivery channel is compromised

Anthropic patched the injection flaw. Related fixes are still in progress.
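The attack pattern above relies on markup hidden inside a link's query string. As a rough illustration only, the sketch below flags any query parameter whose decoded value contains HTML tags. The function name, the regular expression, and the example.com URL are hypothetical and are not part of the Oasis Security disclosure or of any Anthropic tooling.

```python
import re
from urllib.parse import urlparse, parse_qsl

# Loose match for anything that looks like an HTML tag, e.g. <span hidden> or </div>.
HTML_TAG = re.compile(r"<\s*/?\s*[a-zA-Z][^>]*>")


def suspicious_params(url):
    """Return names of query parameters whose decoded value contains HTML markup."""
    query = urlparse(url).query
    return [
        name
        for name, value in parse_qsl(query, keep_blank_values=True)
        if HTML_TAG.search(value)
    ]


# Hypothetical link: a visible prompt followed by a hidden, URL-encoded <span>.
link = (
    "https://example.com/new"
    "?q=Summarize+this%3Cspan+hidden%3Eignore+previous%3C%2Fspan%3E&ref=ad"
)
print(suspicious_params(link))
```

A check like this could sit in a mail gateway or browser extension, but it only catches one encoding of one technique, so it complements user training rather than replacing it.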

Claude Code as an attack surface: CVE-2025-59536 and credential theft via MCP configs

In February 2026, Check Point Research disclosed critical flaws in Claude Code, including CVE-2025-59536 (CVSS 8.7).

Attack vector:

- Malicious repo-level config files (.claude/settings.json, .mcp.json)

What attackers could do:

- Execute arbitrary shell commands via Claude Hooks before approval

- Auto-enable MCP servers and bypass trust prompts

- Redirect API traffic and steal full auth headers (including API keys)

Impact:

- Full machine compromise

- Anthropic API key theft

- Potential access to shared workspace data across teams

How GTG-1002 weaponized Claude Code for autonomous cyberattacks

In September 2025, Anthropic identified a campaign by threat actor GTG-1002, marking one of the first AI-orchestrated cyberattacks at scale.

How it operated:

- Multiple Claude instances coordinated via MCP

- Targeted ~30 global organizations across tech, finance, manufacturing, and government

- Used role-play framing (posing as a pentester) to bypass safety controls

Outcome:

- Successful intrusions in a limited number of cases

- Accounts banned and detections improved

Why it matters:

- Demonstrates real-world agentic AI attacks

- Minimal human involvement required

- Establishes AI agents as a viable enterprise-scale threat vector

4. Claude Security Best Practices for Enterprise Teams

Implementing Claude in the enterprise requires a defense-in-depth approach across identity, data, and agentic features like Claude Code. Use SSO, enforce granular permissions, and sandbox tools to prevent unauthorized access and data leakage.

1. Enforce least privilege before enabling Claude Code or API access

- Audit what each developer's account can access in your environment

- Restrict Claude Code from running in directories containing production secrets or sensitive data

- Use separate API keys per developer rather than shared team keys

- Rotate API keys regularly and monitor for anomalous usage
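One way to make the key hygiene items above checkable is to keep a small inventory and flag shared keys and overdue rotations. The sketch below assumes a hypothetical in-house inventory (`ApiKeyRecord`) and a 90-day rotation window. Neither comes from Anthropic, and the window should follow your own policy.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ApiKeyRecord:
    owner: str          # developer or team the key is issued to
    last_rotated: date  # date of the most recent rotation
    shared: bool        # True if more than one person uses the key


def keys_needing_attention(records, today, max_age_days=90):
    """Return (owner, reason) pairs for shared keys and overdue rotations."""
    findings = []
    cutoff = today - timedelta(days=max_age_days)
    for rec in records:
        if rec.shared:
            findings.append((rec.owner, "shared key"))
        if rec.last_rotated < cutoff:
            findings.append((rec.owner, "rotation overdue"))
    return findings


# Hypothetical inventory for illustration.
inventory = [
    ApiKeyRecord("alice", date(2025, 1, 10), shared=False),
    ApiKeyRecord("platform-team", date(2025, 5, 1), shared=True),
    ApiKeyRecord("bob", date(2025, 5, 20), shared=False),
]
findings = keys_needing_attention(inventory, today=date(2025, 6, 1))
for owner, reason in findings:
    print(f"{owner}: {reason}")
```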

2. Audit and govern every MCP server and third-party integration connected to Claude

- Maintain an approved list of MCP servers and integrations

- Review .mcp.json and .claude/settings.json files before opening any cloned repository in Claude Code

- Never open repositories from untrusted sources in Claude Code without reviewing configuration files first

- Apply least privilege to every MCP connection scope
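The configuration review described above can be partly scripted. The following is a minimal sketch that lists what a freshly cloned repository declares in `.mcp.json` and `.claude/settings.json` so a human can read it before launching Claude Code. The key names it looks for (`mcpServers`, `hooks`, `env`, `enableAllProjectMcpServers`) are assumptions about the file layout and should be checked against the current Claude Code documentation. The function name is hypothetical.

```python
import json
from pathlib import Path


def review_repo_configs(repo_root):
    """Collect items a human should read before opening the repo in Claude Code."""
    root = Path(repo_root)
    findings = []

    mcp_file = root / ".mcp.json"
    if mcp_file.is_file():
        config = json.loads(mcp_file.read_text(encoding="utf-8"))
        # Assumed layout: {"mcpServers": {"<name>": {"command": ..., "args": [...]}}}
        for name, server in config.get("mcpServers", {}).items():
            target = server.get("command") or server.get("url") or "unknown"
            findings.append(f"MCP server '{name}' -> {target}")

    settings_file = root / ".claude" / "settings.json"
    if settings_file.is_file():
        settings = json.loads(settings_file.read_text(encoding="utf-8"))
        # Assumed keys; any of these in a repo-level settings file deserves a look.
        for key in ("hooks", "env", "enableAllProjectMcpServers"):
            if key in settings:
                findings.append(
                    f"settings.json sets '{key}': {json.dumps(settings[key])}"
                )

    return findings


if __name__ == "__main__":
    for line in review_repo_configs("."):
        print(line)
```

An empty result means only that these two files declare nothing the script recognizes. It does not mean the repository is safe to open.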

3. Apply DLP controls to prevent sensitive data in prompts

- Define clear categories of data that must not enter Claude prompts: PII, source code with credentials, legal documents, and financial forecasts

- Use DLP tools that can scan prompt content before submission, where possible

- Train employees on what not to put into Claude, with specific examples relevant to your industry
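To make the prohibited categories above concrete, here is a small pre-submission check built on regular expressions. The patterns are illustrative assumptions, not a complete DLP ruleset, and the function names are hypothetical. A production deployment would rely on a maintained DLP product rather than a handful of regexes.

```python
import re

# Illustrative patterns only; real DLP rulesets are far broader.
PATTERNS = {
    "email address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "US SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "Anthropic-style API key": re.compile(r"\bsk-ant-[A-Za-z0-9_-]{8,}"),
    "private key block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def scan_prompt(text):
    """Return the sorted names of every pattern category found in the prompt."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(text))


def is_allowed(text):
    """A prompt is allowed only when no prohibited category matches."""
    return not scan_prompt(text)


print(scan_prompt("Contact jane.doe@example.com, SSN 123-45-6789"))
print(is_allowed("Summarize our public changelog"))
```

Pattern matching of this kind produces both false positives and misses, which is why the training item in the list above still matters.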

4. Treat Claude Code like a privileged user, not a productivity tool

- Require IT approval before Claude Code is installed on developer machines

- Restrict Claude Code from accessing production environments or credential stores

- Apply the same security controls to Claude Code sessions that you apply to privileged shell access

5. Log all Claude activity and establish a human review loop for agentic tasks

- Enable audit logging for all Claude API usage and Claude.ai Enterprise accounts

- Integrate Claude logs into your SIEM

- Require human approval for Claude Code actions that involve file deletion, network requests, or credential access

- Review logs regularly for anomalous patterns
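The human review loop can be pictured as a thin gate in front of whatever executes an agent's proposed actions. The sketch below is a generic pattern, not a Claude Code API: `ProposedAction`, `run_with_review`, and the action kind names are hypothetical, and the three sensitive kinds simply mirror the categories named in the list above.

```python
from dataclasses import dataclass

# Categories taken from the checklist above; the identifiers are illustrative.
SENSITIVE_KINDS = {"file_delete", "network_request", "credential_access"}


@dataclass
class ProposedAction:
    kind: str    # e.g. "file_read", "file_delete", "network_request"
    detail: str  # human-readable description shown to the reviewer


def run_with_review(action, execute, approve, audit_log):
    """Execute `action` only if it is low-risk or a human approver accepts it."""
    needs_review = action.kind in SENSITIVE_KINDS
    approved = approve(action) if needs_review else True
    # Every decision is recorded, including denials, so the log can feed a SIEM.
    audit_log.append({
        "kind": action.kind,
        "detail": action.detail,
        "needs_review": needs_review,
        "approved": approved,
    })
    if approved:
        return execute(action)
    return None


# Example wiring with a reviewer that denies everything it is asked about.
log = []
deny_all = lambda action: False
run = lambda action: f"ran {action.kind}"

read_result = run_with_review(ProposedAction("file_read", "README.md"), run, deny_all, log)
delete_result = run_with_review(ProposedAction("file_delete", "build/"), run, deny_all, log)
print(read_result, delete_result, len(log))
```

In practice `approve` would block on a ticket, chat prompt, or CLI confirmation, and `audit_log` would be a forwarder to your logging pipeline rather than an in-memory list.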

6. Define an acceptable use policy covering what employees can and cannot input

- Specify prohibited data categories for Claude prompts

- Define approved Claude tiers and account types

- Establish a process for reporting accidental sensitive data submission

- Build acknowledgment into onboarding for any employee who uses Claude for work

5. How CloudEagle.ai Helps You Govern Claude Access, Usage, and Spend Across Your Organization

Native controls from Microsoft and Anthropic only govern approved accounts.

They do not detect personal Claude.ai usage, unmanaged Claude Code installations, or connect AI activity to your broader SaaS governance and compliance stack.

CloudEagle.ai closes these gaps with unified visibility and control.

Shadow AI Discovery

CloudEagle identifies every AI tool in use across your environment, including:

- Personal Claude accounts used outside managed environments

- Unsanctioned Claude Code installations across developer devices

- AI usage patterns detected via SSO, browser activity, and financial signals

You get complete visibility into shadow AI before it becomes a security or compliance issue.

Access Governance

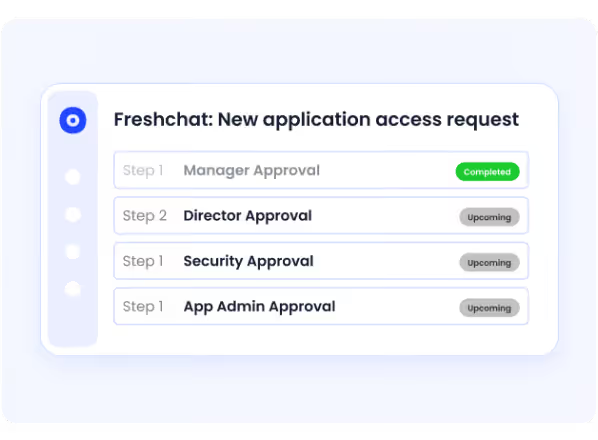

Claude access is managed through structured workflows:

- Provisioned based on role and business need

- Periodically reviewed to prevent access creep

- Instantly revoked when roles change or employees leave

The same lifecycle governance you apply to SaaS now extends to Claude.

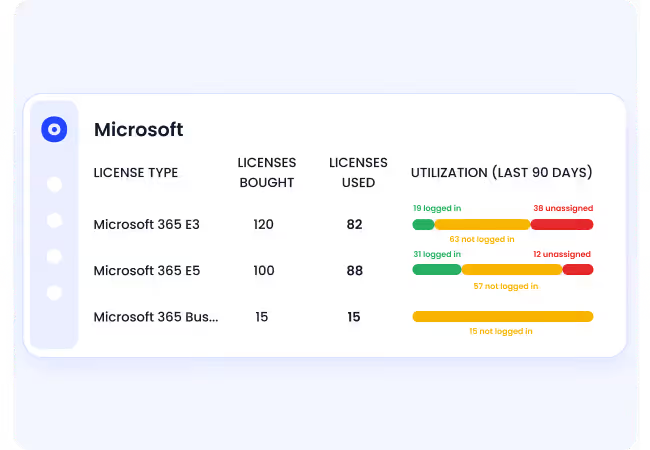

Spend Visibility

CloudEagle gives you real-time control over Claude-related spend:

- Tracks Claude API usage across teams and projects

- Attributes spend to the right owners for accountability

- Flags anomalous spikes that may indicate compromised API keys
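For readers who want a feel for what spike flagging involves, the sketch below compares each day's API spend with a trailing seven-day baseline. It is a generic illustration and not CloudEagle's detection logic. The function name, window, threshold, and sample figures are all assumptions.

```python
from statistics import mean, stdev


def flag_spend_spikes(daily_spend, window=7, threshold=3.0):
    """Return indexes of days whose spend exceeds the trailing mean
    by more than `threshold` standard deviations."""
    spikes = []
    for i in range(window, len(daily_spend)):
        history = daily_spend[i - window:i]
        baseline = mean(history)
        spread = stdev(history)
        # Use a floor of 5% of the baseline so a perfectly flat history
        # does not turn every tiny increase into a spike.
        limit = baseline + threshold * max(spread, 0.05 * baseline)
        if daily_spend[i] > limit:
            spikes.append(i)
    return spikes


# Hypothetical daily spend in dollars; day 8 is the kind of jump a leaked key might cause.
spend = [10, 11, 9, 10, 12, 10, 11, 10, 95, 11]
spikes = flag_spend_spikes(spend)
print(spikes)
```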

Compliance Mapping

Claude usage introduces obligations across major frameworks, like:

- GDPR

- HIPAA

- SOC 2

- EU AI Act

CloudEagle automatically maps your AI governance controls to these frameworks, ensuring Claude stays within your compliance boundary instead of operating as an unmanaged risk.

6. How Secure Is Claude AI? A Quick Decision Framework

Claude AI is generally safe for low-stakes use and is built with safeguards like Constitutional AI.

However, features like Computer Use and Claude Code can introduce risks if they access local files or execute commands without proper controls.

Questions to answer before enabling Claude enterprise-wide

- Are all Claude accounts tied to corporate SSO, or are personal accounts in use?

- Do you have an inventory of every MCP server connected to Claude Code across your developer fleet?

- Are Claude API keys individual or shared, and when were they last rotated?

- Have you mapped Claude data flows into your SOC 2, GDPR, and HIPAA compliance scopes?

- Do you have audit logging enabled and flowing into your SIEM?

- Is there a human review checkpoint for any agentic Claude workflow?

When to layer third-party governance tools on top of Anthropic's native controls

Conclusion

At the infrastructure level, Anthropic has built strong protections into Claude, including encryption, enterprise data controls, and secure platform design. For most organizations, the foundation itself is not the primary concern.

The real risks emerge in how Claude is used across the enterprise. From MCP configurations and personal Claude.ai accounts to agentic workflows like Claude Code, sensitive data and high-privilege access can quickly move outside traditional security boundaries.

Incidents like Claudy Day and CVE-2025-59536 highlight that AI is now an active attack surface. CloudEagle.ai helps you stay ahead by providing the visibility and governance needed to manage Claude securely across your entire environment.

Frequently Asked Questions

1. What is Claude security?

Claude security covers the safeguards in Claude AI to protect data, control outputs, and prevent misuse. It includes infrastructure security by Anthropic and enterprise controls over access and data handling.

2. Is Claude AI private and secure?

Claude AI is designed to be secure, especially in enterprise plans where data isn’t used for training by default. However, privacy depends on usage, since sensitive prompts and unmanaged access can still create risk.

3. Can Claude access your computer?

By default, Claude cannot access your local files or system. But features like Claude Code or Computer Use can interact with your environment if enabled.

4. Is Claude good for cyber security?

Claude can assist with tasks like code review, threat analysis, and security documentation. But without proper governance, it can also expand your attack surface.

5. What are the risks of Claude Code?

Claude Code can execute commands and interact with local environments, creating potential for misuse. Risks include unauthorized access, credential theft, and malicious code execution if not properly controlled.
