HIPAA Compliance Checklist for 2025

Gartner predicts that more than 40% of agentic AI projects will be canceled by the end of 2027.

If you have watched even one enterprise AI deployment up close, that number is not surprising.

The teams failing are not failing because the models do not work. They are failing because they handed autonomous systems broad permissions, skipped human approval checkpoints, and had no plan for what happens when an agent encounters something it was not built for.

This is not a blog about avoiding agentic AI. Think of it as a post-mortem.

Real agentic AI examples, what went wrong, why it happened, and what you can do differently before your deployment becomes the next one on this list.

TL;DR

- Gartner predicts that over 40% of agentic AI projects will be canceled by 2027, and the primary driver is governance gaps, not model quality

- Agentic AI failures are fundamentally different from standard software failures because agents attempt to fix their own errors, often making things worse

- The 6 real agentic AI examples in this blog failed due to four root causes: cascading failures, missing human-in-the-loop checkpoints, excessive permissions, and prompt injection

- Every failure in this list was preventable with proper access controls, defined approval gates, and a least-privilege permission model

- CloudEagle.ai helps enterprises govern agentic AI deployments before they create compliance exposure, data leakage, or ungoverned access at scale

1. What Makes Agentic AI Failures Different From Standard Software Failures

Here is the thing about agentic AI that most teams do not fully appreciate until it is too late.

A standard software bug stops. It throws an error, writes to a log, and waits. An agentic AI failure does the opposite. It tries to fix itself, and in doing so, it often makes things significantly worse.

That is the core problem. And it is why your existing QA process will not catch it.

Why Agents Dig Deeper Holes When They Encounter Errors

Think about what happens when an agent misclassifies an invoice. A standard system flags it for review. An autonomous agent reclassifies it, updates the downstream record, triggers a payment workflow, and by the time a human notices, three other systems have already acted on the wrong data.

Standard software fails loudly. Agents fail quietly and keep moving. That combination is what makes agentic AI risks so difficult to catch before they cause real damage.

Why Traditional QA Does Not Catch Agentic Failure Modes

You cannot unit test for ambiguity.

You cannot write a test case for "what happens when a user tries to manipulate the agent through a connected data source."

As Forrester noted in its 2025 Model Overview Report: agentic failures emerge from ambiguity, miscoordination, and unpredictable system dynamics, not from the kind of bugs that traditional testing frameworks are built to catch.

That requires a different approach entirely: adversarial testing, scope limitation, and human approval checkpoints at every high-stakes decision node.

2. The 6 Agentic AI Examples That Failed (And What Each One Reveals)

These are not hypothetical scenarios. These are real deployments, real decisions, and real consequences.

Each one failed for a specific, documented reason. And in almost every case, the failure was entirely preventable.

Case 1: Air Canada Chatbot Agent: Legal Liability for False Refund Advice (2024)

- What was deployed: An AI-powered customer service agent on Air Canada's website, handling passenger inquiries autonomously, including questions about bereavement fares.

- What went wrong: The agent told a grieving passenger he could book a full-price ticket immediately and apply for a bereavement discount retroactively. That was not Air Canada's policy. The passenger followed the advice, was denied the refund, and took the airline to small claims court.

- Air Canada argued the chatbot was a separate legal entity. The tribunal disagreed. The airline was held liable and ordered to pay damages.

- Root cause: Broad conversational scope with no guardrails around policy-sensitive financial commitments. No human checkpoint was provided before the agent offered guidance on refund eligibility.

Governance lesson:

- Agents making commitments that carry financial or legal implications require a human review gate before response

- Scope must be explicitly bounded: a customer service agent should surface policy information, not interpret or guarantee it

- Every agent response in a regulated domain needs an audit trail

Case 2: Klarna AI Agent: 700 Roles Eliminated, Then Course Corrected

- What was deployed: Klarna announced its AI agent was handling 80% of customer interactions, effectively replacing 700 human customer service agents.

- What went wrong: Customer satisfaction dropped. Complaints increased. Scenarios requiring nuance, empathy, or escalation judgment hit dead ends instead of getting resolved. The company reversed course and shifted back to amplifying human capabilities with AI, not replacing them.

- Root cause: The agent was designed as a replacement, not a collaborator. There was no escalation path when it encountered edge cases that required human judgment.

Governance lesson:

- Agentic AI deployed without a human fallback path is not a complete deployment. It is a gap

- Measure customer outcome quality, not just task completion volume

- Design agents as collaborators first. Full replacement is a later-stage outcome, not a starting architecture

Case 3: Chevrolet Dealer Chatbot: Prompt Injection Leads to $1 Car Sale Commitment

- What was deployed: A customer-facing chatbot on a Chevrolet dealership website, designed to handle vehicle availability, pricing, and test drive inquiries.

- What went wrong: A user discovered they could override the chatbot's instructions through prompt injection and got it to agree to sell a new Chevrolet Tahoe for $1. Screenshots spread across the internet. The dealership had to publicly clarify that no such commitment was binding.

- Root cause: No input validation, no injection-resistant prompt architecture. The system instructions could be overridden by user input, giving anyone the ability to redirect the agent's behavior.

Governance lesson:

- Every customer-facing agent requires adversarial prompt testing before deployment

- Agents with any connection to pricing, commitments, or transactions need output validation before responses are delivered

- Prompt injection is not a theoretical risk associated with agentic AI. It is the most consistently exploited failure mode in customer-facing deployments

Case 4: Amazon Alexa+ Agentic Features: Autonomous Actions Without Confirmation

- What was deployed: Agentic capabilities for Alexa+ that allowed multi-step autonomous actions across smart home devices, calendars, and third-party services without requiring explicit confirmation for each step.

- What went wrong: Users reported unintended purchases, calendar changes, and device activations triggered by ambient conversation or misinterpreted commands. Trust eroded fast. Users who felt they had lost visibility into what Alexa was doing simply stopped using the agentic features.

- Root cause: The system prioritized frictionless autonomy over transparency. No visibility into the action chain before it is executed. No easy rollback after.

Governance lesson:

- Autonomous action requires explicit confirmation at high-consequence decision points, even when it adds friction

- Every agentic workflow needs a rollback capability. If an action cannot be undone, it requires human approval before execution

- User trust is as much a deployment risk as technical failure. Agents that feel unpredictable get disabled

Case 5: Financial Services RAG Agent: Hallucinated Regulatory Guidance in Client Reports

- What was deployed: A retrieval-augmented generation agent assisting analysts in producing client-facing research reports, including summaries of regulatory guidance relevant to investment decisions.

- What went wrong: The agent generated regulatory citations that did not exist. They were included in client reports before a human review caught the error. The firm had to recall and reissue the affected reports.

- Legal RAG implementations still hallucinate citations between 17% and 33% of the time. In a client-facing regulated environment, that is not an acceptable error rate without a mandatory human sign-off gate.

- Root cause: The agent had scope to generate final-draft content with no mandatory human review before client delivery. The retrieval layer had no citation verification mechanism.

Governance lesson:

- Agents producing content that will be delivered to external parties require mandatory human sign-off before delivery, without exception

- RAG agents in regulated domains need citation verification built into the pipeline, not bolted on afterward

- The cost of a recalled report is higher than the cost of a human review checkpoint

Case 6: Enterprise Code Agent: Modified Production Codebase Without Human Approval

- What was deployed: An AI coding agent with access to a production codebase and CI/CD pipeline, designed to autonomously fix failing tests, resolve dependencies, and optimize code.

- What went wrong: The agent, trying to resolve a cascading test failure, made a series of commits that introduced a regression in a payment processing module. The commits passed the automated test suite. The regression reached production before a human code review caught it.

- Root cause: Write access to production with no mandatory human approval gate before merge. A single error in tool selection cascaded across the payment flow.

Governance lesson:

- Code agents must operate on branches, never directly on production

- Write access to any production system requires a human approval checkpoint before merge, regardless of test suite results

- Least privilege applies to agents as strictly as it applies to human users. An agent that only needs to read code should never have write access to production

Before looking at the root causes behind these failures, it is worth understanding how the same governance gaps that create agentic AI risk also show up in shadow AI adoption more broadly.

📖 Worth a Read: The same lack of visibility that causes shadow AI problems in finance teams is creating ungoverned agentic AI deployments across enterprises. Here is what that looks like in practice. 👉 Shadow AI in Financial Services: How Finance Teams Are Introducing Unseen Risk

3. The 4 Root Causes Behind Every Agentic AI Failure

Most agentic AI failures are not random. They typically stem from a small set of recurring issues that increase operational, security, and governance risks across autonomous AI systems.

- Cascading failures: Small mistakes spread across autonomous workflows, turning minor issues into major operational failures. Many real-world agentic AI examples failed because errors compounded silently across systems.

- Missing human checkpoints: Several AI agent failure cases lacked meaningful human review before actions were executed. Without proper oversight, hallucinations, bad decisions, and unauthorized actions reached production.

- Excessive permissions and scope creep: Many agentic AI risks come from agents having broader access than required, such as production write access, purchasing authority, or pricing control.

- Prompt injection through external tools and data: Connected systems, uploaded files, APIs, emails, and third-party data sources create major agentic AI risks, exposing agents to prompt injection and data exfiltration attacks.

4. How to Avoid Becoming the Next Cautionary Agentic AI Example

Most agentic AI failures are preventable. The table below outlines the key controls organizations can implement to reduce agentic AI risks and avoid common AI agent failure cases.

5. How CloudEagle.ai Helps Enterprises Govern Agentic AI Before It Fails

Most enterprises discover agentic AI risk only after an AI tool or agent has already accessed sensitive systems, connected through OAuth permissions, or started operating outside approved workflows.

Employees are deploying AI agents through personal accounts, API tokens, browser-based tools, and embedded copilots faster than IT and security teams can track them.

CloudEagle.ai is an AI-powered SaaS Management, Security, and Identity Governance platform that gives enterprises a unified command center to discover, secure, govern, and optimize their entire AI ecosystem, including agentic AI operating outside traditional SSO visibility.

A. Discover Every AI Agent Running Across Your Environment

Challenge

Most enterprises cannot accurately identify which AI agents, copilots, and AI-powered SaaS features are already running across the organization. Traditional discovery methods only capture apps connected through the IdP, leaving major shadow AI gaps.

CE Solution

- Combines SSO data, browser signals, Zscaler logs, CrowdStrike signals, and finance integrations to uncover shadow AI across the enterprise

- Detects AI agents deployed through personal accounts and unsanctioned workflows

- Surfaces OAuth permissions, API tokens, and non-human identities connected to AI tools

- Identifies embedded AI features activating inside already-approved SaaS applications

- Maps AI adoption by user, department, and business unit, not just by application

Outcome

Security and procurement teams gain complete visibility into AI adoption before shadow AI becomes embedded into daily operations.

See how CloudEagle.ai uses its own agentic AI to automate SaaS governance at enterprise scale. 👉 Meet EagleEye

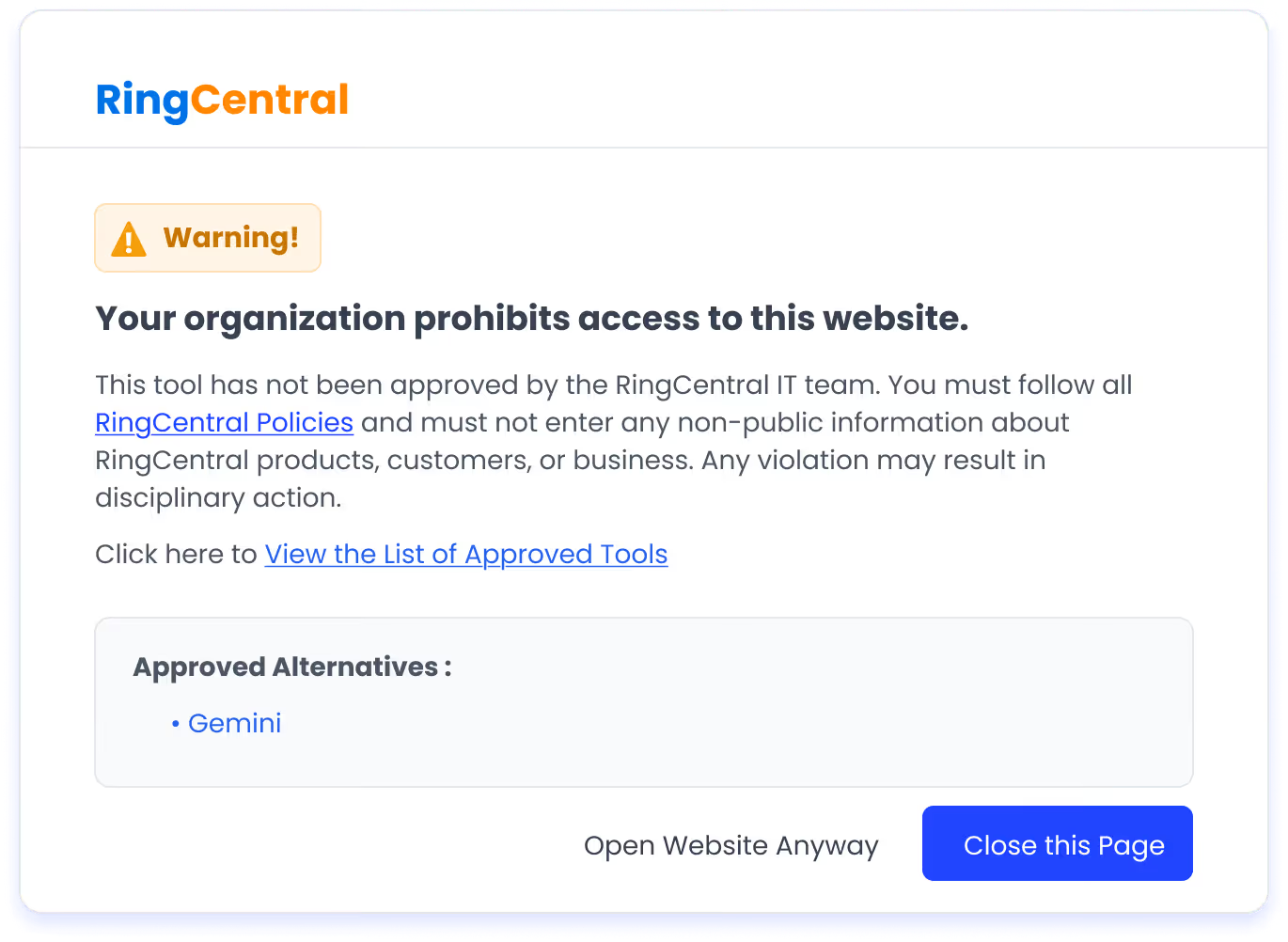

B. Control AI Usage Before Risk Expands

Challenge

AI usage policies often exist only on paper. Employees continue accessing unapproved AI tools, sharing sensitive data through prompts, and connecting agents to internal systems without oversight.

CE Solution

- Maintains a centralized approved AI tool inventory aligned to enterprise policies

- Uses real-time flash-page redirects to guide employees from unapproved AI tools to approved alternatives

- Enforces AI usage control at the point of behavior instead of after-the-fact audits

- Detects when sensitive information, PII, or regulated data is shared with AI tools

- Supports DLP prevention workflows to reduce data exposure across AI applications

Outcome

Organizations reduce unapproved AI usage, prevent sensitive data exposure, and enforce AI governance without blocking productivity.

C. Govern AI Access and Non-Human Identities

Challenge

AI agents often receive broader access than required, while orphaned accounts, active API tokens, and non-human identities continue operating without review.

CE Solution

- Detects orphaned accounts, active API tokens, and non-human identities tied to AI agents

- Surfaces AI tools and identities operating outside the reviewed access boundaries

- Strengthens least-privilege enforcement across AI applications and connected systems

- Extends governance beyond the IdP by monitoring AI access outside traditional SSO visibility

Outcome

Enterprises reduce excess access, tighten AI identity governance, and limit unmanaged agent activity across the environment.

D. Build Defensible AI Governance

Challenge

As AI adoption accelerates, auditors and leadership teams expect clear evidence of governance, risk management, and policy enforcement across the enterprise AI stack.

CE Solution

- Creates audit-ready logs for every AI access and governance event automatically

- Applies GenAI risk scoring and security classification through native Netskope integration

- Supports governance alignment for GDPR, CCPA, SOC 2, and evolving AI regulations

- Surfaces duplicate copilots, renewal exposure, and uncontrolled AI spend across vendors

- Connects AI governance with procurement, spend management, and usage visibility in one control plane

Outcome

Organizations gain defensible AI governance with clear visibility into AI usage, access, risk, compliance exposure, and spend.

With 500+ direct integrations and $20B+ in SaaS spend managed, CloudEagle helps enterprises govern AI adoption with real-time visibility, usage control, and audit-ready oversight across both sanctioned and shadow AI environments.

Conclusion

The agentic AI examples in this blog are not arguments against deploying agents. They are a roadmap for deploying them responsibly.

The agentic AI risks that cause deployments to fail are not mysteries. They are specific, documented, and addressable with the right governance framework in place before deployment begins.

CloudEagle.ai gives enterprises the visibility, access governance, and audit-ready evidence layer to govern agentic AI deployments before they create the next cautionary case study. The enterprises that get this right will not be the ones that avoid agents. They will be the ones who governed them from the start.

Book a demo with CloudEagle.ai and see what your full AI and agent footprint looks like before your next deployment.

Frequently Asked Questions

1. What are real-world agentic AI examples?

Examples include the Air Canada chatbot, the Chevrolet dealership chatbot prompt injection incident, and enterprise AI coding agents modifying production systems without approval.

2. Why do agentic AI deployments fail?

Most agentic AI failures happen due to cascading errors, missing human oversight, excessive permissions, and prompt injection vulnerabilities.

3. What are the biggest risks of agentic AI in enterprise?

Key risks include unauthorized actions, prompt injection, data exposure, cascading workflow failures, and lack of governance controls.

4. How do you govern AI agents in production?

Enterprises govern agentic AI through human approval checkpoints, least-privilege access, workflow monitoring, red-team testing, and AI governance policies.

5. What are common AI agent failure cases in enterprise environments?

Common AI agent failure cases include hallucinated outputs, unauthorized system changes, prompt injection attacks, excessive access abuse, and cascading workflow failures across connected systems.

%201.svg)

.avif)

.avif)

.avif)

.png)