HIPAA Compliance Checklist for 2025

Your finance team is not waiting for IT approval. They are already using AI.

23% of employees have shared financial statements or sales data with unauthorized AI tools, according to BlackFog's 2026 research. In financial services, that is not just a security problem. It is a regulatory one.

Shadow AI in financial services is happening right now, in your forecasting models, your reporting workflows, and your analysts' browser tabs. Here is what it looks like and what to do about it.

TL;DR

- Over 80% of workers use unapproved AI tools at work. In financial services, the data they share makes this far more dangerous.

- Shadow AI in financial services creates exposure across SEC, FINRA, MiFID II, and Basel III requirements without anyone realizing it

- Finance teams adopt unmanaged AI tools faster than IT or compliance teams can respond

- Standard IT controls like SSO and DLP miss personal account AI usage entirely

- CloudEagle.ai discovers every AI tool running across your finance stack, including the ones nobody approved

1. Shadow AI in Financial Services Is Not a Future Problem. It Is Happening Right Now

Shadow AI is not a new concept. What is new is the data flowing through it.

When a finance analyst pastes a cash flow model into ChatGPT or uses an unapproved AI tool to summarize an earnings report, that data leaves your environment. It goes to a third-party server. It may be used to train a model. And you have no record of it.

80% of Employees Use Unapproved AI. In Finance, the Stakes Are Much Higher

Over 80% of workers globally use unapproved AI tools at work, per UpGuard's 2025 report. In financial services, the sensitivity of the data involved makes that number alarming.

Finance teams are not pasting holiday schedules into AI tools. They are pasting:

- Quarterly earnings data before public disclosure

- Client portfolio details and investment strategies

- Loan approval models and credit risk assessments

- Internal forecasts and M&A projections

A shadow AI data breach costs an average of $670,000 more than a standard breach, according to IBM. In financial services, add regulatory fines and audit liability on top of that.

Why Finance Teams Adopt Shadow AI Faster Than Governance Teams Can Respond

The answer is simple. AI makes their work faster, and nobody told them not to use it.

60% of employees say using unsanctioned AI tools is worth the security risk if it helps them meet deadlines, per BlackFog research. Among C-suite and senior leaders, that number jumps to 69%.

Finance leaders are not reckless. They are under pressure to move faster with leaner teams. The gap is not one of intent. It is one of awareness and governance.

2. What Shadow AI Actually Looks Like Inside a Finance Team

It is rarely dramatic. It looks like productivity.

A financial analyst uses an AI summarizer to process a 200-page vendor contract. A treasury manager pastes FX exposure data into a public LLM to get faster scenario analysis. A reporting team uses an AI-embedded SaaS tool that finance adopted without telling IT or compliance.

None of these feels like a security incident. All of them are.

Unapproved AI in Finance Workflows

The most common shadow AI scenarios in finance teams:

Every one of these involves data that regulators care about. None are typically flagged by standard procurement or security reviews.

Sensitive Data in Public LLMs

This is the most common and most dangerous pattern.

An analyst gets a question from leadership that needs a fast answer. They paste the relevant financial data into ChatGPT or a similar tool and get a response in seconds. It works. They do it again.

What they do not realize is that the data may be retained, used for model training, or accessible to third parties, depending on the tool's terms of service. And there is no audit trail anywhere in your organization showing it happened.

AI Features in Unsanctioned SaaS

Finance teams subscribe to SaaS tools for budgeting, planning, or reporting. Somewhere in the last 12 months, those tools added AI features. The features are on by default. Nobody reviewed the updated data processing terms. Data is now flowing to an AI infrastructure that was never part of the original security review.

86% of organizations lack visibility into how data flows to and from AI tools, per the 2025 State of Shadow AI Report. Finance departments are one of the primary sources of that invisible flow.

Before diving into specific risks, it helps to understand how widespread this actually is and what other compliance teams are doing about it.

📖 Worth a Read: Shadow AI is hiding inside most enterprise environments, including yours. Here is how to find every AI tool your organization is running before it becomes a compliance problem. 👉 How to Discover Claude Licenses in Your Organization

3. The Specific Risks Shadow AI Creates in Financial Services

This is where generative AI compliance exposure in finance gets real.

Third-Party Data Exposure

When financial data enters unapproved AI tools, you lose control of it.

You do not know where it is stored, how long it is retained, or who can access it. For pre-earnings data, client information, or strategic plans, this creates real insider trading and market manipulation risk.

Where orphaned identities make this worse:

When employees leave or change roles but retain access to AI tools, those accounts become orphaned identities.

These identities can still:

- Access sensitive financial data

- Interact with AI systems using historical permissions

- Operate outside active supervision

Regulatory Compliance Risk

AI risk in finance carries a regulatory dimension that most industries do not face:

Shadow AI creates ungoverned access to AI tools and data, bypassing supervision and policy controls.

None of these regulators accepts "we did not know employees were using that tool" as a defense.

Audit Gaps and Traceability

Auditors ask one question: how did you get this number?

If AI was used without approval or documentation, there is no audit trail. Outputs that cannot be explained or reproduced are increasingly flagged as governance failures.

Unvalidated AI Model Risk

Using AI for credit, risk, or investment decisions introduces unassessed model risk.

Unvalidated models can produce biased or inconsistent outputs. When those outputs drive financial decisions, the liability sits with the organization, not the vendor.

4. Why Standard IT Controls Do Not Catch Shadow AI in Finance

Most IT security stacks were not built for this problem.

AI Features That Slip Through Reviews

When a SaaS vendor adds an AI feature to an existing product, it does not trigger a new procurement review. The tool is already approved. The new AI capability just appears in the interface.

IT has no visibility. Security has no alert. Compliance has no record. And data is flowing to an AI infrastructure that was never assessed.

Why SSO and DLP Miss It

SSO controls access to corporate systems, not what employees do outside them.

DLP monitors known data channels, not data copied into a personal browser and pasted into public AI tools. That is where most shadow AI activity happens.

In fact, 47% of GenAI users access these tools through personal, unmonitored accounts, making them largely invisible to IT and security teams.

What IT Sees vs What Finance Does

Only 12% of companies can detect all shadow AI usage.

The CISO sees a clean, approved stack. On paper, everything looks under control.

But finance teams are often working in parallel, using AI tools that never went through IT, security, or compliance.

That disconnect creates a blind spot. And that blind spot is exactly where shadow AI risks build up.

5. How to Govern Shadow AI in Financial Services Without Blocking Innovation

The goal is not to ban AI. The goal is to see it, assess it, and control it.

1. Build a Complete AI Tool Inventory Across Every Finance Function

Start with discovery. You cannot govern what you cannot see.

- Survey finance teams on what AI tools they use daily

- Scan SSO logs, expense reports, and browser activity for AI tool usage

- Pull expense and card data for charges from Anthropic, OpenAI, and other AI vendors

- Identify AI features embedded in existing approved SaaS tools
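As a rough illustration of the expense-data step above, the sketch below filters a list of expense records for merchants that look like AI vendors. The `Expense` record, the `AI_VENDOR_KEYWORDS` list, and the sample rows are all hypothetical; a real export from an expense system will have different fields and will need a fuller vendor list.

```python
from dataclasses import dataclass

# Hypothetical keyword list; extend it with the AI vendors relevant to your stack.
AI_VENDOR_KEYWORDS = ("openai", "anthropic", "chatgpt", "claude")


@dataclass(frozen=True)
class Expense:
    """Minimal stand-in for one row of an expense or card export (assumed shape)."""
    employee: str
    merchant: str
    amount: float


def find_ai_charges(expenses):
    """Return the expenses whose merchant name contains an AI vendor keyword."""
    hits = []
    for expense in expenses:
        merchant = expense.merchant.lower()
        if any(keyword in merchant for keyword in AI_VENDOR_KEYWORDS):
            hits.append(expense)
    return hits


# Made-up sample rows for illustration only.
sample = [
    Expense("analyst_a", "OPENAI *CHATGPT SUBSCR", 20.00),
    Expense("analyst_b", "Office Supplies Co", 54.10),
    Expense("treasury_c", "Anthropic PBC", 18.00),
]

ai_charges = find_ai_charges(sample)
for charge in ai_charges:
    print(charge.employee, charge.merchant, charge.amount)
```

Substring matching like this will produce some false positives and miss vendors that bill under a parent company name, so it is best treated as a first pass that feeds a manual review rather than a complete inventory.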

2. Classify AI Tools by Data Sensitivity and Regulatory Risk

Not all shadow AI is equal:
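One possible way to express such a classification in code is sketched below. The three tiers, the `SENSITIVE_CATEGORIES` set, and the scoring rule are assumptions made for illustration, not a regulatory standard; the category names simply mirror the off-limits data types named elsewhere in this article.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical labels for data categories the article treats as off limits.
SENSITIVE_CATEGORIES = {
    "pre_earnings",
    "client_details",
    "credit_models",
    "mna_projections",
}


def classify_tool(data_categories, approved):
    """Assign a risk tier from two inputs: the data a tool touches and its approval status.

    Assumed rule: sensitive data in an unapproved tool is HIGH; either factor
    alone is MEDIUM; an approved tool with no sensitive data is LOW.
    """
    touches_sensitive = bool(set(data_categories) & SENSITIVE_CATEGORIES)
    if touches_sensitive and not approved:
        return Risk.HIGH
    if touches_sensitive or not approved:
        return Risk.MEDIUM
    return Risk.LOW


print(classify_tool({"pre_earnings"}, approved=False))
print(classify_tool({"client_details"}, approved=True))
print(classify_tool({"meeting_notes"}, approved=True))
```

A real scheme would likely add a regulatory dimension (for example, which rules apply to the data involved), but even a two-factor rule like this gives reviewers a consistent starting order for triage.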

3. Create an Approved AI Tool List With Sanctioned Alternatives

Finance teams use shadow AI because they do not have approved alternatives that work as well. Give them options. Build a list of approved AI tools for common finance tasks and communicate it clearly.

4. Deploy Continuous Monitoring for Data Flowing to External AI Platforms

Point-in-time audits are not enough. Shadow AI usage in financial services grows between reviews. Deploy monitoring that surfaces new AI tool usage as it happens, not six months later.
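The core of continuous monitoring can be pictured as a repeated comparison between what was seen before and what is seen now. The minimal sketch below assumes the discovery step already yields a set of tool names per scan; the function name, the tool names, and the approved list are hypothetical.

```python
def detect_new_unapproved(previous_seen, current_seen, approved):
    """Return tools that appeared since the last scan and are not on the approved list."""
    newly_seen = set(current_seen) - set(previous_seen)
    return sorted(newly_seen - set(approved))


# Made-up snapshots for illustration only.
last_scan = {"tool_a", "tool_b"}
this_scan = {"tool_a", "tool_b", "tool_c", "tool_d"}
approved_tools = {"tool_a", "tool_c"}

alerts = detect_new_unapproved(last_scan, this_scan, approved_tools)
print(alerts)
```

Running this comparison on every scan, rather than once per audit cycle, is what turns a point-in-time inventory into monitoring: each new unapproved tool surfaces in the scan where it first appears.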

5. Define an AI Acceptable Use Policy That Finance Leadership Signs Off On

A policy that IT wrote and nobody in finance has read is not a control. The CFO and finance directors need to own this, communicate it to their teams, and be accountable for enforcement.

6. Train Finance Teams on What Data Must Never Enter Any AI Tool

Make it specific. Tell them exactly what data categories are off limits: pre-earnings information, client details, credit models, M&A projections. Give them real examples. Generic training does not change behavior. Specific guidance does.

The regulatory expectations around AI in financial services are evolving fast. This conversation is worth your time if you are building a governance program right now.

🎙️ Podcast: Optimizing Shadow IT Risks and Innovations in SaaS Management. Real talk on how enterprises are discovering and governing unsanctioned AI before it becomes a regulatory problem. 👉 Listen now

6. How CloudEagle.ai Helps Financial Services Teams Discover and Govern Shadow AI

Shadow AI governance starts with visibility. CloudEagle.ai provides it.

CloudEagle.ai is an AI-powered SaaS Management, Security, and Identity Governance platform that maps your complete AI footprint across SSO, browser signals, and financial data, surfacing shadow AI and AI tool sprawl before your next examiner does.

Discover Shadow AI Across the Organization

CloudEagle automatically discovers every AI tool in use, including the ones IT never approved.

It correlates signals across:

- browser activity

- Zscaler and CrowdStrike

- SSO systems

- finance and expense data

This surfaces:

- unapproved AI tools

- AI features inside SaaS applications

- personal accounts used for work

So you get a complete view of AI usage across the organization, not just what was formally approved.

See Who Is Using AI and Where

CloudEagle gives clear visibility into:

- Which teams are using AI tools

- Which users are accessing them

- How adoption is spreading across the organization

This is important because shadow AI risk is tied to who is using the tool and what workflows it touches, not just the tool itself.

Identify Risky AI Usage

CloudEagle helps you focus on the AI tools that matter.

- Identify unapproved AI tools in active use

- Highlight high-risk applications

- Detect where sensitive data may be exposed

This allows teams to move from a list of tools to clear risk visibility.

Enforce AI Usage Policies in Real Time

CloudEagle enables real-time AI usage control.

- Maintain a centralized list of approved AI tools

- Enforce policies at the point of access

- Monitor usage continuously across teams

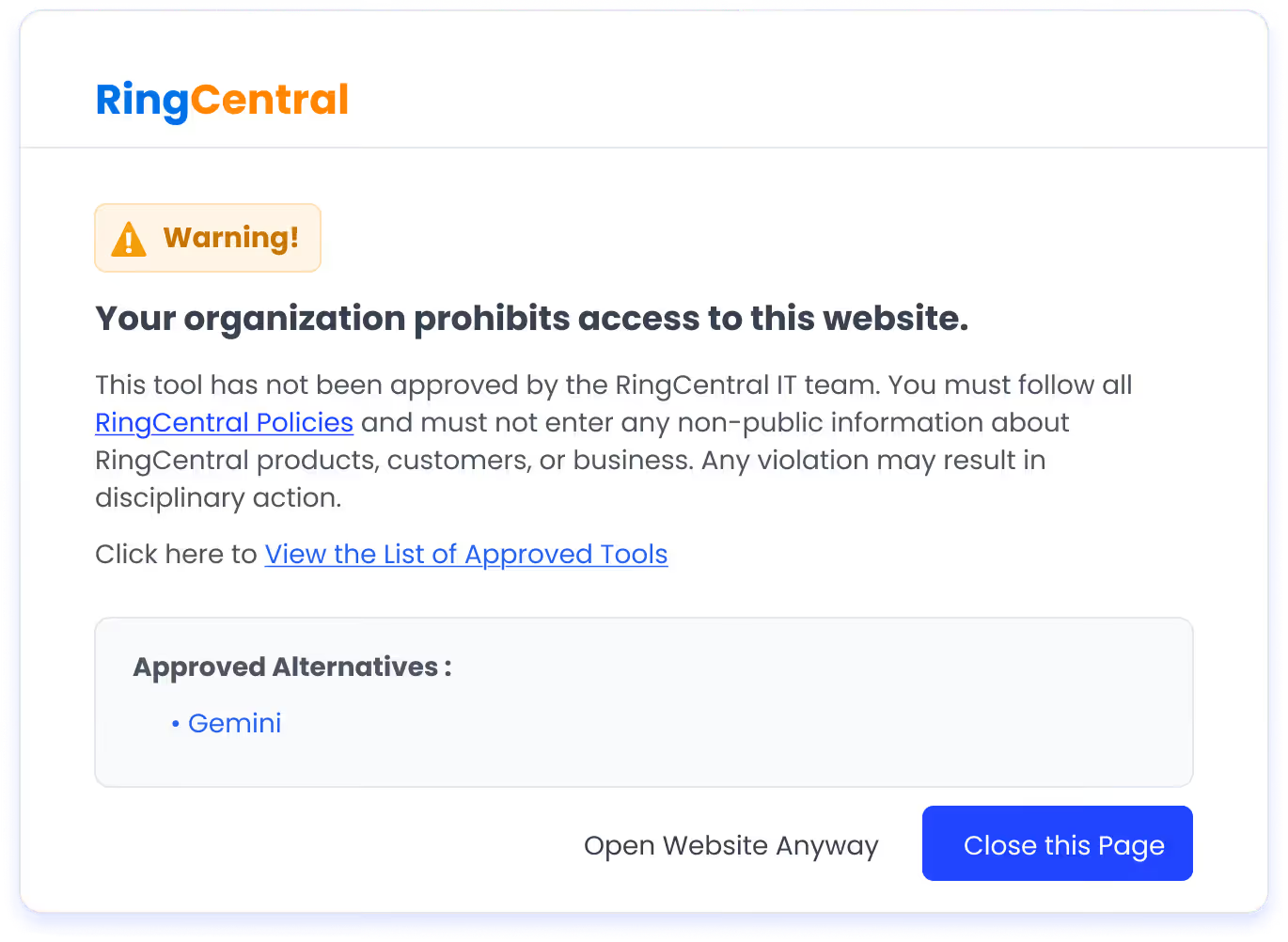

Redirect Unapproved AI Usage with Flash Pages

When an employee tries to access an unapproved AI tool:

- A real-time flash page is triggered

- The user is informed of the policy

- They are redirected to an approved tool

This helps control shadow AI without blocking productivity.

Continuously Monitor and Stay Audit-Ready

CloudEagle continuously tracks AI usage across the organization.

- New shadow AI tools are detected immediately

- Usage is monitored over time

- Every interaction is logged and audit-ready

This ensures teams can demonstrate control, not just visibility.

Final Thoughts

Shadow AI in financial services is not a hypothetical risk. It is running inside your organization right now, touching data that regulators care about and leaving no audit trail.

The good news is that governing generative AI in finance does not mean blocking AI entirely. It means building visibility, creating fast-track approval processes, and giving finance teams sanctioned alternatives.

CloudEagle.ai gives financial services teams the discovery, classification, and continuous monitoring layer needed to govern AI tool sprawl before it becomes a regulatory finding.

If you are ready to see exactly what AI is running inside your finance function, book a demo and find out before your next examiner does.

Frequently Asked Questions

1. What is the best AI for financial services?

The best AI for financial services depends on use cases like AI risk in finance, fraud detection, and generative AI compliance in finance, rather than a single tool.

2. Which finance jobs will survive AI?

Roles focused on AI risk in finance, compliance, and client relationships will survive, especially in environments dealing with unmanaged AI in financial services.

3. What is the 30% rule in AI?

The 30% rule suggests AI automation in finance can handle about 30% of tasks, increasing exposure to shadow AI in financial services if not governed.

4. What are the 4 types of AI?

The four commonly cited types are reactive machines, limited memory, theory of mind, and self-aware AI. The generative AI tools that raise compliance questions in finance today fall into the limited-memory category.

5. What are the 7 types of risk in banking?

Key risks include credit, market, operational, liquidity, compliance, reputational, and strategic risk, all amplified by AI risk in finance and shadow AI.

6. What is the impact of AI on finance?

AI drives automation and efficiency, but also introduces shadow AI, unmanaged AI in financial services, and the need for strong shadow AI governance.
