CISO Responsibilities in an AI-First Enterprise

AI has entered the enterprise. And nobody asked the CISO's permission.

Tools got embedded into existing SaaS products. Engineers started using AI to write code. Finance teams started using it to draft reports.

Most of it happened faster than any governance policy could keep up with.

That's the environment CISOs are operating in now. The question isn't whether AI changes the role. It's whether the CISO steps into that change intentionally or gets defined by it reactively.

TL;DR

- AI has added an entirely new governance mandate on top of an already stretched CISO workload: 96% of security leaders have been handed AI oversight with no additional resources.

- The biggest risks are internal. Two-thirds of CISOs experienced material data loss last year, with employees and unmanaged AI tools topping the causes.

- Shadow AI is the gap most organizations are failing to close. 71% of companies have AI tools touching core systems, but only 16% govern that access effectively.

- The Samsung case is the clearest warning: when employees use AI without guardrails, proprietary data gets exposed, even when no one meant any harm.

- The EU AI Act means AI governance is no longer just a security issue. It's a compliance issue, with fines of up to 7% of global annual turnover for the most serious violations.

- CISOs who govern AI proactively earn a strategic seat at the table. Those who stay reactive spend their time cleaning up incidents that other teams created.

1. The Job Description Nobody Updated

Ask a CISO what their core responsibilities were five years ago. You'll hear the same things: protect the perimeter, manage incidents, keep the board informed, stay compliant.

That job hasn't gone away. But a second job has been stacked on top of it.

According to Splunk's 2026 CISO Report, which surveyed 650 CISOs globally, 96% have now been assigned to manage their organization's AI governance and risk management, on top of existing mandates, with no corresponding increase in headcount or budget.

Proofpoint's 2025 Voice of the CISO report documented record burnout, citing exactly this: the AI mandate arriving without the resources to match it.

Vendors embedded AI features into existing tools. Employees adopted generative AI on their own. Engineering teams ran experiments without waiting for sign-off.

Together, they created systems acting on behalf of the organization, without the structures to govern what those systems were doing. That's what CISOs inherited. And most organizations still haven't built the infrastructure to manage it.

2. What Happens Without Governance: The Samsung Case

Before getting into what CISOs should do, it's worth understanding what happens when governance isn't there.

Case in point: Samsung's ChatGPT Incident (2023)

In early 2023, Samsung allowed engineers at its semiconductor division to use ChatGPT. Within 20 days, three separate data leaks had occurred:

- An engineer pasted proprietary source code into ChatGPT to fix a bug

- Another employee entered the confidential code for optimizing chip defect detection

- A third transcribed an internal meeting recording and fed it into ChatGPT to generate minutes

None of those employees was acting maliciously. They were trying to work faster.

But because ChatGPT's consumer service retained user input and could use it to train OpenAI's models, Samsung's trade secrets were now sitting in OpenAI's systems with no way to retrieve them. The root cause was the same every time: employees had a powerful AI tool with no training, no acceptable use policy, and no data classification guidelines.

When employees adopt AI without guardrails, the organization pays a price it didn't budget for. That gap is the CISO's responsibility to close.

3. The Real Scale of the Problem

The Samsung incident isn't an outlier. It's a preview of what happens at scale.

The 2026 CISO AI Risk Report by Cybersecurity Insiders and Saviynt, based on more than 200 CISOs and senior security leaders, found that 71% of organizations have AI tools with access to core systems, including Salesforce, SAP, and similar platforms. Only 16% say that access is governed effectively.

The same survey found:

- 83% of security leaders are concerned about AI access

- 47% have already observed agentic AI exhibit unintended or unauthorized behavior

- 1 in 3 organizations dealt with a security incident or near-miss involving AI in the past year

- Only 5% feel prepared to contain a compromised AI agent

Proofpoint's 2025 Voice of the CISO report, drawing on 1,600 CISOs across 16 countries, found that two-thirds experienced material data loss in the past year, up from 46% in 2024. In 92% of those cases, departing employees played a role.

Shadow AI compounds this further.

According to a 2026 Netskope report, 47% of generative AI platform users access those tools through personal accounts that their companies aren't overseeing. That means nearly half of enterprise AI users are working entirely outside the CISO's visibility.

The gap between awareness and actual governance is significant. CISOs know the risks. Most organizations just haven't built the infrastructure to close them.

4. CISO Responsibilities in an AI-First Enterprise: What You Must Own

A. Why CISOs Must Lead AI Governance:

If the CISO doesn't build the AI governance framework, someone else will. Legal will. Procurement will. Department heads will each have different risk tolerances and no security visibility. That's already happening. Shadow AI is the direct result.

Governance means something concrete here. It means defining:

- Who approves AI tools before they're deployed

- What data classifications can flow into which systems

- How vendor-embedded AI features get evaluated before procurement

- How usage gets monitored after rollout, not just at onboarding
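To make the data-flow rule concrete, here is a minimal Python sketch of a classification check. The four classification levels, the tier names, and the ceilings in `MAX_CLASS_BY_TIER` are hypothetical placeholders, not a standard; substitute your organization's own scheme.

```python
from enum import IntEnum


class DataClass(IntEnum):
    """Hypothetical four-level classification scheme, least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical policy: the most sensitive class each AI system tier may receive.
# RESTRICTED (e.g. source code, chip designs) is allowed nowhere.
MAX_CLASS_BY_TIER = {
    "enterprise_contracted": DataClass.CONFIDENTIAL,  # contract excludes data from training
    "approved_saas_feature": DataClass.INTERNAL,
    "public_consumer": DataClass.PUBLIC,
}


def may_send(data_class: DataClass, tier: str) -> bool:
    """Return True if data of this class may flow into an AI system of this tier.

    Tiers missing from the policy are denied by default.
    """
    ceiling = MAX_CLASS_BY_TIER.get(tier)
    if ceiling is None:
        return False
    return data_class <= ceiling


if __name__ == "__main__":
    print(may_send(DataClass.CONFIDENTIAL, "public_consumer"))    # False
    print(may_send(DataClass.INTERNAL, "enterprise_contracted"))  # True
```

Denying unknown tiers by default means a newly discovered tool can't receive sensitive data until someone has classified it.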

The NIST AI RMF and ISO/IEC 42001 provide workable foundations.

A cross-functional committee with named owners from legal, compliance, IT, and business units is what makes those frameworks operational rather than theoretical.

Who owns this is genuinely contested.

Acuvity's 2025 research found CIOs control AI security decisions in 29% of organizations; CISOs rank fourth at 14.5%. That gap exists because no one claimed the mandate early.

CISOs need to be in the AI strategy conversation before decisions get made, not brought in afterward to audit them. According to Heidrick & Struggles' 2025 CISO survey, the share of CISOs reporting directly to the CEO has tripled in one year. The window to position for that is now.

Pro tip: Map every AI governance decision to a named owner. The biggest failure mode isn't a wrong decision; it's one that never gets made because no one's accountable.

B. How to Govern Shadow AI in the Enterprise

Shadow IT meant unauthorized software: the risk was mostly operational. Shadow AI means employees using systems that process, retain, and potentially train on sensitive organizational data, with no IT or security record of it.

There are two sides.

The obvious: employees accessing free AI tools through personal accounts. 68% do, per Menlo Security 2025, and that data may be training a public model used by competitors.

The hidden: AI features embedded inside sanctioned tools.

Take Microsoft 365 Copilot: AI now sits across Word, Teams, Outlook, and SharePoint, often without employees realizing it's analyzing their data. IBM's 2025 Cost of a Data Breach report put shadow AI incidents at an average of $650,000 each.

Pro tip: Categorize every AI tool in use into three buckets: Approved, Limited-Use, and Prohibited. Give employees a clear path to request access. Make the official option easy, and most people will use it.
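A three-bucket registry can be as simple as the sketch below. The tool names, team names, and the `Registry` class are hypothetical; the point is that an unknown tool produces an access request rather than a silent block, which is what keeps the official path easy.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    APPROVED = "approved"
    LIMITED_USE = "limited_use"
    PROHIBITED = "prohibited"


@dataclass
class AITool:
    name: str
    status: Status
    allowed_teams: set[str] = field(default_factory=set)  # only consulted for LIMITED_USE


@dataclass
class Registry:
    """Hypothetical in-memory registry; a real one would live in your SaaS inventory."""
    tools: dict[str, AITool] = field(default_factory=dict)
    pending_requests: list[tuple[str, str]] = field(default_factory=list)

    def add(self, tool: AITool) -> None:
        self.tools[tool.name] = tool

    def check(self, tool_name: str, team: str) -> str:
        tool = self.tools.get(tool_name)
        if tool is None:
            # Unknown tool: queue an access request instead of silently blocking
            self.pending_requests.append((tool_name, team))
            return "request_submitted"
        if tool.status is Status.APPROVED:
            return "allow"
        if tool.status is Status.LIMITED_USE and team in tool.allowed_teams:
            return "allow"
        return "deny"


registry = Registry()
registry.add(AITool("meeting-summarizer", Status.APPROVED))
registry.add(AITool("code-assistant", Status.LIMITED_USE, {"engineering"}))
registry.add(AITool("free-chatbot", Status.PROHIBITED))
print(registry.check("code-assistant", "finance"))  # deny
```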

C. Non-Human Identity Governance for AI Agents and Agentic Systems

Service accounts, API keys, OAuth tokens, and agentic systems all interact with enterprise systems, often on permissions nobody has reviewed since initial setup.

The 2026 CISO AI Risk Report found that most AI tools were never provisioned, certified, or deprovisioned through structured workflows. They just got access. And kept it.

Apply the same lifecycle controls to AI identities as to employees. Automate the full joiner-mover-leaver process across every app: provisioning tied to specific use cases, regular access reviews, automatic deprovisioning when the use case ends.

A compromised agentic AI with permanent admin rights is extremely difficult to contain.
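A minimal sketch of those joiner-mover-leaver rules for a single AI identity, assuming a 90-day review cadence and that each identity records an owner, a use case, and an end date. All field names and the cadence are hypothetical, not any product's schema.

```python
from dataclasses import dataclass
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=90)  # assumed certification cadence


@dataclass
class AIIdentity:
    """Hypothetical record for a non-human identity such as an agent or API key."""
    name: str
    owner: str              # named human accountable for this identity
    use_case: str           # joiner: access is granted for a specific purpose
    scopes: frozenset[str]
    use_case_end: date
    last_reviewed: date


def move(identity: AIIdentity, use_case: str, scopes: set[str],
         use_case_end: date, today: date) -> None:
    """Mover: replace (not extend) scopes and restart the review clock."""
    identity.use_case = use_case
    identity.scopes = frozenset(scopes)
    identity.use_case_end = use_case_end
    identity.last_reviewed = today


def lifecycle_actions(identity: AIIdentity, today: date) -> list[str]:
    """Return the lifecycle actions this identity needs as of `today`."""
    if today >= identity.use_case_end:
        return ["deprovision"]  # leaver: the use case has ended
    actions = []
    if today - identity.last_reviewed >= REVIEW_INTERVAL:
        actions.append("access_review")  # certification is overdue
    if "admin" in identity.scopes:
        actions.append("flag_standing_admin")
    return actions


agent = AIIdentity("invoice-agent", "finance-ops", "invoice triage",
                   frozenset({"erp:read"}), date(2025, 12, 31), date(2025, 1, 1))
print(lifecycle_actions(agent, date(2025, 6, 1)))  # ['access_review']
```

The mover step replaces scopes rather than adding to them; accumulating permissions across use cases is how agents end up with the standing admin rights described above.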

D. Translating AI Risk Into Business Language, On a Schedule, Not in a Crisis

The CISO's credibility with the board depends on one thing: showing up with context before the incident.

Proofpoint's 2025 Voice of the CISO report found 66% of CISOs would consider paying a ransom to prevent data leaks. That's what happens when risk isn't communicated upstream early enough.

Make AI risk a recurring board agenda item. Lead with financial framing: "Industry-wide, 71% of organizations have AI tools touching core systems, and only 16% govern that access effectively. Here's how much of our own core-system access is ungoverned, and here's what a breach costs versus what governance costs now."

At Turner Construction, the CFO and CISO meet twice a month to review risks and board readiness. That rhythm is how "no budget" becomes "show me the business case."
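One common way to put "what a breach costs versus what governance costs" into numbers is annualized loss expectancy (ALE = single loss expectancy × annualized rate of occurrence), compared before and after a control. The sketch below uses that textbook formula plus a simple return-on-security-investment ratio, with entirely hypothetical inputs; none of the figures come from the reports cited here.

```python
def ale(single_loss: float, annual_rate: float) -> float:
    """Annualized loss expectancy: cost of one incident times expected incidents per year."""
    return single_loss * annual_rate


def rosi(ale_before: float, ale_after: float, control_cost: float) -> float:
    """Return on security investment: net risk reduction relative to the control's cost."""
    return (ale_before - ale_after - control_cost) / control_cost


# Hypothetical inputs for illustration only; replace with your own estimates.
breach_cost = 4_000_000     # assumed cost of one AI-related data breach
rate_without = 0.30         # assumed yearly likelihood with ungoverned AI access
rate_with = 0.10            # assumed yearly likelihood after governance controls
governance_cost = 500_000   # assumed annual cost of the governance program

before = ale(breach_cost, rate_without)
after = ale(breach_cost, rate_with)
print(f"Expected annual loss without governance: ${before:,.0f}")  # $1,200,000
print(f"Expected annual loss with governance:    ${after:,.0f}")   # $400,000
print(f"ROSI: {rosi(before, after, governance_cost):.0%}")         # 60%
```

The likelihood estimates carry most of the uncertainty, so presenting a range of rates tends to hold up better in a CFO conversation than a single point estimate.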

E. Securing the Model, Not Just the Infrastructure Around It

Most enterprise security programs were built to protect infrastructure.

AI introduces risk inside the application layer that most programs weren't built for: prompt injection, data poisoning, and model inversion, all documented in MITRE ATLAS.

CISOs don't need to become ML engineers.

Build four questions into every vendor evaluation:

- How is the model trained, and what data does it retain?

- What are the prompt guardrails?

- Is there an IR process for model failures?

- What security certifications does the AI component hold?

Beyond vendor evaluation, own AI red teaming as a recurring practice, not a one-off.
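Those four questions can double as a gate in the procurement workflow. A minimal sketch, assuming vendor answers are collected as a simple key-value form; the keys and the sample answers are hypothetical.

```python
# The four vendor questions, keyed for a hypothetical intake form
VENDOR_QUESTIONS = {
    "training_and_retention": "How is the model trained, and what data does it retain?",
    "prompt_guardrails": "What are the prompt guardrails?",
    "model_ir_process": "Is there an IR process for model failures?",
    "certifications": "What security certifications does the AI component hold?",
}


def open_items(answers: dict[str, str]) -> list[str]:
    """Return the questions the vendor left missing or blank, in checklist order."""
    return [
        question
        for key, question in VENDOR_QUESTIONS.items()
        if not answers.get(key, "").strip()
    ]


def ready_for_approval(answers: dict[str, str]) -> bool:
    """Block procurement sign-off until every question has an answer."""
    return not open_items(answers)


answers = {
    "training_and_retention": "Customer inputs are not used for training",
    "prompt_guardrails": "   ",
    "certifications": "SOC 2 Type II",
}
for question in open_items(answers):
    print("Open:", question)
```

This only checks that an answer exists; judging whether the answer is acceptable stays with the reviewer.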

On the defensive side, the 2025 CSO Security Priorities Study found 99% of security leaders have already seen benefits from AI-enabled tools. Use them.

AI handles volume and pattern recognition: threat detection, alert triage, anomaly detection. Humans handle judgment.

For a breakdown of leading platforms, see our top AI security platforms roundup.

5. CISO Guide to EU AI Act Compliance and Regulatory Obligations

CISOs operating in or selling into the EU now have a binding legal obligation on top of voluntary frameworks like the NIST AI RMF and ISO/IEC 42001: the EU AI Act.

What the EU AI Act requires:

- Classify AI systems by risk level (unacceptable, high, limited, minimal)

- Maintain documentation of how high-risk AI systems operate and make decisions

- Implement human oversight mechanisms for high-risk deployments

- Report serious incidents involving high-risk AI systems to regulators

The CISO's job: ensure deployments are classified correctly, documentation exists before regulators ask, and vendor contracts exclude your data from model training.
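As a rough illustration of how classification drives obligations, the sketch below maps each risk tier to the controls listed above and reports what is still missing. It is heavily simplified and not legal advice: the control names are hypothetical, and the limited-risk transparency entry comes from the Act's disclosure duties (for example, telling users they are interacting with an AI system), which the list above doesn't cover.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Simplified obligations per tier (hypothetical control names; not legal advice)
OBLIGATIONS = {
    RiskTier.HIGH: ["technical_documentation", "human_oversight", "incident_reporting"],
    RiskTier.LIMITED: ["transparency_notice"],
    RiskTier.MINIMAL: [],
}


def compliance_gaps(tier: RiskTier, controls_in_place: set[str]) -> list[str]:
    """List obligations for this tier that have no matching control yet."""
    if tier is RiskTier.UNACCEPTABLE:
        # Unacceptable-risk uses are prohibited outright; no control fixes that
        return ["prohibited: decommission"]
    return [item for item in OBLIGATIONS[tier] if item not in controls_in_place]


print(compliance_gaps(RiskTier.HIGH, {"human_oversight"}))
# ['technical_documentation', 'incident_reporting']
```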

For the full compliance picture, see our top compliance standards guide and SaaS compliance risk guide.

6. Actionable Checklist: Building an AI Risk Management Program

Here's what a functioning AI governance framework looks like in practice.

A. Build a Live AI Inventory:

You can't govern what you can't see. Map every AI tool in use, including tools employees adopted without formal approval. Classify each one: Approved, Limited-Use, or Prohibited.
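One starting point for discovery is egress or proxy logs. The sketch below flags watched generative AI domains that don't yet appear in the inventory, along with who is using them. The log format (`<user> <domain>` per line) and the domain watchlist are assumptions for illustration; real proxy or CASB exports will need their own parsing.

```python
from collections import defaultdict

# Illustrative watchlist only; maintain your own list of generative AI domains
KNOWN_AI_DOMAINS = {"chatgpt.com", "claude.ai", "gemini.google.com"}


def find_unclassified_ai(log_lines: list[str],
                         inventory: dict[str, str]) -> dict[str, set[str]]:
    """Map each watched AI domain missing from the inventory to the users who reached it.

    Each log line is assumed to be "<user> <domain>", whitespace-separated.
    """
    seen: dict[str, set[str]] = defaultdict(set)
    for line in log_lines:
        parts = line.split()
        if len(parts) < 2:
            continue  # skip malformed lines
        user, domain = parts[0], parts[1].lower()
        if domain in KNOWN_AI_DOMAINS and domain not in inventory:
            seen[domain].add(user)
    return dict(seen)


logs = ["alice chatgpt.com", "bob claude.ai", "alice chatgpt.com",
        "carol intranet.example.com", "malformed-line"]
inventory = {"claude.ai": "Approved"}  # domain -> Approved / Limited-Use / Prohibited
print(find_unclassified_ai(logs, inventory))  # {'chatgpt.com': {'alice'}}
```

Domain matching alone can't tell a corporate login from a personal one on the same service, so treat the output as a list of places to look rather than a verdict.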

B. Define Data Classification Rules for AI Inputs:

Samsung's engineers had no policy telling them what they couldn't share. An AI acceptable use policy needs to exist before the next employee opens a new tool.

C. Apply Identity Lifecycle Controls to AI Agents:

Provision, certify, and deprovision AI identities the same way you do human users. Apply machine identity governance to service accounts, API keys, and OAuth grants. See how automated JML workflows handle this across both SSO and non-SSO apps.

D. Establish a Cross-Functional AI Governance Committee:

Bring together Legal, Privacy, IT, and business owners. Define who approves AI tools, who owns which governance decisions, and the cadence for reviewing the evolving landscape.

E. Add AI Threat Scenarios to Incident Response Plans:

Standard IR playbooks weren't built for compromised agentic AI or prompt injection events. Build those scenarios in now. Red-team production AI at least annually.

F. Prepare for EU AI Act and Regulatory Obligations:

Know which AI deployments fall under high-risk classifications. Ensure documentation, human oversight mechanisms, and vendor data agreements are in place before regulators ask for them.

G. Set Up Regular AI Risk Reviews With CFO and Board:

Governance investment requires consistent communication, not crisis-mode asks. Use financial language: cost of a breach scenario versus cost of preventive controls.

H. Train Employees on AI Usage Policy:

Insiders are central to data loss: in Proofpoint's 2025 survey, departing employees played a role in 92% of material data loss incidents. An AI acceptable use policy paired with regular training is one of the cheapest controls available.

7. How CloudEagle Supports AI-Era CISO Oversight

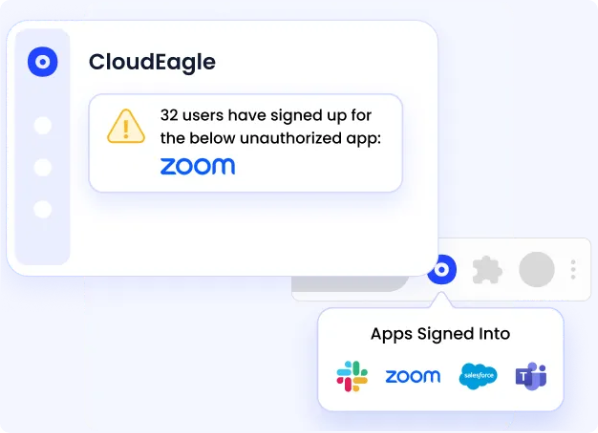

Governing AI in a large enterprise requires visibility that most security teams don't currently have. CloudEagle.ai gives CISOs the operational layer to see what's actually happening across their SaaS and AI tool stack, and act on it.

A. Surface Shadow AI Before It Becomes a Liability

CloudEagle.ai tracks AI-enabled applications across the organization, including tools employees adopted without formal approval.

CISOs can assess risk before it compounds and walk into CFO conversations with real data instead of estimates.

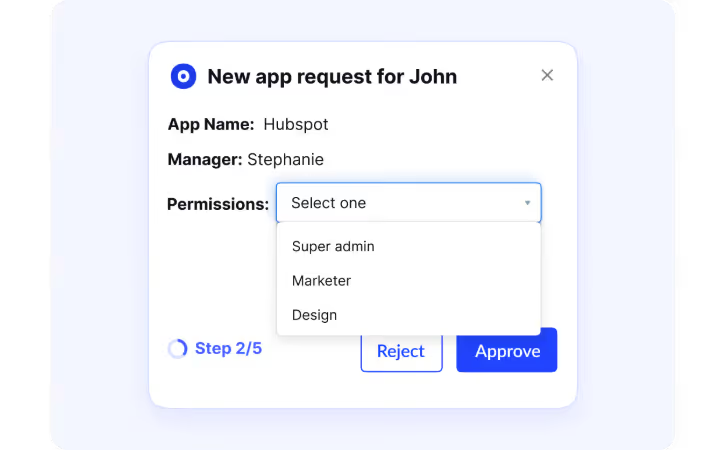

B. Govern Access by Role, Not by Historical Assignment

CloudEagle.ai maps SaaS and AI access by role, flags overprivileged accounts, and supports just-in-time provisioning for sensitive systems.

The result: less standing access, fewer dormant permissions, and a clean audit trail for compliance reporting.

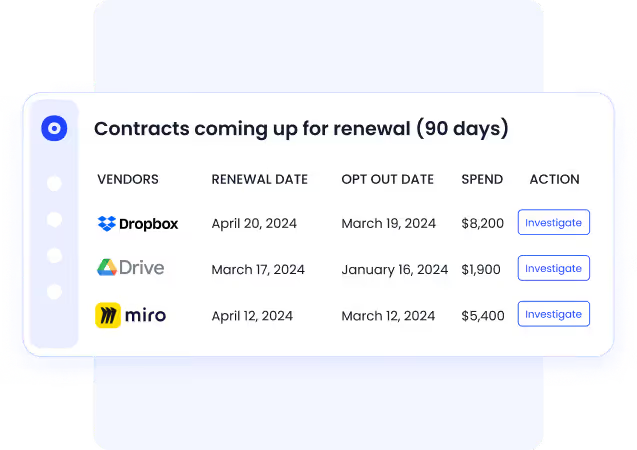

C. No More Renewal Surprises

CFOs hate late-stage renewal requests. CloudEagle prevents that with 30/60/90-day renewal alerts delivered via Slack or Teams, for every contract in the stack.

You come in with usage data to justify cutting, renegotiating, or renewing, instead of scrambling at the deadline.

D. Connect Spend, Access, and Risk in One Place

When CISOs need to justify AI governance investment to the CFO, CloudEagle provides the data to do it:

- Spend by tool, with active versus inactive usage breakdowns

- Access visibility that connects permissions to actual risk exposure

- Renewal workflows that surface surprises 90 days early

- Automated deprovisioning that eliminates license waste and access risk in one step

In an environment where 92% of data loss incidents involve departing employees, removing access automatically at offboarding isn't optional. It's a direct risk reduction measure.

8. The Strategic Opportunity Inside the Mandate

The CISO who walks into board conversations with a clear governance framework, a live AI inventory, and financial-language risk analysis isn't just managing downside.

They're earning a permanent seat at the table, and with CloudEagle, they have the data on spend, access, and renewals to back it up.

9. FAQs

1. Should the CISO or CIO own AI security?

Neither alone. Acuvity's 2025 research found CIOs lead in 29% of organizations, with CISOs fourth at 14.5%. In practice, CISOs own security guardrails, legal owns compliance, and business owners own usage decisions.

2. What are the 7 key components of cybersecurity?

The seven core components are network security, endpoint security, application security, identity and access management, data security, cloud security, and incident response.

In an AI-first enterprise, each of these now has an AI-specific dimension: governing model access, securing training data, and detecting AI-augmented threats require layering AI governance controls across all seven.

3. What are the main responsibilities of a CISO?

A CISO owns the organization's security strategy, risk management program, compliance posture, and incident response capability.

In 2026, that mandate has expanded to include AI governance: evaluating AI tools before deployment, governing how organizational data flows into AI systems, managing non-human identities, and translating AI risk into financial terms for board and CFO conversations.

4. Is CISO or CTO higher?

It depends on the organization. CTOs typically sit at the executive leadership level with direct P&L influence; CISOs have historically reported to the CIO or CTO. That's shifting.

Heidrick & Struggles' 2025 survey found the share of CISOs reporting directly to the CEO tripled in one year, reflecting how seriously boards are taking security and AI governance.

5. What are the 5 C’s in security?

The 5 C's are Change, Compliance, Cost, Continuity, and Coverage.

Together, they give security leaders a framework for evaluating program maturity: managing change without introducing new risk, meeting regulatory requirements, justifying security spend, maintaining operations through disruption, and ensuring no part of the environment falls outside the security program's visibility, including AI tools and non-human identities.
