HIPAA Compliance Checklist for 2025

Your security team just got asked to review the company's AI risk posture.

Sounds straightforward.

The list of AI tools your employees actually use doesn't exist. The copilot your sales team adopted three months ago wasn't approved by IT.

The model summarizing contracts pulls from a document store that wasn't scoped for that use case. And the AI agent someone spun up to automate procurement workflows? It's been triggering actions without human review for six weeks.

Nobody did anything wrong. The tools are genuinely useful. But nobody built guardrails either.

This is where most enterprise AI security conversations start in 2026, with a slow realization that AI has moved faster than the controls around it.

IBM's 2024 Cost of a Data Breach Report puts the average breach cost at $4.88 million, with security teams increasingly linking exposure to AI-driven workflows that were never built to enforce boundaries.

The problem with traditional security tools is that they were built for systems.

They don't inspect what a model does at inference time. They don't track what a copilot surfaces or what an agent executes. They don't govern who can prompt what, or flag when outputs cross a policy boundary.

That's what AI security platforms are built to do, and in 2026, the category has matured enough that the differences between platforms are meaningful.

This article breaks down what AI security actually requires, the four capabilities every platform needs to cover, and the ten tools worth evaluating this year.

TL;DR

What Is an AI Security Platform?

An AI Security Platform (AISP) is a centralized system that secures AI across its lifecycle, from data and models to prompts, agents, and outputs. It applies policies and guardrails to AI workflows to manage AI-native risks such as prompt injection, data leakage, unsafe agent actions, and model tampering.

AISPs protect both third-party AI services and internally built applications through continuous monitoring and enforcement.

Key aspects

- Unified control plane across AI data, models, APIs, and apps

- AI-native protections for prompts, agents, and outputs

- Broad coverage for external tools and custom AI

- Data security and governance with real-time visibility

Why AISPs are needed: As generative AI becomes embedded in core workflows, traditional security tools lack visibility into model behavior. AI Security Platforms extend security and governance across the full AI lifecycle, enabling safer adoption without slowing innovation.

Why Traditional Security Tools Fall Short for AI?

AI systems behave differently from traditional applications. They reason over data, produce variable outputs, and increasingly act autonomously. This breaks core assumptions behind legacy security tools built for static code, fixed logic, and predictable execution.

AI Applications Operate on a Different Model

AI development environments now connect directly to production data, credentials, and APIs. Training notebooks, MLOps platforms, and experimentation pipelines often hold highly sensitive information.

Many of these tools sit outside standard CI/CD flows, leaving gaps in AppSec and cloud security coverage.

Behavior Is Dynamic, Not Deterministic

AI does not behave the same way on every request. The same prompt can yield different outputs, and agents may take actions based on context rather than rules.

Static checks and traditional firewalls cannot reliably predict or constrain this behavior.

The AI Attack Surface Is Broader

Risk spans models, data, prompts, and infrastructure. External models and datasets introduce supply chain exposure. Training data can be poisoned.

Prompts can be manipulated. Retrieval pipelines can be influenced. Misconfigured notebooks and excessive permissions remain common.

Legacy Tools Lack AI Context

Most security tools do not understand prompts, inference logic, or model behavior. They inspect requests and code, not intent or outcomes.

This makes it difficult to distinguish normal AI usage from real risk.

Governance and Compliance Add Pressure

Regulations now require visibility and evidence across how AI systems are trained and used.

Traditional tools rarely show how decisions were made or whether controls were enforced at inference time.

Why this matters: As AI becomes part of core workflows, security must cover models, prompts, data, and behavior together. Without that context, traditional tools leave critical blind spots.

Top AI Security Platforms in 2026 (By Category)

AI Governance & Oversight

1. CloudEagle.ai: SaaS, AI, and Identity Governance Platform

CloudEagle.ai is a SaaS management, identity governance, SaaS security, and AI governance platform built to give organizations continuous visibility and control across their SaaS ecosystem.

It helps teams discover applications, monitor access, enforce least-privilege policies, and stay audit-ready without relying on spreadsheets, point-in-time reviews, or manual evidence collection.

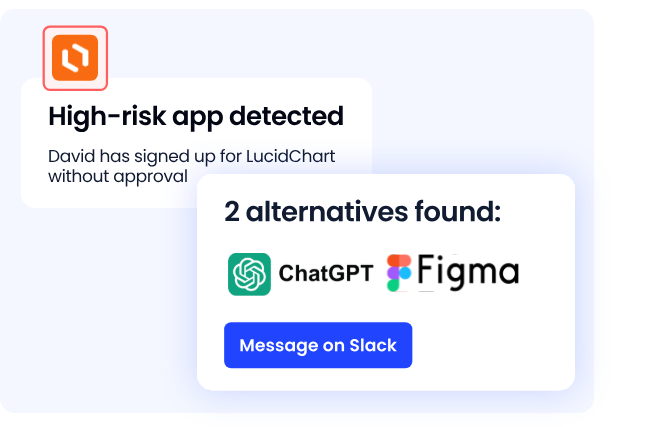

As AI adoption accelerates, CloudEagle.ai extends this foundation to AI governance and security. The platform detects shadow AI, tracks how AI tools are accessed and used, and reduces identity and data risk introduced by AI-driven applications.

Instead of treating AI as a separate problem, CloudEagle.ai governs AI as part of the broader SaaS, identity, and access landscape.

Key Features:

- Shadow AI and SaaS sprawl: Teams often don’t know which AI tools employees are using → CloudEagle.ai automatically discovers SaaS and AI apps using SSO, finance data, browser signals, and security logs, giving a complete inventory through SaaSMap.

- Uncontrolled AI usage: Employees adopt AI tools faster than policies can keep up → CloudEagle.ai enforces approved AI and SaaS usage, flags unapproved tools, and guides users toward safe, sanctioned options without blocking productivity.

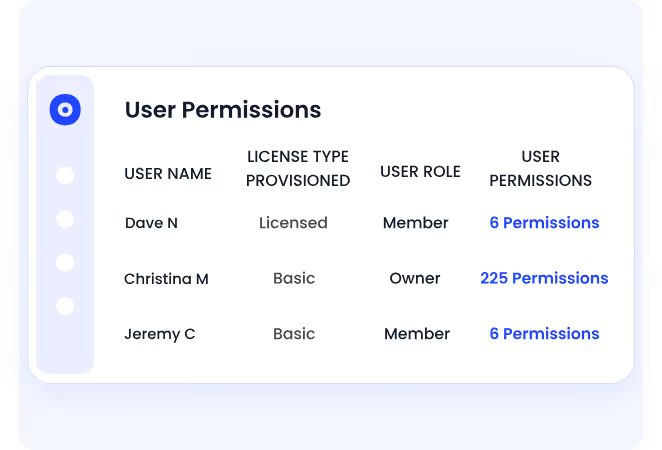

- Excessive and privileged access: AI amplifies the risk of over-permissioned identities → CloudEagle.ai continuously highlights privileged, risky, or misaligned access and supports guided cleanup workflows.

- Manual and unreliable access reviews: Quarterly reviews miss real risk → CloudEagle.ai automates access reviews with centralized visibility, manager-driven certifications, and instant flagging of high-risk or ex-employee accounts.

- Audit fatigue and compliance gaps: Evidence collection is slow and reactive → CloudEagle.ai captures audit evidence continuously and generates SOC 2 and access-control reports without manual effort.

- Data security and trust: Sensitive data exposure raises regulatory risk → CloudEagle.ai operates with no data retention, strong encryption, restricted support access, SSO authentication, and full audit trails, backed by GDPR, SOC 2 Type II, and ISO 27001 compliance.

Pros

- Unified visibility across SaaS and AI usage

- Strong focus on identity, access, and governance risk

- Continuous compliance instead of point-in-time audits

- Enterprise-ready security posture with recognized certifications

- Low-friction deployment without agents

Use Cases:

- Governing enterprise AI adoption and preventing shadow AI

- Reducing identity and privileged access risk tied to AI-driven apps

- Automating SaaS access reviews for SOC 2 and internal audits

- Maintaining a real-time inventory of SaaS and AI tools

- Strengthening SaaS security posture without slowing teams down

CloudEagle.ai is best suited for organizations that want to govern AI and SaaS usage at scale, control access and risk, and stay audit-ready as AI adoption accelerates.

2. Reco: Dynamic SaaS and AI Security Platform

Reco is a SaaS and AI security platform focused on dynamic risk detection across applications, identities, and configurations.

It helps security teams close visibility gaps caused by SaaS sprawl, shadow AI, and over-permissioned users through continuous monitoring and analytics.

Key features

- Full visibility into SaaS, GenAI tools, shadow apps, and AI agents

- Continuous posture management for SaaS configurations and permissions

- Detection of risky users, over-privileged access, and compromised accounts

- AI-driven analytics using a knowledge graph to correlate behavior and exposure

- Continuous compliance monitoring aligned with industry standards

Pros

- Strong visibility across large, multi-SaaS environments

- Easy deployment with an intuitive dashboard

- High-quality alerts that integrate well with SIEM and SOC workflows

Cons

- Remediation capabilities are more limited compared to detection

- May require tuning to reduce alert noise in complex environments

- Less effective for highly niche or custom SaaS applications

Use case: Reco is well-suited for organizations struggling with SaaS sprawl, shadow AI usage, and excessive permissions, especially where security teams need continuous insight into SaaS posture, risky behavior, and compliance gaps across a growing application ecosystem.

3. Cranium: AI Security and Governance Platform

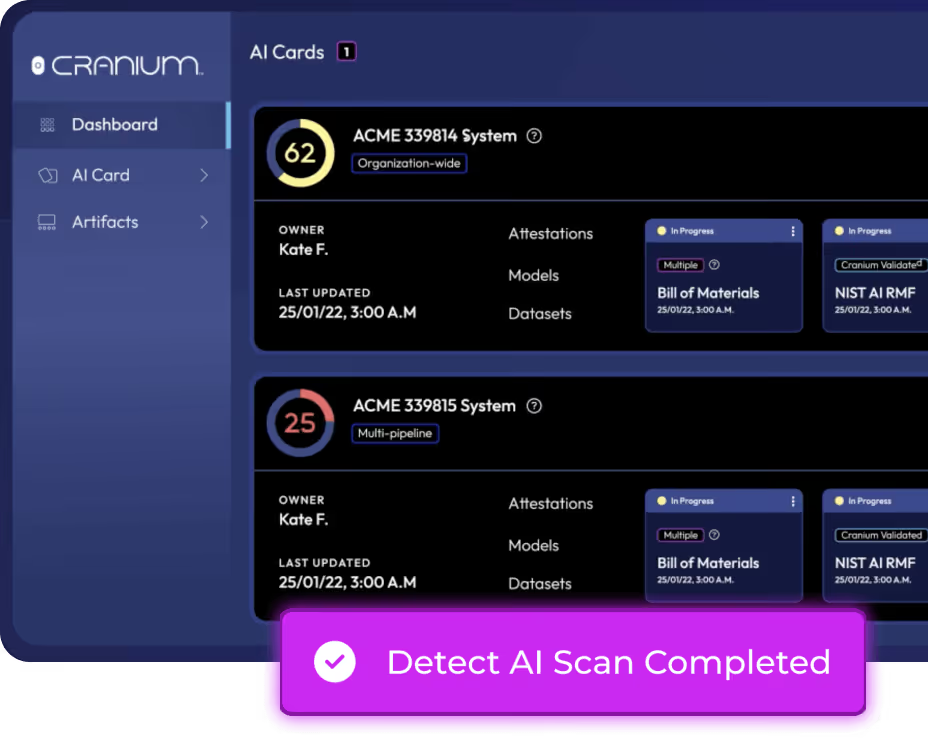

Cranium is an enterprise AI security and governance platform that helps organizations discover, monitor, and govern AI systems across the full AI supply chain. It combines AI security, compliance, and third-party risk management in a single system of record.

Key features

- Enterprise-wide discovery and inventory of AI models, data, infrastructure, and vendors

- Continuous monitoring for AI vulnerabilities, threats, and misconfigurations

- Model testing and threat simulation to identify weaknesses before and after deployment

- Built-in compliance validation and audit-ready reporting for AI governance

- Third-party AI risk visibility across external models and services

Pros

- Strong focus on end-to-end AI governance and lifecycle coverage

- Designed for large, complex enterprise AI environments

- Recognized by industry analysts and cybersecurity organizations

Cons

- Heavier governance focus may be more than early-stage teams need

- Less emphasis on real-time prompt-level or inference-time controls

- Implementation may require dedicated ownership across security and compliance teams

Use case: Cranium is well-suited for enterprises with multiple AI models, vendors, and embedded AI systems that need a centralized platform to manage AI security posture, validate compliance, and govern AI risk across internal and third-party AI supply chains.

LLM Runtime & Prompt Security

4. Lakera Guard: LLM Runtime and Prompt Security Platform

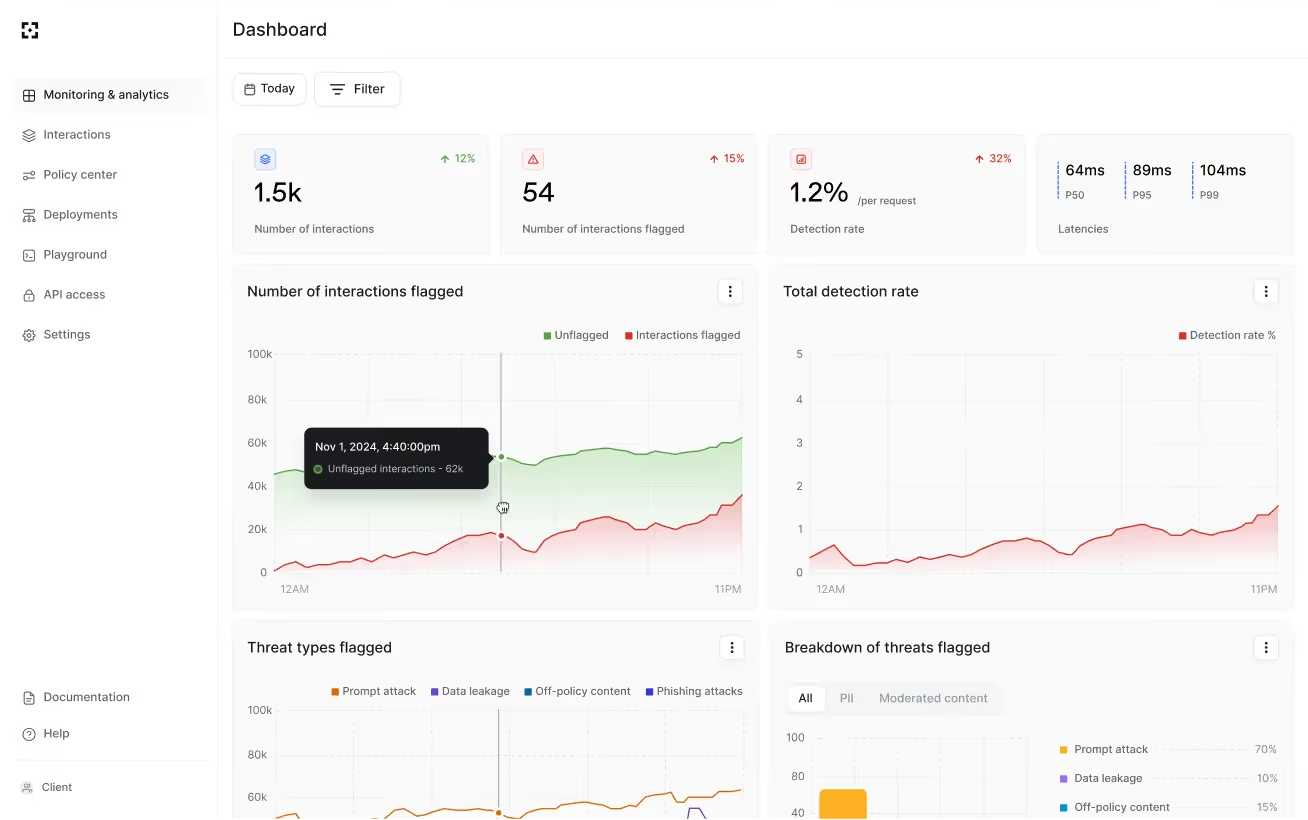

Lakera Guard is a runtime security platform designed to protect generative AI and LLM applications from prompt injection, data leakage, and harmful outputs. It adds guardrails at inference time, allowing teams to deploy GenAI applications with greater confidence.

Key features

- Real-time detection and blocking of prompt injection and jailbreak attempts

- Protection against sensitive data leakage in prompts and responses

- Harmful and unsafe content detection at inference time

- Lightweight integration with LLM applications using minimal code changes

- Threat detection informed by proprietary research and real-world LLM usage data

Pros

- Strong focus on inference-time and prompt-level protection

- Simple integration with existing LLM workflows

- Effective for securing customer-facing GenAI applications

Cons

- Limited customization for advanced or highly specific policies

- Pricing may be high for smaller teams or early-stage deployments

- Narrower scope compared to full AI governance or posture platforms

Use case: Lakera Guard is well-suited for organizations running LLM-powered chatbots, copilots, or agents that need immediate protection against prompt manipulation, unsafe outputs, and data leakage during live AI interactions.

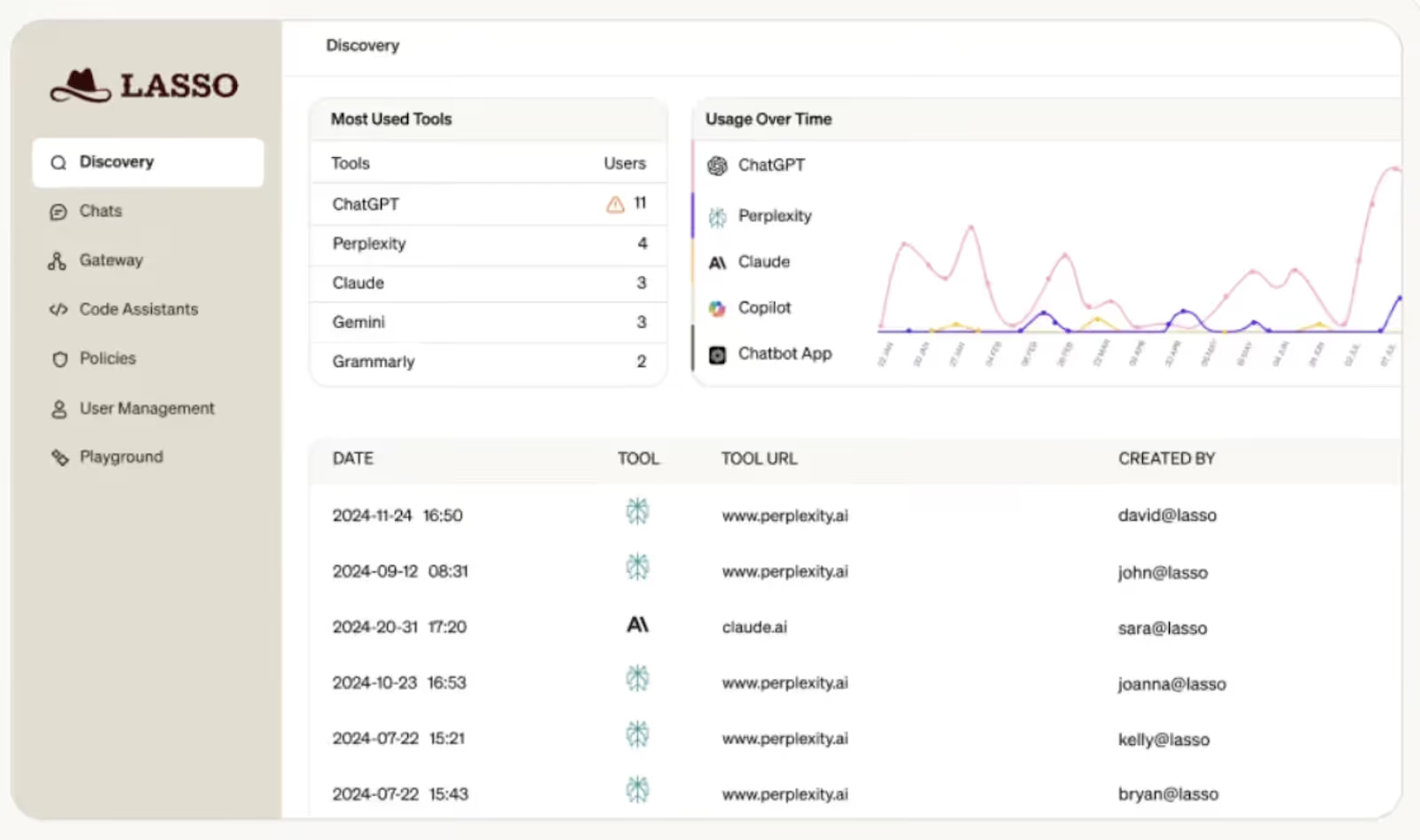

5. Lasso Security: AI Usage Control and Prompt Security Platform

Lasso Security is an AI security platform focused on controlling how employees use generative AI tools. It helps organizations prevent sensitive data exposure, enforce AI usage policies, and reduce risk from unsanctioned or unsafe interactions with LLM-based applications.

Key features

- Real-time inspection of prompts and responses to prevent data leakage

- Policy-based controls for approved and restricted AI usage

- Visibility into employee interactions with GenAI tools

- Detection of risky prompts and unsafe data-sharing behavior

- Lightweight deployment without disrupting productivity

Pros

- Strong focus on preventing sensitive data leakage via GenAI

- Simple policy enforcement for employee AI usage

- Effective for securing day-to-day AI interactions

Cons

- Limited coverage beyond prompt-level and usage controls

- Less suited for model-level or pipeline security

- Smaller feature set compared to full AI governance platforms

Use case: Lasso Security is best suited for organizations looking to control employee use of generative AI tools, reduce the risk of data leakage, and enforce safe AI usage policies without blocking adoption or slowing down teams.

6. Protect AI: Mobile Application Security Platform

Protectt.ai is a mobile application security platform designed to protect Android and iOS apps from runtime threats and fraud. It combines runtime application self-protection (RASP) and extended detection and response (XDR) to secure mobile apps in real time.

Key features

- Runtime Application Self-Protection (RASP) for Android and iOS apps

- Real-time threat detection and response for mobile app attacks

- Fraud prevention and protection against reverse engineering and tampering

- Compliance support for global security and privacy regulations

- Lightweight deployment with minimal impact on app performance

Pros

- Strong real-time protection for mobile applications

- Supports both Android and iOS platforms

- Fast deployment with minimal disruption to development cycles

Cons

- Primarily focused on mobile app security, not AI or LLM-specific risks

- Requires some technical expertise to configure and manage

- Limited relevance for organizations focused on SaaS or enterprise AI security

Use case: Protectt.ai is best suited for organizations that rely heavily on mobile applications and need runtime protection against mobile threats, fraud, and tampering, particularly in regulated or high-risk environments where mobile security is critical.

AI Security Posture Management

7. Aim Intelligence: AI Red Teaming and Runtime Safety Platform

Aim Security is an enterprise AI security platform focused on stress-testing, supervising, and protecting AI systems. It combines automated AI red teaming, runtime guardrails, and continuous supervision to help organizations identify vulnerabilities and enforce safety across production AI services.

Key features

- Automated AI red teaming with domain-specific attack simulation

- Real-time guardrails for prompt injection, jailbreaking, and data leakage

- Fast inference-time inspection with low latency for inputs and outputs

- Centralized AI supervision with monitoring and policy controls

- Continuous updates for new attack and defense techniques

Pros

- Deep expertise in AI attack research and adversarial testing

- Strong runtime protection with high detection accuracy

- Well-suited for complex, domain-specific AI deployments

Cons

- Primarily focused on model and prompt safety rather than SaaS governance

- May require AI-specific expertise to configure effectively

- Less emphasis on identity, access, or compliance workflows

Use case: Aim Security is ideal for organizations building or deploying advanced AI models that need rigorous red teaming, real-time protection, and continuous supervision to reduce the risk of prompt manipulation, unsafe outputs, and model misbehavior in production environments.

8. Noma Security: Unified Security for AI and Agents

Noma Security is a unified AI security and governance platform designed to protect AI models, pipelines, and autonomous agents. It provides end-to-end visibility, runtime protection, and compliance controls to help enterprises scale AI securely without slowing innovation.

Key features

- Continuous AI and agent discovery across the entire AI stack

- AI Security Posture Management (AI-SPM) with contextual risk insights

- Runtime protection against prompt injection, jailbreaks, and model abuse

- Governance and compliance controls aligned with emerging AI regulations

- Adaptive defenses for agentic and autonomous AI workflows

Pros

- Strong end-to-end visibility across AI infrastructure, models, and agents

- Purpose-built for agentic AI and rapidly evolving AI attack surfaces

- Well-suited for regulated and enterprise-scale environments

Cons

- Platform breadth may be heavy for early-stage AI teams

- Focused on AI security rather than broader SaaS or identity governance

- Implementation may require close collaboration between SecOps and MLOps

Use case: Noma Security fits enterprises running production GenAI and autonomous agents that need centralized visibility, real-time protection, and governance across the full AI lifecycle, especially in regulated industries where compliance, auditability, and agent behavior control are critical.

SOC / Detection Platforms Using AI

9. Crowdstrike Falcon

CrowdStrike Falcon with AI Analyst is an agentic security platform that uses AI-powered agents to automate detection, investigation, and response. Built on the Enterprise Graph, it unifies endpoint, identity, cloud, and AI security to accelerate SOC operations at enterprise scale.

Key features

- Enterprise Graph: AI-ready data layer unifying telemetry across the enterprise

- Charlotte AI Analyst: Automates alert triage, investigations, and response workflows

- Mission-ready agents: Prebuilt agents to handle repetitive SOC tasks end to end

- AgentWorks (no-code): Build, deploy, and govern custom security agents using natural language

- Unified console: Persona-aware dashboards with natural language querying

Pros

- Industry-leading detection and response coverage (validated by MITRE)

- Significant SOC efficiency gains through automated investigations

- Strong ecosystem spanning endpoint, identity, cloud, and AI security

Cons

- Platform breadth can be complex for smaller security teams

- Premium pricing compared to point security tools

- Best value realized when consolidating multiple security functions

Use case: CrowdStrike Falcon with AI Analyst is ideal for large and mid-market enterprises running high-volume SOC operations that want to automate alert triage, reduce analyst fatigue, and unify endpoint, cloud, identity, and AI security under a single agentic platform.

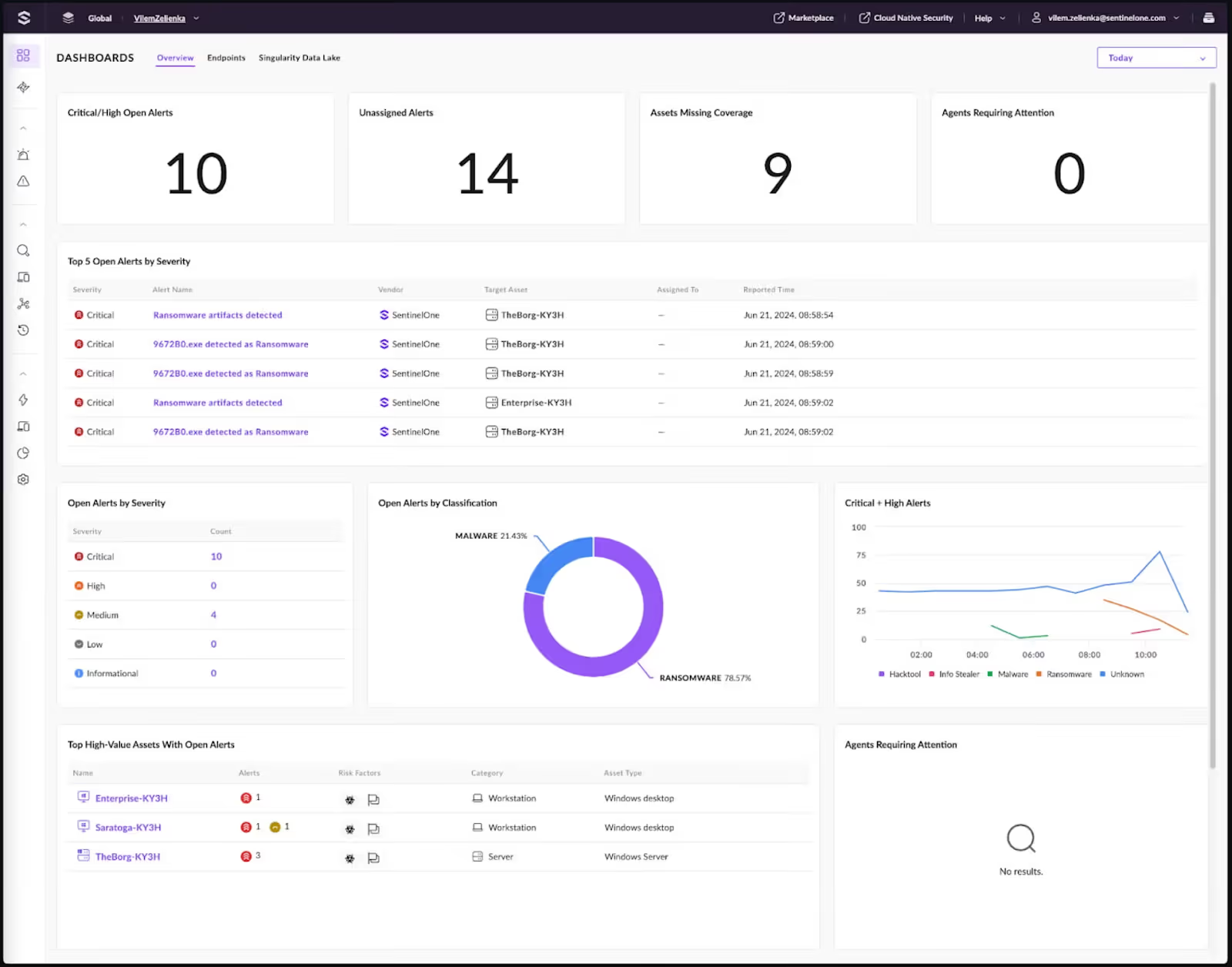

10. SentinelOne Singularity

SentinelOne Singularity is an autonomous, AI-powered cybersecurity platform that unifies endpoint, cloud, identity, and SIEM protection. It uses agentic and generative AI to prevent, detect, and respond to threats in real time, reducing analyst workload while stopping attacks before they spread.

Key features

- Autonomous endpoint protection: Real-time prevention, detection, and response without human intervention

- Singularity Cloud (CNAPP): Unified cloud security across workloads, identities, and configurations

- Purple AI: Generative and agentic AI that accelerates investigations and threat hunting

- AI-SIEM: High-speed ingestion, correlation, and response powered by AI

- Unified Singularity platform: Single console for endpoint, cloud, identity, and AI-driven security

Pros

- Industry-leading autonomous detection with strong MITRE ATT&CK performance

- Reduces SOC workload through AI-driven investigations and response

- Unified platform spanning endpoint, cloud, identity, and SIEM

Cons

- Premium pricing compared to standalone endpoint tools

- Platform depth can feel complex for small or early-stage teams

- Best value requires broader platform adoption, not point use

Use case: SentinelOne is well-suited for mid-market and enterprise organizations looking to consolidate endpoint, cloud, and SIEM security while using AI to stop threats autonomously, speed up investigations, and reduce dependency on manual SOC workflows.

The Core AI Security Risks Enterprises Face

AI risk doesn’t live in one place. It shows up at the moment of interaction, deep inside models and pipelines, and through the people and identities connected to those systems.

Understanding where risk originates makes it easier to decide what needs protection first.

1. Interaction-Level Risks: Where AI Meets Users and Systems

This is the most visible risk surface. Every prompt, response, and tool call becomes a potential control failure if it isn’t governed in real time.

- Prompts can be crafted to override instructions or extract sensitive context

- Outputs can unintentionally expose confidential data or regulated information

- Autonomous agents may execute actions that exceed their intended scope

- Seemingly normal queries can combine context in ways that reveal more than expected

These risks appear only at inference time, which is why static testing and pre-deployment checks rarely catch them.

2. Model and Pipeline Risks: What Happens Before and Behind the Scenes

AI systems rely on complex pipelines that pull in external components, data, and models. Small gaps here can create long-term exposure.

- Training data can be poisoned to influence future behavior

- Pre-trained or open-source models may contain hidden backdoors

- Model updates can drift from approved behavior without clear signals

- Inference and training pipelines may run with excessive permissions or weak isolation

Because these risks accumulate quietly, they often go unnoticed until outputs or decisions start causing harm.

3. Identity and Insider Risks: Who AI Acts For, and On Behalf Of

AI systems amplify access. When identity controls are loose, AI accelerates misuse rather than containing it.

- AI assistants may have broader access than the user expects

- Permissions granted for one role or project can persist indefinitely

- Insider misuse becomes harder to detect when actions are routed through AI tools

- AI-generated actions can blur accountability across teams and systems

In these scenarios, the issue isn’t malicious intent alone. It’s the lack of continuous alignment between identity, access, and actual usage.

The 4 Pillars of AI Security Platforms

Effective AI security doesn't come from one control bolted onto your stack. It comes from four capabilities working in sequence: discovery feeding into protection, protection feeding into governance, governance feeding into response. Miss one and the others don't hold.

Here's what each pillar actually means in practice.

Pillar One: Knowing What AI Exists

You can't secure what you can't see.

Models live inside SaaS apps, copilots, internal notebooks, data pipelines, and vendor tools often without formal IT approval.

Security teams are frequently the last to know a new AI feature shipped inside an existing platform. By the time it's flagged, it's already in active use.

AI security platforms solve this by maintaining a continuous, living inventory of AI assets across the environment:

- Models in production and in development

- Agents running automated workflows

- Datasets feeding model outputs

- APIs exposing AI capabilities to external systems

- Embedded AI features inside business applications (the ones that rarely get flagged)

The goal isn't a one-time audit. It's always-on visibility. When a new AI tool shows up: sanctioned or not, it surfaces immediately, along with what data it touches and who's using it.

Pillar Two: Controlling Behavior at Runtime

Most AI security failures don't happen during development.

They happen during live interaction, when prompts change, context shifts, and agents make decisions in real time that nobody anticipated.

Think about what that looks like in practice:

A finance copilot gets prompted in a way that extracts data it was never supposed to surface. An agent with write access to a CRM interprets an ambiguous instruction and modifies records it shouldn't touch. A customer-facing chatbot drifts outside its intended scope because nobody built an output boundary.

Runtime protection is the layer that catches this.

Platforms inspect inputs and outputs as they happen, enforce policy at the point of interaction, and limit what agents can access or execute mid-task. This isn't a firewall. It's behavioral enforcement at inference time active while the model is running, not just when it's deployed.

Pillar Three: Turning Policy Into Proof

Most organizations have an AI governance policy. Very few can prove it's actually being followed.

Documentation isn't enforcement.

A policy that says "all AI models accessing customer data must be reviewed quarterly" means nothing if there's no log showing the review happened, no record of who accessed what, and no audit trail connecting the policy to actual system behavior.

This pillar is about closing that gap. AI security platforms track:

- How models were trained and on what data

- Who accessed which models and when

- What outputs were generated and whether they triggered any policy flags

- Whether access controls were applied consistently across the environment

The output isn't a report for a compliance checkbox. It's evidence – the kind that holds up in an internal audit, a regulatory review, or a post-incident investigation.

Pillar Four: Acting on Risk Automatically

Detection without response is just an expensive alert system.

The challenge with AI risk is velocity. An agent can execute dozens of actions in the time it takes a human to review a single alert.

By the time a security analyst sees a flag, the downstream impact may already exist.

AI security platforms correlate signals across models, identities, data, and infrastructure to identify anomalous behavior and then act on it:

- A prompt injection attempt triggers an automatic block before the model responds

- An agent exceeding its authorized scope gets isolated mid-task

- A model accessing an unusual data source initiates a workflow for review

The goal isn't to remove human judgment. It's to compress the time between detection and containment so that human judgment happens before damage compounds, not after.

How to Choose the Right AI Security Platform

Focus on control, context, and coverage, not marketing claims. Use these criteria to make a clean call.

- Start with visibility: You should see every AI asset in use: models, agents, prompts, datasets, pipelines, and third-party tools. If it can’t discover shadow AI, it can’t secure it.

- Check runtime enforcement: Look for real-time guardrails at inference time. Policies must block unsafe prompts, data leakage, and unauthorized actions as they happen, not after the fact.

- Assess AI-native threat coverage: The platform should explicitly handle prompt injection, jailbreaks, model misuse, agent overreach, and data poisoning. Generic AppSec or CSPM isn’t enough.

- Verify governance and auditability: You need provable controls: policy versioning, decision logs, usage history, and clear evidence for audits tied to frameworks like NIST AI RMF or the EU AI Act.

- Evaluate identity and access context: Strong platforms understand who invoked the AI, what data was accessed, and which actions were triggered across users, agents, and service accounts.

- Prioritize automation over dashboards: Detection alone creates noise. Choose platforms that automate remediation, policy enforcement, and response without slowing development teams.

- Fit to your AI maturity: Early GenAI adoption needs fast guardrails and discovery. Advanced, agent-heavy environments need continuous monitoring, red teaming, and lifecycle governance.

FAQs

1. How can AI be used for security?

AI strengthens security by detecting patterns humans miss, correlating signals across large datasets, and responding faster than manual workflows. It’s used for threat detection, behavior analysis, anomaly detection, automated response, fraud prevention, and securing AI systems themselves at runtime.

2. What are the 5 best AI platforms?

There’s no single “best” list for every environment, but in 2026, these platforms are commonly evaluated for enterprise AI security:

- CloudEagle.ai

- Noma Security

- Cranium

- Aim Security

- CrowdStrike

Each focuses on different layers, such as governance, runtime protection, detection, or enterprise-wide threat response.

3. What is the best AI for security?

There isn’t a universal “best.” The right choice depends on what you’re securing:

- AI workflows and agents → AI-native security platforms

- Endpoints and infrastructure → AI-driven XDR platforms

- Governance and compliance → AI security posture and governance tools

The best platform is the one that matches your risk surface, AI maturity, and regulatory needs.

4. Which company is leading in AI security?

Leadership varies by category. Some companies lead in AI governance and runtime protection, others in AI-powered detection and response. The market is still evolving, and most enterprises use a combination rather than relying on a single vendor to cover all AI risks.

.avif)

%201.svg)

.avif)

.avif)

.avif)

.png)