HIPAA Compliance Checklist for 2025

It's 2:47 AM on a Tuesday.

Nobody is at a keyboard. But inside your network, an AI agent is moving. It's reading emails, accessing cloud storage, executing API calls, and making decisions on its own. It was deployed three months ago to automate procurement workflows. Nobody has updated its permissions since. Nobody thought to.

At 2:51 AM, it receives a task injected through a malicious prompt buried inside a vendor email it was asked to summarize. The agent doesn't question it. It was told to be helpful. It executes.

By morning, customer records are sitting on an attacker's server.

The firewall logs show nothing unusual. No one touched a single credential. That's not science fiction. That's the threat model your stack is up against right now.

And here's the part most CISOs miss: agentic AI security risks aren't a future problem. 88% of enterprises reported an AI agent security incident in the last twelve months. Only 21% had runtime visibility into what their agents were doing.

This article is about closing that gap. We'll walk through the seven agentic AI security risks that map to real breach incidents, the controls that actually stop them, and the framework leading enterprises are already converging on.

TL;DR

- 88% of enterprises reported an AI agent security incident last year. Only 6% of security budgets are allocated to agentic AI risk.

- The seven agentic AI security risks every CISO needs to govern: memory poisoning, tool misuse, identity and privilege abuse, cascading failures, supply chain compromise, goal hijack, and untraceable data leakage.

- Traditional IAM, DLP, and SIEM tools weren't built for autonomous software that reasons, remembers, and acts on its own.

- A workable agentic AI security framework runs in three phases: pre-deployment, pre-launch, and runtime.

- CloudEagle.ai gives CISOs a unified view of every agent identity, scopes permissions automatically, and revokes risky access before it cascades.

1. Why Your Existing Security Stack Can't See This

Your current stack was built for software that does what it's told.

Agents are not that.

They interpret goals. They plan multi-step actions. They call tools and chain APIs. They hold memory between sessions and act without per-action human review.

McKinsey calls them "digital insiders": entities that operate inside your systems with privilege and authority, capable of unintentional harm, or deliberate damage if compromised.

Three architectural shifts make agentic AI security risks fundamentally different from anything your SOC has handled before:

- Agents follow instructions in the content they process. That makes them useful. It also makes them exploitable. A prompt buried inside a vendor email or a poisoned PDF can redirect an agent's goals without triggering a single endpoint alert.

- Agents operate using credentials. They authenticate as service identities. They hold OAuth tokens. They chain tool calls through APIs that your IAM platform doesn't govern. According to a recent Okta survey, only 10% of organizations have a developed strategy for managing non-human and agentic identities.

- Failures cascade. A single compromised agent in a multi-agent workflow can spread bad data and false instructions across every connected system before a human reviews the first log line.

So the question for CISOs isn't "do we have a tool for this?"

It's "Do we have a governance model designed for software that thinks, acts, and remembers on its own?"

For most enterprises, the honest answer is no. Which brings us to the seven agentic AI security risks that should already be on your radar.

2. The 7 Agentic AI Security Risks Every CISO Needs to Govern

These are the seven risks that map directly to enterprise breach incidents in 2025 and 2026, drawn from OWASP's and McKinsey's emerging risk taxonomies.

Each one is a distinct failure mode. Treating agentic AI security risks as a single category is exactly how enterprises end up with the wrong controls in the wrong places.

1. Memory poisoning

Agents remember. That memory is an attack surface.

Adversaries inject malicious data through indirect prompt injection. The agent then carries that corrupted context into every future decision, including decisions affecting other users and other agents.

Why this is different: most traditional applications keep little state between sessions, so a compromise usually hits one session. With agents, a single poisoned memory entry persists for days or weeks.

Anthropic research on training-data poisoning demonstrated that as few as 250 malicious documents can backdoor a large language model, which suggests how little corrupted content an attacker needs. The barrier to exploitation is low. The blast radius is wide and long-lived.

2. Tool misuse

Agents call tools to get work done. APIs. Databases. Code execution environments. MCP servers.

Now imagine an attacker who chains a permitted data retrieval function with a poorly sandboxed code execution tool. They exfiltrate data through pathways no individual security control was designed to anticipate.

The OWASP Agentic Security Initiative ranks tool misuse among the top three concerns for agentic deployments, alongside memory poisoning and identity abuse.
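One way to picture a control for the chaining attack described above is a per-session guard that remembers when an agent has read sensitive data and then refuses risky follow-on tools. The sketch below is a minimal illustration, not a reference implementation; the tool names, the `ToolSession` class, and the two sets are assumptions made up for this example.

```python
from dataclasses import dataclass, field

# Hypothetical tool names, chosen for illustration only.
SENSITIVE_SOURCES = {"crm.read_customers", "hr.read_records"}
RISKY_SINKS = {"code.execute", "http.post_external"}


@dataclass
class ToolSession:
    """Tracks whether an agent session has touched sensitive data."""

    agent_id: str
    tainted: bool = False
    history: list[str] = field(default_factory=list)

    def authorize(self, tool: str) -> bool:
        """Return True if the call may proceed, False if the chain is blocked."""
        # Once sensitive data is in context, risky sinks are denied.
        if self.tainted and tool in RISKY_SINKS:
            self.history.append(f"DENY {tool}")
            return False
        if tool in SENSITIVE_SOURCES:
            self.tainted = True
        self.history.append(f"ALLOW {tool}")
        return True


session = ToolSession("procurement-agent")
session.authorize("code.execute")        # allowed: nothing sensitive read yet
session.authorize("crm.read_customers")  # allowed, but marks the session tainted
session.authorize("code.execute")        # denied: sensitive read followed by a risky sink
```

Each tool is permitted on its own; the guard only blocks the combination, which is the gap that per-tool controls tend to miss.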

3. Identity and privilege abuse

This is the most common agentic AI security risk by a wide margin.

Most agents inherit human credentials or operate under shared service accounts. That single decision breaks attribution. It breaks the audit. It creates a privilege escalation path that no human user could exploit at agent speed.

The numbers tell the story. Post-incident analysis of 2025 and 2026 agent-involved breaches found 78% of agents had significantly broader permission scopes than their function required.

Why? Under delivery pressure, teams over-provision and intend to tighten permissions later.

Later rarely arrives.

4. Cascading failures across multi-agent systems

A flaw in one agent cascades to others.

Picture a healthcare scenario. A compromised scheduling agent escalates a fake clinical request. The clinical agent trusts the delegated authority. Patient data leaves the environment without a single security alert firing. Agent-to-agent trust mechanisms become the attack vector.

Cloudflare's November 2024 logging incident showed the same pattern at infrastructure scale: a single misconfiguration cascaded through backup systems and lost 55% of customer logs over 3.5 hours.

Now imagine that same failure mode running through 50 agents with privileged access.

5. Agentic supply chain compromise

Agents depend on a long chain of upstream components. Foundation models. Open-source frameworks. MCP servers. Plugins. Libraries.

Each one is an integrity gap.

A poisoned plugin or compromised model weight propagates silently to every deployment that pulls it in. Attackers only need to compromise one obscure component to gain access to an entire system acting on its own.

6. Goal hijack

Hidden prompts can redirect an agent's goals without breaking any access control.

The OWASP framework cites EchoLeak and similar exploits where copilots became silent exfiltration engines. The agent does exactly what the attacker wants while appearing to follow legitimate instructions. Goal hijack is now ranked the top OWASP agentic risk for 2026 (ASI01).

7. Untraceable data leakage

Agents talk to each other. They talk to APIs. They talk to external services.

Unless every data exchange is logged and audited, leaks become invisible.

McKinsey describes the pattern: an autonomous customer support agent shares unneeded PII with a fraud detection agent to resolve a query. The exchange is never logged. The breach happens. Nobody sees it.

If you can't answer "which agent accessed what data, on whose authority, and where did the output go" in minutes, you don't have agentic AI governance.

You have agentic AI exposure.

3. Agentic AI Risks and Controls: What Actually Stops Each One

Every agentic AI security risk above maps to a specific control. The trick is knowing which control matters most for which risk.

The table below pairs each agentic AI vulnerability with the control that addresses it directly.

The thread running through every control is the same: treat each agent as a distinct, identifiable workload. Give it its own credentials. Scope its permissions. Maintain its own audit trail. Build it with a kill switch.

The moment you treat agents as anonymous extensions of human users, every other control collapses, and so does your defense against agentic AI security risks.
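As a rough sketch of what "a distinct, identifiable workload" can mean in practice, the example below gives an agent its own identity, a scoped permission set, its own audit trail, and a kill switch. The class name, field names, action strings, and owner address are all assumptions for illustration; a real deployment would back this with an identity provider rather than an in-memory object.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentIdentity:
    """One agent, one identity, one permission scope, one audit trail."""

    agent_id: str
    owner: str                      # named human accountable for the agent
    allowed_actions: frozenset[str]
    enabled: bool = True            # flipped to False by the kill switch
    audit_trail: list[dict] = field(default_factory=list)

    def kill(self) -> None:
        """Kill switch: every later request is denied."""
        self.enabled = False

    def request(self, action: str, resource: str) -> bool:
        """Check the action against the scope and record the decision."""
        allowed = self.enabled and action in self.allowed_actions
        self.audit_trail.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": self.agent_id,
            "action": action,
            "resource": resource,
            "allowed": allowed,
        })
        return allowed


agent = AgentIdentity(
    agent_id="procurement-agent-01",
    owner="jane.doe@example.com",   # hypothetical owner
    allowed_actions=frozenset({"po.read", "po.create"}),
)
agent.request("po.read", "PO-1001")       # in scope
agent.request("crm.export", "customers")  # out of scope, denied and logged
agent.kill()
agent.request("po.read", "PO-1002")       # denied after the kill switch
```

Denied requests are logged alongside allowed ones, so the trail shows what the agent attempted as well as what it did.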

CISA reinforces this in its guidance on the careful adoption of agentic AI services. The recommendations are concrete:

- Network segmentation by default.

- Human approval for high-risk actions.

- Tamper-proof audit logs retained for at least 90 days.
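To make the audit log recommendation more concrete, here is a minimal sketch of a hash-chained log, where each entry commits to the one before it. Strictly speaking this makes the log tamper-evident rather than tamper-proof, and it says nothing about retention; the function names and event fields are assumptions for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def append_entry(log: list[dict], event: dict) -> dict:
    """Append an event whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    payload = json.dumps(event, sort_keys=True)
    digest = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
    entry = {"event": event, "prev_hash": prev_hash, "hash": digest}
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        payload = json.dumps(entry["event"], sort_keys=True)
        expected = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, {"agent": "support-agent", "action": "read", "resource": "ticket-42"})
append_entry(log, {"agent": "support-agent", "action": "share", "resource": "ticket-42"})
print(verify_chain(log))
```

An attacker who can rewrite the whole log can also rebuild the chain, so in practice the latest hash would be anchored somewhere the agent cannot write.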

Once you have this mapping, the next question is how to operationalize it. That's where most enterprises get stuck.

4. The Agentic AI Security Framework CISOs Are Converging On

There's no shortage of frameworks: OWASP, NIST AI RMF, McKinsey's playbook, the EU AI Act, and FINRA. Each one captures part of the picture for managing agentic AI security risks.

When you map them side by side, the same three-phase structure shows up everywhere.

Phase 1: Before deployment

This is foundation work. Get it wrong, and every downstream control becomes shaky.

- Update your AI policy and IAM to account for non-human autonomous actors.

- Extend third-party risk management to cover agents acquired from vendors.

- Define ownership for every agent. Every agent needs a named human accountable for its actions.

- Update your risk taxonomy. Frameworks like ISO 27001 and NIST CSF do not yet cover agents that act with discretion and adaptability.

Phase 2: Before launching a use case

This is where most enterprises drop the ball. They jump from policy to production without an inventory of what's actually being built.

Maintain a centralized portfolio of every agent in development or production. For each agent, document:

- The foundational model

- Hosting location and data sources accessed

- Criticality and data sensitivity

- Access rights and inter-agent dependencies

Without this inventory, you're managing risk for things you can't see.
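The inventory fields listed above translate naturally into a structured record. The sketch below is one possible shape, assuming hypothetical field names and a small helper that flags inter-agent dependencies pointing at agents missing from the portfolio.

```python
from dataclasses import dataclass
from enum import Enum


class Criticality(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class AgentRecord:
    """One row in the agent portfolio. Field names are illustrative."""

    name: str
    owner: str
    foundation_model: str
    hosting_location: str
    data_sources: tuple[str, ...]
    criticality: Criticality
    data_sensitivity: str
    access_rights: tuple[str, ...]
    depends_on: tuple[str, ...] = ()


def missing_dependencies(portfolio: dict[str, AgentRecord]) -> dict[str, list[str]]:
    """Map each agent to the dependencies that are not in the inventory."""
    gaps: dict[str, list[str]] = {}
    for name, record in portfolio.items():
        unknown = [dep for dep in record.depends_on if dep not in portfolio]
        if unknown:
            gaps[name] = unknown
    return gaps


portfolio = {
    "scheduler": AgentRecord(
        name="scheduler",
        owner="ops-lead@example.com",        # hypothetical owner
        foundation_model="example-model-v1",  # hypothetical model name
        hosting_location="eu-west",
        data_sources=("calendar",),
        criticality=Criticality.MEDIUM,
        data_sensitivity="internal",
        access_rights=("calendar.read", "calendar.write"),
        depends_on=("clinical",),
    ),
}
print(missing_dependencies(portfolio))
```

Here the scheduler depends on a "clinical" agent that nobody has registered, which is exactly the kind of blind spot the inventory is meant to surface.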

Phase 3: During runtime

This is where everything you set up earlier actually does its work.

- Authenticate agent-to-agent communication. No anonymous calls, ever.

- Apply IAM to agents the same way you do to humans, with input and output guardrails on top.

- Trace every action and decision in real time. This is the difference between detecting a breach in minutes versus weeks.

- Maintain a contingency plan that includes kill switches and isolated sandbox environments for every critical agent.
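For the first runtime control, authenticated agent-to-agent communication, a minimal sketch is shown below using HMAC signatures from the Python standard library. It assumes each agent has a shared secret registered with the receiver, which is a simplification; mutual TLS or asymmetric signatures are common alternatives, and the sketch does not address replay protection. Function names and message fields are hypothetical.

```python
import hashlib
import hmac
import json


def _canonical(sender: str, body: dict) -> bytes:
    """Serialize sender and body deterministically before signing."""
    return json.dumps({"sender": sender, "body": body}, sort_keys=True).encode("utf-8")


def sign_message(key: bytes, sender: str, body: dict) -> dict:
    """Attach an HMAC-SHA256 tag so the receiver can check origin and integrity."""
    tag = hmac.new(key, _canonical(sender, body), hashlib.sha256).hexdigest()
    return {"sender": sender, "body": body, "tag": tag}


def verify_message(keys: dict[str, bytes], message: dict) -> bool:
    """Reject messages from unknown senders or with a bad tag."""
    sender = message.get("sender", "")
    key = keys.get(sender)
    if key is None:
        return False  # no anonymous calls
    expected = hmac.new(
        key, _canonical(sender, message.get("body", {})), hashlib.sha256
    ).hexdigest()
    # Constant-time comparison avoids leaking how much of the tag matched.
    return hmac.compare_digest(expected, str(message.get("tag", "")))


registered_keys = {"scheduler": b"demo-secret-not-for-production"}
msg = sign_message(registered_keys["scheduler"], "scheduler", {"task": "book_slot"})
print(verify_message(registered_keys, msg))
```

A receiving agent that runs `verify_message` before acting no longer has to trust delegated authority on the sender's word alone.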

This framework isn't theoretical. The FINRA 2026 Oversight Report recommends explicit human checkpoints before agents act or transact.

The EU AI Act applies high-risk system requirements to many enterprise agent deployments. Each of these treats agentic AI security risks as material risks, not theoretical ones.

The regulatory direction is clear, even if the rules are still being finalized.

For a deeper walkthrough of governance specifically, see our guide on agentic AI governance challenges and best practices. It covers role-based privilege segmentation and lifecycle controls in more depth than fits here.

5. How CloudEagle.ai Helps CISOs Govern Agentic AI Security Risks

CloudEagle.ai is an AI-powered SaaS management, security, and identity governance platform that gives enterprises a single command center to discover, govern, and secure both human and non-human identities across their SaaS and AI ecosystem.

Agentic AI security risks live in the space between human-centric IAM tools and the autonomous agents your teams are deploying. CloudEagle.ai closes that space.

Here's how, mapped to the risks above.

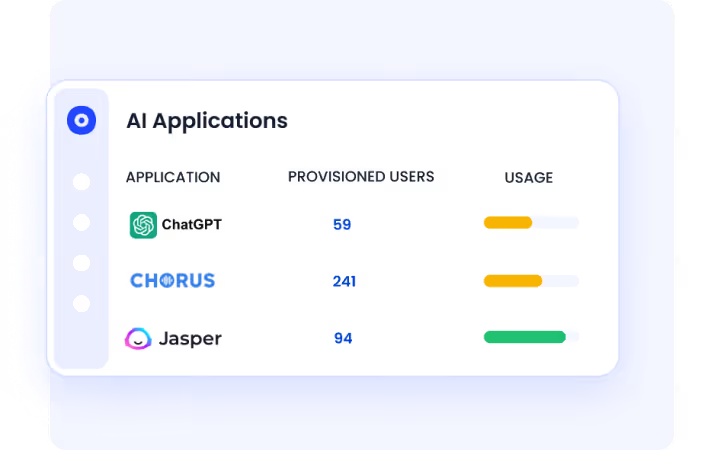

a) Discover every AI agent and tool, including the ones bypassing IT

Most agentic AI security risks start as shadow deployments. Teams spin up agents and AI tools outside IT visibility, often using personal accounts or OAuth tokens that never touch SSO. You can't govern what you can't see.

CloudEagle.ai solves this by discovering every AI tool and agent across your environment, correlating login activity, browser data, OAuth signals, and expense logs to build a complete inventory. This includes the agents and tools that traditional IAM platforms miss entirely.

The result is complete visibility into every AI agent in your stack before risk turns into an incident.

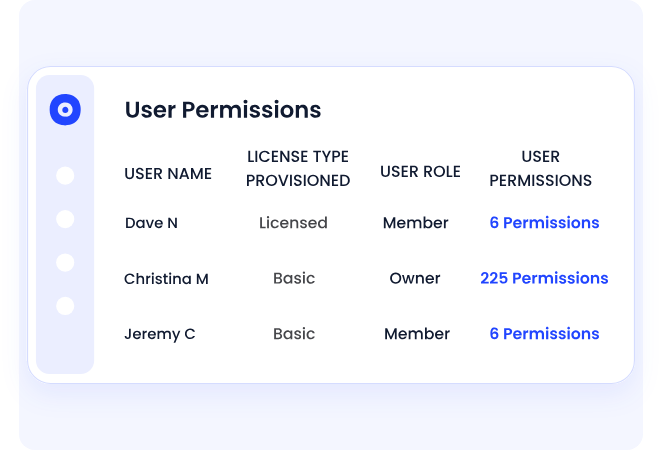

b) Govern non-human and agent identities the same way you govern humans

Agents inherit human credentials or run on shared service accounts. That breaks attribution, breaks audit, and makes privilege escalation almost invisible.

CloudEagle.ai fixes this by treating every agent and service account as a first-class identity with defined ownership, a scoped permission boundary, and a full activity log. Once that's in place, every agent action becomes attributable to a specific identity. Every privilege gets reviewed on a schedule. Orphaned identities stop accumulating.

DataStax saw exactly this shift when they brought CloudEagle.ai in to clean up fragmented access requests across their SaaS stack.

“We didn’t have a clear system to manage employee access. App requests came through different channels, access stayed active longer than needed, and ownership was unclear. CloudEagle helped us centralize access requests, apply time-bound controls, and bring structure to identity governance.”

~ Pushkala Pattabhiraman, Head of Security and Productivity, DataStax

Read the full story: How CloudEagle Streamlined App Access Requests with Policy-Based Automation

c) Enforce least privilege and just-in-time access

Agents are routinely deployed with permissions far broader than their job requires. Under delivery pressure, teams over-provision and intend to clean it up later. Later doesn't come, and the over-permissioning becomes the breach path.

CloudEagle.ai prevents that by applying role-based and time-bound access controls across SaaS and AI tools. Permissions expand only when needed, then revoke automatically when the task is done.

The outcome is that agents operate on least privilege by default, and your audit posture stops depending on someone remembering to tighten access.
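The general idea behind time-bound access can be sketched in a few lines. The example below is a generic illustration of expiring grants and is not CloudEagle.ai's implementation; the `Grant` and `GrantStore` names, the permission strings, and the explicit `now` parameter are assumptions chosen to keep the example testable.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Grant:
    agent_id: str
    permission: str
    expires_at: datetime


class GrantStore:
    """Holds permissions that lapse on their own once the TTL passes."""

    def __init__(self) -> None:
        self._grants: list[Grant] = []

    def grant(self, agent_id: str, permission: str, ttl: timedelta, now: datetime) -> Grant:
        new_grant = Grant(agent_id, permission, now + ttl)
        self._grants.append(new_grant)
        return new_grant

    def is_allowed(self, agent_id: str, permission: str, now: datetime) -> bool:
        # Drop expired grants first, so access never outlives its window.
        self._grants = [g for g in self._grants if g.expires_at > now]
        return any(
            g.agent_id == agent_id and g.permission == permission
            for g in self._grants
        )


store = GrantStore()
start = datetime(2026, 1, 6, 2, 47, tzinfo=timezone.utc)
store.grant("procurement-agent", "vendor.email.read", timedelta(minutes=15), now=start)
store.is_allowed("procurement-agent", "vendor.email.read", now=start + timedelta(minutes=5))
store.is_allowed("procurement-agent", "vendor.email.read", now=start + timedelta(minutes=20))
```

The default here is denial: with no live grant, the agent has no access, so nobody has to remember to tighten permissions later.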

d) Continuously monitor agent activity and detect anomalies

Many agentic AI security risks only show up at runtime. A poisoned memory entry or a hijacked goal looks normal in a static permissions review.

CloudEagle.ai catches what static reviews miss by continuously monitoring agent activity across your stack, flagging unusual access patterns, tool-usage spikes, and behavior that deviates from established baselines.

That's the difference between catching a cascading failure at the first agent and discovering it at the fiftieth.
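As a generic illustration of "behavior that deviates from established baselines", the sketch below flags a tool-usage spike with a simple z-score. It is not a description of how CloudEagle.ai detects anomalies; the function name, the threshold of 3.0, and the hourly call counts are assumptions, and production systems typically use richer signals than a single count.

```python
from statistics import mean, stdev


def is_spike(baseline: list[int], current: int, threshold: float = 3.0) -> bool:
    """Return True if `current` sits far above the baseline mean.

    Uses a z-score against the sample standard deviation. With fewer
    than two baseline points there is nothing to compare against.
    """
    if len(baseline) < 2:
        return False
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any increase counts as a deviation.
        return current > mu
    return (current - mu) / sigma > threshold


# Hypothetical hourly tool-call counts for one agent.
hourly_calls = [10, 12, 11, 9, 10, 11, 12]
is_spike(hourly_calls, 12)  # within the normal range
is_spike(hourly_calls, 60)  # far above anything in the baseline
```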

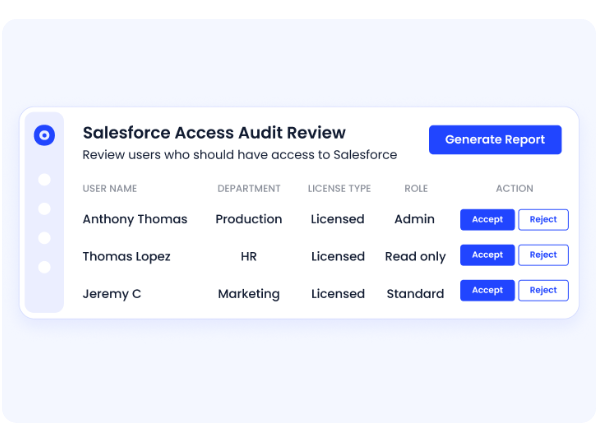

e) Automated access reviews and complete audit trails

When auditors ask for proof, most teams scramble to reconstruct who had access to what and when. With agents, that reconstruction is nearly impossible after the fact.

CloudEagle.ai removes that scramble entirely by logging every access grant, revocation, and approval automatically. Reports generate on demand for SOC 2, HIPAA, and EU AI Act requirements.

Audits stop being a fire drill. The evidence is already there.

EagleEye, CloudEagle.ai's agentic AI layer, runs all of this in real time. It identifies overprivileged agents, initiates deprovisioning workflows, and surfaces compliance gaps before they reach a leadership review.

See how EagleEye runs the SaaS and AI lifecycle.

6. Don't Wait for the First Incident

Agentic AI is already inside your environment. The question is whether you can see it, govern it, and shut it down when something goes wrong.

The agentic AI security risks above won't wait for your next audit cycle. Book a demo with CloudEagle.ai and get visibility and control over every AI agent in your stack before the next incident becomes your incident.

7. FAQs

1. What are the vulnerabilities in agentic AI?

A: The main agentic AI vulnerabilities are memory poisoning, tool misuse, identity and privilege abuse, cascading failures, supply chain compromise, goal hijack, and untraceable data leakage.

2. Is it safe to use agentic AI?

A: Agentic AI can be used safely when identity-first controls and least privilege access are in place. Without runtime monitoring, kill switches, and audit trails, agents become high-privilege insider threats.

3. Which risk is most associated with agentic AI systems?

A: Identity and privilege abuse. Post-incident analysis of 2025 and 2026 agent-involved breaches found that 78% of agents had significantly broader permissions than their function required.

4. What are the risk controls for agentic AI?

A: Core controls include unique cryptographic identity per agent, least privilege, scoped tool permissions, network segmentation, runtime monitoring, and human approval for high-impact actions.
